Great, thanks Timothy! @Ilya_Yusupov, do you think you could cast your eye over the MQ issues we are having?

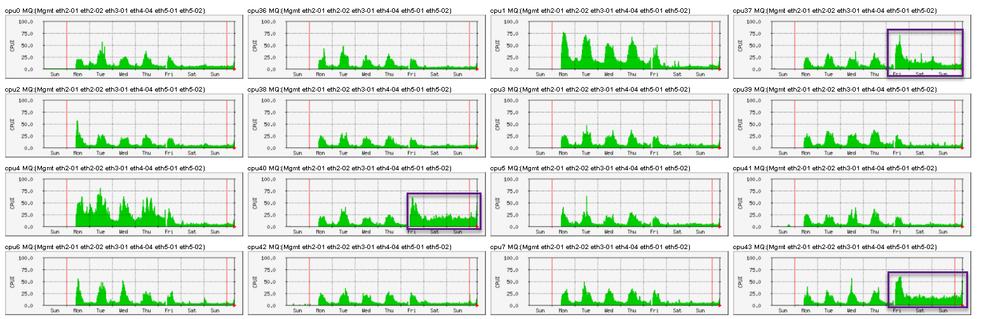

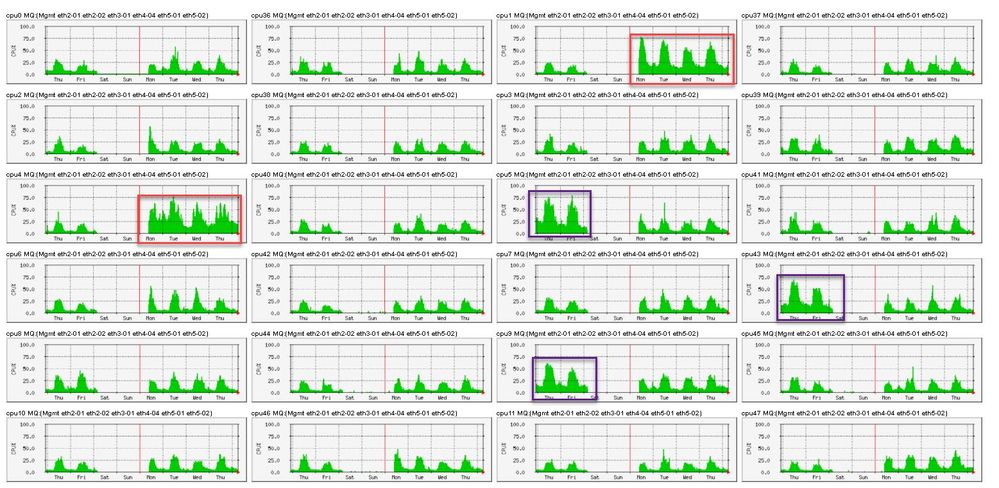

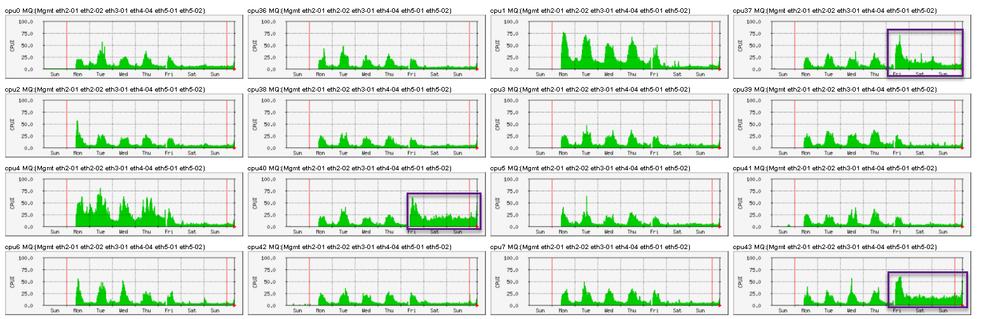

I have actually changed the MQ allocation to give 16 cores to the 40Gbps interfaces and 8 cores to the 10Gbps interfaces (instead of all of them sharing 24).

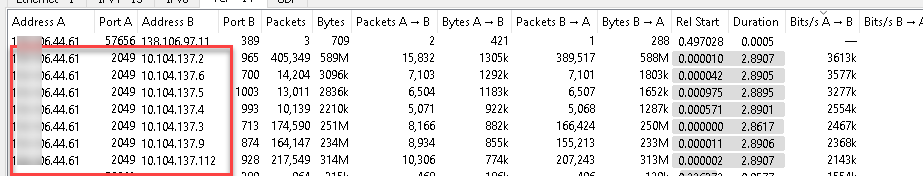

But the result is the same for 40Gbps: 3 interfaces are still taking considerably higher load.

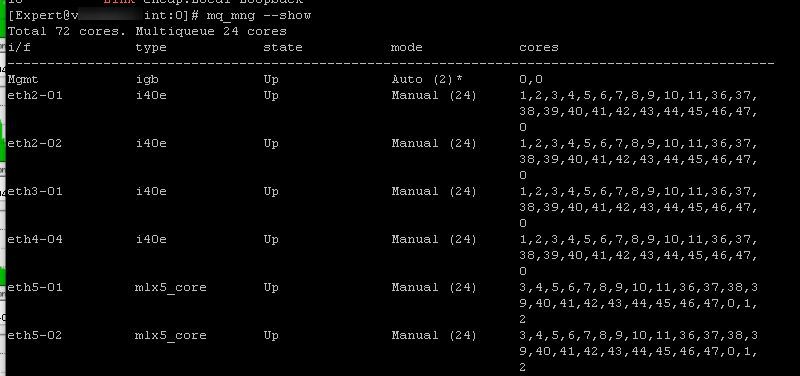

mq_mng --show -vvv

Total 72 cores. Multiqueue 24 cores: 0,36,1,37,2,38,3,39,4,40,5,41,6,42,7,43,8,44,9,45,10,46,11,47

i/f type state mode cores

------------------------------------------------------------------------------------------------

Mgmt igb Up Auto (2)* 0(235),0(1205)

eth2-01 i40e Up Manual (8) 9(878),10(879),11(880),44(881),45(882),46(883),47(884),8(885)

eth2-02 i40e Up Manual (8) 9(960),10(961),11(962),44(963),45(964),46(965),47(966),8(967)

eth3-01 i40e Up Manual (8) 9(533),10(534),11(535),44(536),45(537),46(538),47(539),8(540)

eth4-04 i40e Up Manual (8) 9(384),10(385),11(386),44(387),45(388),46(389),47(390),8(391)

eth5-01 mlx5_core Up Manual (16) 3(322),4(323),5(324),6(325),7(326),36(327),37(328),38(329),39(330),40(331),41(332),42(333),43(334),0(335),1(336),2(337)

eth5-02 mlx5_core Up Manual (16) 3(472),4(473),5(474),6(475),7(476),36(477),37(478),38(479),39(480),40(481),41(482),42(483),43(484),0(485),1(486),2(487)

* Management interface

------------------------------------------------------------------------------------------------

Mgmt <igb> max 8 cur 2

5e:00.0 Ethernet controller: Intel Corporation I350 Gigabit Network Connection (rev 01)

------------------------------------------------------------------------------------------------

eth2-01 <i40e> max 72 cur 8

af:00.0 Ethernet controller: Intel Corporation Ethernet Controller X710 for 10GbE SFP+ (rev 02)

------------------------------------------------------------------------------------------------

eth2-02 <i40e> max 72 cur 8

af:00.1 Ethernet controller: Intel Corporation Ethernet Controller X710 for 10GbE SFP+ (rev 02)

------------------------------------------------------------------------------------------------

eth3-01 <i40e> max 72 cur 8

3c:00.0 Ethernet controller: Intel Corporation Ethernet Controller X710 for 10GbE SFP+ (rev 02)

------------------------------------------------------------------------------------------------

eth4-04 <i40e> max 72 cur 8

3b:00.3 Ethernet controller: Intel Corporation Ethernet Controller X710 for 10GbE SFP+ (rev 02)

------------------------------------------------------------------------------------------------

eth5-01 <mlx5_core> max 60 cur 16

86:00.0 Ethernet controller: Mellanox Technologies MT27700 Family [ConnectX-4]

------------------------------------------------------------------------------------------------

eth5-02 <mlx5_core> max 60 cur 16

86:00.1 Ethernet controller: Mellanox Technologies MT27700 Family [ConnectX-4]

core interfaces queue irq rx packets tx packets

------------------------------------------------------------------------------------------------

0 eth5-01 eth5-01-13 335 14350733832 14275579034

eth5-02 eth5-02-13 485 14466343063 17243631242

Mgmt Mgmt-TxRx-0 235 78534394 64112480

Mgmt-TxRx-1 1205 67134729 459077031

1 eth5-01 eth5-01-14 336 18381027498 16258562430

eth5-02 eth5-02-14 486 17465071873 15859764318

2 eth5-01 eth5-01-15 337 15268826290 14474595992

eth5-02 eth5-02-15 487 14332288014 47882368284

3 eth5-01 eth5-01-0 322 16870013293 16103167502

eth5-02 eth5-02-0 472 18391705437 16770347908

4 eth5-01 eth5-01-1 323 18341870980 42982282750

eth5-02 eth5-02-1 473 53056458377 18790530142

5 eth5-01 eth5-01-2 324 18092841969 15479879244

eth5-02 eth5-02-2 474 17678594518 17759340911

6 eth5-01 eth5-01-3 325 18569966356 17374626959

eth5-02 eth5-02-3 475 19207199286 16473895178

7 eth5-01 eth5-01-4 326 16371197169 55042906070

eth5-02 eth5-02-4 476 17966599526 16614132655

8 eth2-01 i40e-eth2-01-TxRx-7 885 3741853166 2042916585

eth2-02 i40e-eth2-02-TxRx-7 967 791080580 1834917140

eth4-04 i40e-eth4-04-TxRx-7 391 18 82

eth3-01 i40e-eth3-01-TxRx-7 540 1978429151 2062247563

9 eth2-01 i40e-eth2-01-TxRx-0 878 4125404939 2312180264

eth2-02 i40e-eth2-02-TxRx-0 960 923577271 2950661062

eth4-04 i40e-eth4-04-TxRx-0 384 0 163

eth3-01 i40e-eth3-01-TxRx-0 533 2039654091 1910504944

10 eth2-01 i40e-eth2-01-TxRx-1 879 3708196131 1858716440

eth2-02 i40e-eth2-02-TxRx-1 961 723852572 2140074966

eth4-04 i40e-eth4-04-TxRx-1 385 0 21

eth3-01 i40e-eth3-01-TxRx-1 534 1767080577 2109527000

11 eth2-01 i40e-eth2-01-TxRx-2 880 3415244805 1952244111

eth2-02 i40e-eth2-02-TxRx-2 962 743700658 1828484239

eth4-04 i40e-eth4-04-TxRx-2 386 0 173

eth3-01 i40e-eth3-01-TxRx-2 535 2094555319 2140296068

36 eth5-01 eth5-01-5 327 16564644126 19758585623

eth5-02 eth5-02-5 477 16177811733 17520582730

37 eth5-01 eth5-01-6 328 31472538636 16944805026

eth5-02 eth5-02-6 478 25255422251 21348269114

38 eth5-01 eth5-01-7 329 15736296142 16989047395

eth5-02 eth5-02-7 479 17850328811 17267073118

39 eth5-01 eth5-01-8 330 14548417778 17227449231

eth5-02 eth5-02-8 480 17253608323 15922302163

40 eth5-01 eth5-01-9 331 17246160429 31679890308

eth5-02 eth5-02-9 481 46504867542 24976635307

41 eth5-01 eth5-01-10 332 14887319564 16507515513

eth5-02 eth5-02-10 482 15862234756 17366704751

42 eth5-01 eth5-01-11 333 18121039578 16932088569

eth5-02 eth5-02-11 483 14088983186 14722704253

43 eth5-01 eth5-01-12 334 36432500242 48388767915

eth5-02 eth5-02-12 484 26046626514 16665977419

44 eth2-01 i40e-eth2-01-TxRx-3 881 3719372336 2047174513

eth2-02 i40e-eth2-02-TxRx-3 963 768559972 2026740212

eth4-04 i40e-eth4-04-TxRx-3 387 0 24

eth3-01 i40e-eth3-01-TxRx-3 536 2003820593 1920066858

45 eth2-01 i40e-eth2-01-TxRx-4 882 3478124337 2217071136

eth2-02 i40e-eth2-02-TxRx-4 964 835661452 1880955919

eth4-04 i40e-eth4-04-TxRx-4 388 0 18

eth3-01 i40e-eth3-01-TxRx-4 537 1939612139 2095272331

46 eth2-01 i40e-eth2-01-TxRx-5 883 3146040696 1984205490

eth2-02 i40e-eth2-02-TxRx-5 965 878741983 1986164797

eth4-04 i40e-eth4-04-TxRx-5 389 187164163 18

eth3-01 i40e-eth3-01-TxRx-5 538 1663650364 2043452656

47 eth2-01 i40e-eth2-01-TxRx-6 884 3657784843 1765152235

eth2-02 i40e-eth2-02-TxRx-6 966 824913871 2045747277

eth4-04 i40e-eth4-04-TxRx-6 390 0 541744470

eth3-01 i40e-eth3-01-TxRx-6 539 1773453351 1789497778
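To put a number on which queues are running hot, the per-core table above can be fed through a short script. This is only a rough sketch, not anything `mq_mng` provides: the helper names `parse_core_table` and `hot_queues`, and the "more than 1.5x the median" threshold, are my own assumptions. It also assumes the whitespace-collapsed layout seen in this post, where continuation rows omit the core (and sometimes the interface) and inherit them from the previous row.

```python
from statistics import median

# Excerpt of the per-core table from this post (cores 3 to 7 only).
SAMPLE = """\
core interfaces queue irq rx packets tx packets
3 eth5-01 eth5-01-0 322 16870013293 16103167502
eth5-02 eth5-02-0 472 18391705437 16770347908
4 eth5-01 eth5-01-1 323 18341870980 42982282750
eth5-02 eth5-02-1 473 53056458377 18790530142
5 eth5-01 eth5-01-2 324 18092841969 15479879244
eth5-02 eth5-02-2 474 17678594518 17759340911
6 eth5-01 eth5-01-3 325 18569966356 17374626959
eth5-02 eth5-02-3 475 19207199286 16473895178
7 eth5-01 eth5-01-4 326 16371197169 55042906070
eth5-02 eth5-02-4 476 17966599526 16614132655
"""


def parse_core_table(text):
    """Parse per-core rows into dicts with core, iface, queue, irq, rx, tx.

    Full rows have 6 fields. Rows with 5 fields omit the core, rows with
    4 fields omit the core and the interface; missing values are carried
    over from the previous row.
    """
    rows = []
    core = iface = None
    for line in text.splitlines():
        fields = line.split()
        # Data rows end in three integers (irq, rx, tx); skip everything else.
        if len(fields) not in (4, 5, 6) or not all(f.isdigit() for f in fields[-3:]):
            continue
        if len(fields) == 6:
            core, iface = int(fields[0]), fields[1]
        elif len(fields) == 5:
            iface = fields[0]
        irq, rx, tx = (int(f) for f in fields[-3:])
        rows.append({"core": core, "iface": iface, "queue": fields[-4],
                     "irq": irq, "rx": rx, "tx": tx})
    return rows


def hot_queues(rows, iface, counter="rx", factor=1.5):
    """Return (core, queue, count) for queues of `iface` above factor x median."""
    mine = [r for r in rows if r["iface"] == iface]
    if not mine:
        return []
    threshold = factor * median(r[counter] for r in mine)
    return [(r["core"], r["queue"], r[counter]) for r in mine if r[counter] > threshold]


if __name__ == "__main__":
    rows = parse_core_table(SAMPLE)
    for core, queue, count in hot_queues(rows, "eth5-02", "rx"):
        print(f"core {core}: {queue} rx={count}")
```

On the five-core excerpt this flags `eth5-02-1` on core 4 for rx, and `eth5-01-1` (core 4) and `eth5-01-4` (core 7) for tx. Assuming these counters are cumulative, they would also include traffic from before the allocation change, so comparing two snapshots taken a few minutes apart would probably be more telling than a single dump.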