Hi community,

In my daily work I have been facing a problem for years now, and I would like to hear whether you have a better solution for it.

I am running several VSX environments with a number of virtual systems.

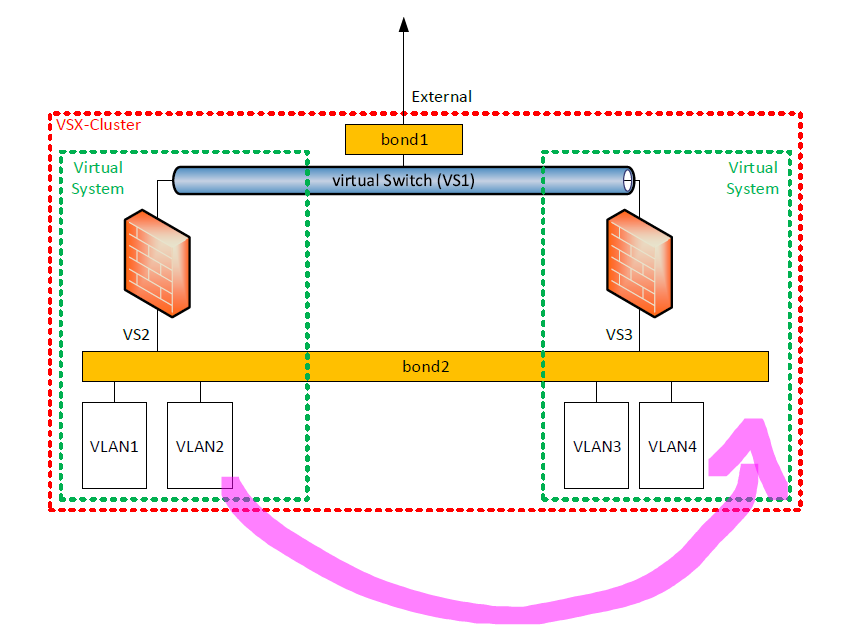

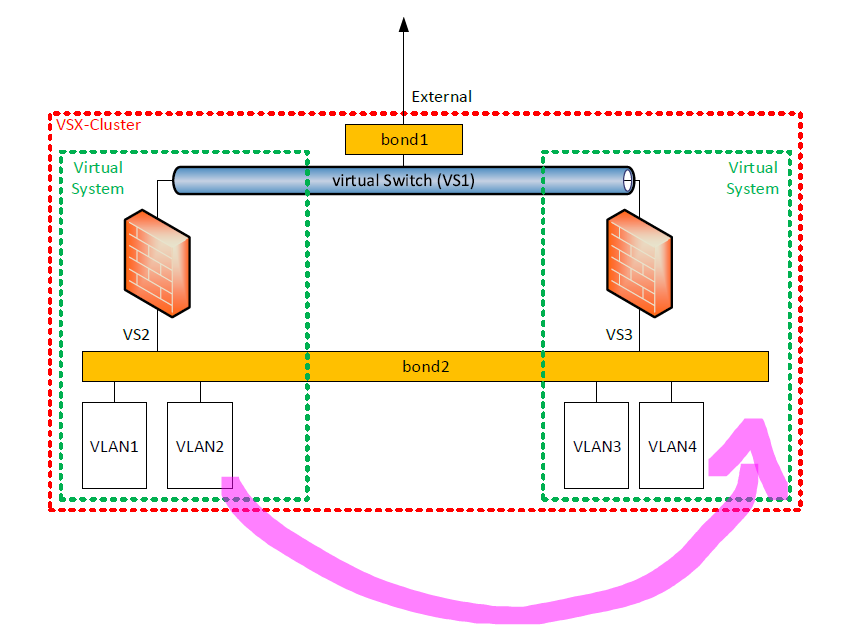

I regularly need to move a VLAN from one virtual system to another within the same VSX cluster.

The VLAN is not connected to a virtual switch, because it would be too expensive to connect every VLAN to a separate virtual switch.

Apart from the external interface, all VLANs sit behind the same bond interface.

Example setup:

[Images: example setup]

When moving a VLAN from one virtual system to another within the same VSX cluster, I run into the following problem:

You are not allowed to add the same VLAN to multiple virtual systems on the same bond interface.

As a consequence, I know of two ways to work around this, but I am not happy with either of them:

1. Delete the VLAN on VS2, install policy on VS2, add the VLAN on VS3, and install policy on VS3.

   Because VS2 and VS3 run in the same SmartCenter/Domain, this means at least 10 minutes of downtime.

2. Add a new physical link to the same switch, configure the VLAN on VS3 with a duplicate IP address on the new physical link, and then move the VLAN on the switch side from the old physical link to the new one.

   In this scenario the downtime is acceptable, but you always need two links to the same switch, and you lose flexibility because you depend on the switch team for support.

   Moreover, in some environments I have no free interfaces on the firewall side, so I cannot add a second link to the same switch.

Any ideas how to overcome this problem?

The best thing would be a clean, smooth solution provided by Check Point itself.

I first asked Check Point about this years ago, but I have not received a solution yet.

Looking forward to reading your ideas.

Cheers

Sven