Troubleshooting performance issues

I'm having issues with a 4200 appliance and its performance. While these issues have been going on for a while, they have become quite disruptive lately.

The setup is a 4200 appliance running R80.10 (R77.30 had these issues as well), with only the Firewall, VPN, Identity Awareness, and Application & URL Filtering blades enabled. The problem is that I have no clue what's causing this, and my troubleshooting skills are not up to par. I hope you can give me a clue.

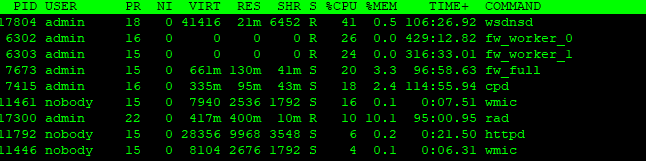

More often than not, I see the gateway's CPU peak at 99%. Sometimes, when I check the top connections with cpview, I see a client downloading a file over HTTPS (inspection not enabled) at 60 Mbit/s over our WAN connection. In my opinion this shouldn't cause a gateway to max out, but I can understand it. Other times, however, I see no visible clue as to why the CPU is this high. I will hardly see any traffic in cpview, but 'top' gives me an output like the one in the screenshot.

This is not always the same. Sometimes you see a fw_worker, cpd or pdpd as the #1 CPU user.

fwaccel stat

    Accelerator Status : on
    Accept Templates   : disabled by Firewall
                         Layer Network disables template offloads from rule #174
                         Throughput acceleration still enabled.
    Drop Templates     : enabled
    NAT Templates      : disabled by Firewall
                         Layer Network disables template offloads from rule #174
                         Throughput acceleration still enabled.
    NMR Templates      : enabled
    NMT Templates      : enabled

    Accelerator Features : Accounting, NAT, Cryptography, Routing,
                           HasClock, Templates, Synchronous, IdleDetection,
                           Sequencing, TcpStateDetect, AutoExpire,
                           DelayedNotif, TcpStateDetectV2, CPLS, McastRouting,
                           WireMode, DropTemplates, NatTemplates,
                           Streaming, MultiFW, AntiSpoofing, Nac,
                           ViolationStats, AsychronicNotif, ERDOS,
                           McastRoutingV2, NMR, NMT, NAT64, GTPAcceleration,
                           SCTPAcceleration
    Cryptography Features : Tunnel, UDPEncapsulation, MD5, SHA1, NULL,
                            3DES, DES, CAST, CAST-40, AES-128, AES-256,
                            ESP, LinkSelection, DynamicVPN, NatTraversal,
                            EncRouting, AES-XCBC, SHA256

fwaccel stats -s

    Accelerated conns/Total conns : 243/2697 (9%)
    Delayed conns/(Accelerated conns + PXL conns) : 70/1516 (4%)
    Accelerated pkts/Total pkts : 170686/2775658 (6%)
    F2Fed pkts/Total pkts : 196461/2775658 (7%)
    PXL pkts/Total pkts : 2408511/2775658 (86%)
    QXL pkts/Total pkts : 0/2775658 (0%)

fwaccel stats -p

    F2F packets:
    --------------
    Violation             Packets   Violation             Packets
    --------------------  -------   --------------------  -------
    pkt is a fragment         470   pkt has IP options          0
    ICMP miss conn           1292   TCP-SYN miss conn       20969
    TCP-other miss conn      3018   UDP miss conn           19495
    other miss conn             0   VPN returned F2F            0
    ICMP conn is F2Fed       9904   TCP conn is F2Fed      121746
    UDP conn is F2Fed       18277   other conn is F2Fed         0
    uni-directional viol        0   possible spoof viol         0
    TCP state viol           2785   out if not def/accl       882
    bridge, src=dst             0   routing decision err     1550
    sanity checks failed        0   temp conn expired           0
    fwd to non-pivot            0   broadcast/multicast         0
    cluster message             0   partial conn             1576
    PXL returned F2F        11879   cluster forward             0
    chain forwarding            0   Tmpl no-match range         5
    Tmpl no-match time          0   general reason              6
    route change                0   inbound zone change         0
    outbound zone change        0

I have cleaned up my rulebase as much as I possibly can right now. Because of the recent upgrade from R77.30 to R80.10 I haven't been able to convert my rulebase to a layered one yet.

How can I find out what's causing these issues?

9 Replies

How many rules do you have on this gateway?

What is rule #174, the one that is disabling template acceleration?

Did you try temporarily turning off IA or URL Filtering to see whether one of them is the root cause?

Kind regards,

Jozko Mrkvicka

From memory, the 4200 has only two CPU cores. Doing simple maths on your screenshot, the processes add up to 165%. That does not include the soft interrupts for SXL, which take some CPU too. I'm just guessing, but your CPUs look maxed out. Check with top (press 1) so you can see the utilisation of both cores in detail when it happens. How much idle time do you see left on each?

As suggested, try turning off the advanced blades (URLF and AC) that push traffic to the medium path (PXL is 86% in your stats).
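The 86% PXL figure quoted above can be recomputed from the fwaccel stats -s counters in the original post. The following is a minimal Python sketch, not a Check Point tool: it assumes the output format exactly as pasted in this thread, and the names SAMPLE, parse_stats and share are made up for illustration. One detail it makes visible is that the Accelerated, F2Fed and PXL packet counters add up to the total packet count, so the three percentages describe a complete split of traffic across the paths.

```python
import re
from typing import Dict, Tuple

# Output of "fwaccel stats -s" as pasted in the original post.
SAMPLE = """\
Accelerated conns/Total conns : 243/2697 (9%)
Delayed conns/(Accelerated conns + PXL conns) : 70/1516 (4%)
Accelerated pkts/Total pkts : 170686/2775658 (6%)
F2Fed pkts/Total pkts : 196461/2775658 (7%)
PXL pkts/Total pkts : 2408511/2775658 (86%)
QXL pkts/Total pkts : 0/2775658 (0%)
"""

# Assumed line layout: "<label> : <numerator>/<denominator> (<percent>%)"
LINE_RE = re.compile(
    r"^\s*(?P<label>.+?)\s*:\s*(?P<num>\d+)/(?P<den>\d+)\s*\(\d+%\)\s*$"
)


def parse_stats(text: str) -> Dict[str, Tuple[int, int]]:
    """Map each label to its (numerator, denominator) pair."""
    counters: Dict[str, Tuple[int, int]] = {}
    for line in text.splitlines():
        match = LINE_RE.match(line)
        if match:
            counters[match["label"]] = (int(match["num"]), int(match["den"]))
    return counters


def share(counters: Dict[str, Tuple[int, int]], label: str) -> float:
    """Return numerator/denominator as a percentage (0.0 if denominator is 0)."""
    num, den = counters[label]
    return 100.0 * num / den if den else 0.0


counters = parse_stats(SAMPLE)
for label in (
    "Accelerated pkts/Total pkts",
    "F2Fed pkts/Total pkts",
    "PXL pkts/Total pkts",
):
    print(f"{label}: {share(counters, label):.2f}%")
```

On these numbers the PXL share comes out just under 87%, which the gateway output shows as 86%. Only about 6% of packets take the fully accelerated path.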

Jozko Mrkvicka, rule #174 is one of the last rules before the default deny. It consists of some RPC services.

Kaspars Zibarts, I'm also guessing the CPU is simply maxed out here. That would explain the high CPU percentages for the "less obvious" processes, I guess?

Turning off AP and IA is not really an option right now, as that would have too much impact on the environment. I could try disabling URLF.

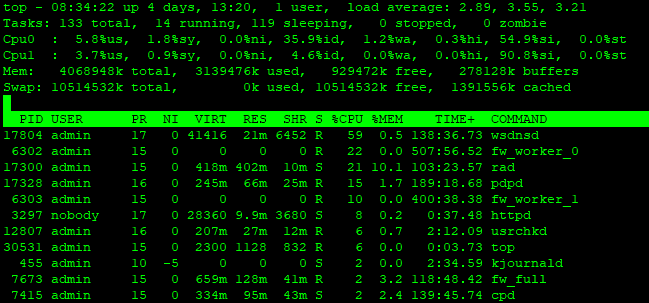

You can see that SXL (traffic arriving on the interfaces) is chewing up a lot of CPU too, so you don't have much idle time left there. My guess is that these gateways struggle to cope with even the slightest traffic peaks because of the CPU time shortage. You need to get bigger boxes.

You also mentioned that you might be running R80.10 on those. I know the 4000 series is supported, but bear in mind that R80.10 is much more CPU and memory hungry compared to R77.30.

Do you have any monitoring tools in place to see CPU history per core? If not, just check manually (if possible) when it becomes unstable or unresponsive and see how much idle time is left.

There are a couple of OIDs available for checking CPU via SNMP (per core and overall).

Once you have the OID, you can create a script that runs snmpwalk against it every XY seconds or minutes and saves the output to a file.
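The polling script described above could look roughly like the following Python sketch. It assumes Net-SNMP's snmpwalk is installed on the monitoring host and that SNMP v2c is enabled on the gateway. The function names, the CSV layout and the file path are illustrative choices, and the OID is deliberately left as a parameter to be taken from the SK articles linked in this reply.

```python
import subprocess
import time
from datetime import datetime, timezone
from typing import Callable, List


def snmpwalk_values(host: str, community: str, oid: str) -> List[str]:
    """Run Net-SNMP's snmpwalk and return one value per returned row."""
    # -Oqv prints only the values, without the OID names or type prefixes.
    cmd = ["snmpwalk", "-v", "2c", "-c", community, "-Oqv", host, oid]
    result = subprocess.run(
        cmd, capture_output=True, text=True, check=True, timeout=10
    )
    return [line.strip() for line in result.stdout.splitlines() if line.strip()]


def format_sample(timestamp: datetime, values: List[str]) -> str:
    """Build one CSV line: ISO timestamp followed by the polled values."""
    return ",".join([timestamp.isoformat(timespec="seconds")] + values)


def poll_to_file(
    fetch: Callable[[], List[str]],
    path: str,
    interval_s: float,
    samples: int,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Append `samples` readings to `path`, pausing `interval_s` between them."""
    written = 0
    with open(path, "a", encoding="utf-8") as out:
        for i in range(samples):
            try:
                values = fetch()
            except (subprocess.SubprocessError, OSError):
                # Keep the timeline intact even if one poll fails.
                values = ["error"]
            out.write(format_sample(datetime.now(timezone.utc), values) + "\n")
            out.flush()  # make each sample visible immediately
            written += 1
            if i < samples - 1:
                sleep(interval_s)
    return written
```

A call such as poll_to_file(lambda: snmpwalk_values("192.0.2.1", "public", oid), "cpu.csv", interval_s=5, samples=720) would log one line every 5 seconds for roughly an hour. The host, community string and oid here are placeholders. A short interval matters because the problem in this thread is brief spikes.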

Some useful links:

- How to query utilization of individual CPU cores via SNMP

- How to configure SNMP on Gaia OS

Kind regards,

Jozko Mrkvicka

Thanks for the help, guys. I'm going to look into monitoring the individual cores. We have monitoring in place for general CPU usage, but upon further inspection that doesn't prove to be very useful. Also, the main issue is spikes. Generally speaking, the performance is "okay" (as in, the CPU could be at 90% but users are not yet affected) until something happens, like a download or a sudden increase in connections. Then the whole thing comes crashing down in flames. The monitoring hardly ever picked up on these spikes. I'll look into the OIDs.

For now, I have switched back to R77.30. This helps, although we're still very close to the limits of the gateway.

Back to the drawing board, and wait until someone spends the money on "bigger boxes".
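One plausible reason general CPU monitoring misses short spikes, assumed here rather than confirmed in the thread, is that it reports averages over long polling intervals. The Python sketch below compares a per-minute average with a per-minute peak check. The readings are synthetic, invented purely for illustration, and the function names are hypothetical.

```python
from statistics import mean
from typing import List, Sequence


def window_averages(samples: Sequence[float], window: int) -> List[float]:
    """Average consecutive, non-overlapping windows of `window` samples."""
    return [mean(samples[i:i + window]) for i in range(0, len(samples), window)]


def windows_with_spike(
    samples: Sequence[float], window: int, threshold: float
) -> List[int]:
    """Return the indexes of windows whose peak reaches `threshold`."""
    return [
        i // window
        for i in range(0, len(samples), window)
        if max(samples[i:i + window]) >= threshold
    ]


# Synthetic per-second CPU readings in percent, invented for illustration:
# a 5-second burst at 99% inside two otherwise quiet minutes at 40%.
SAMPLES = [40] * 55 + [99] * 5 + [40] * 60

averages = window_averages(SAMPLES, 60)
spiky = windows_with_spike(SAMPLES, 60, 95)
print("per-minute averages:", [round(a, 1) for a in averages])
print("minutes containing a spike:", spiky)
```

With this made-up series, the first one-minute average is about 45% even though the CPU was saturated for five seconds, while the peak check flags that minute. Recording the per-interval maximum, or polling more often, is what would make such spikes visible.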

Yep, been through this cycle a few times. It's not the strongest point for CP: hardware usage increases with every release. But there are always two sides to the coin, and you get a lot of new features.

If you know the exact time of the spike, you can use SmartLog to find out what was running high at that moment. SmartLog has some nice statistics available.

Another way is the cpview history (if you have it enabled), but I don't know how to check past stats with it. Maybe using SQL?

Kind regards,

Jozko Mrkvicka

You can simply use the command cpview -t to go to the oldest moment in the cpview history and step through time, minute by minute, with the + and - keys on your keyboard. To be more specific, you can also use a syntax such as cpview -t 03 Sept 2019 09:05 to jump to an exact time, and then still scroll through the timeline with the + and - keys.
