Hi

I've been noticing some strange CPU usage on our gateway since upgrading to R80.30 (3.10 kernel, currently on JHF T50). The hardware was changed at the same time (HP DL360 Gen10). We have an 8-core license.

The first thing I noticed was the odd CPU allocation for CoreXL and SecureXL. We have the gateway configured with 6 cores for CoreXL and 2 for SecureXL. In the previous version (R80.10), CPUs 2-7 were assigned to CoreXL and CPUs 0 and 1 to SecureXL. Now the distribution is something I'm not used to.

# fw ctl affinity -l -r

CPU 0: eth8

CPU 1:

CPU 2:

CPU 3: fw_5

mpdaemon fwd wsdnsd usrchkd in.asessiond in.acapd vpnd pepd lpd rad pdpd topod cprid cpd

CPU 4: fw_3

mpdaemon fwd wsdnsd usrchkd in.asessiond in.acapd vpnd pepd lpd rad pdpd topod cprid cpd

CPU 5: fw_1

mpdaemon fwd wsdnsd usrchkd in.asessiond in.acapd vpnd pepd lpd rad pdpd topod cprid cpd

CPU 6:

CPU 7: eth9 eth4

CPU 8:

CPU 9: fw_4

CPU 10: fw_2

CPU 11: fw_0

All:

The current license permits the use of CPUs 0, 1, 2, 3, 4, 5, 6, 7 only.
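To make the layout in the output above easier to read, here is a minimal sketch that parses it and lists which CPUs host firewall instances, which CPUs the daemons are bound to, and which instance CPUs fall outside the licensed set. It assumes the text format stays exactly as pasted; the `SAMPLE` string is an abridged copy with the daemon lists shortened, and the function names are my own, not part of any Check Point tool.

```python
import re
from collections import defaultdict

# Abridged copy of the pasted output (daemon lists shortened to three names).
SAMPLE = """\
CPU 0: eth8
CPU 1:
CPU 2:
CPU 3: fw_5
mpdaemon fwd cpd
CPU 4: fw_3
mpdaemon fwd cpd
CPU 5: fw_1
mpdaemon fwd cpd
CPU 6:
CPU 7: eth9 eth4
CPU 8:
CPU 9: fw_4
CPU 10: fw_2
CPU 11: fw_0
All:
The current license permits the use of CPUs 0, 1, 2, 3, 4, 5, 6, 7 only.
"""


def parse_affinity(text):
    """Return ({cpu: [items]}, licensed_cpu_set) from the pasted output format."""
    per_cpu = defaultdict(list)
    licensed = set()
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue
        match = re.match(r"CPU (\d+):(.*)", line)
        if match:
            current = int(match.group(1))
            per_cpu[current].extend(match.group(2).split())
        elif line.startswith("All:"):
            current = None
        elif line.startswith("The current license"):
            licensed = {int(n) for n in re.findall(r"\d+", line)}
        elif current is not None:
            # Continuation line: daemons bound to the CPU listed just above.
            per_cpu[current].extend(line.split())
    return dict(per_cpu), licensed


def summarize(per_cpu, licensed):
    """Return (CPUs with fw instances, CPUs with daemons, fw CPUs not licensed)."""
    fw_cpus = sorted(
        cpu for cpu, items in per_cpu.items()
        if any(item.startswith("fw_") for item in items)
    )
    daemon_cpus = sorted(
        cpu for cpu, items in per_cpu.items()
        if any(not item.startswith(("fw_", "eth")) for item in items)
    )
    outside = [cpu for cpu in fw_cpus if cpu not in licensed]
    return fw_cpus, daemon_cpus, outside


if __name__ == "__main__":
    fw_cpus, daemon_cpus, outside = summarize(*parse_affinity(SAMPLE))
    print("fw instance CPUs:", fw_cpus)
    print("daemon CPUs:", daemon_cpus)
    print("fw instance CPUs outside the licensed set:", outside)
```

Run against the sample, this shows the daemons bound only to the same CPUs as fw_1, fw_3 and fw_5, and three firewall instances sitting on CPU numbers that the license line does not list.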

What has changed in R80.30 that makes CoreXL use cores 3, 4, 5, 9, 10 and 11? Is it trying to distribute load evenly between the physical CPUs? Why was SecureXL affinity set to cores 0 and 7 by default (why not 0 and 6)? I configured manual affinity using the default values.

Also, CoreXL instances don't seem to balance load as nicely as in R80.10.

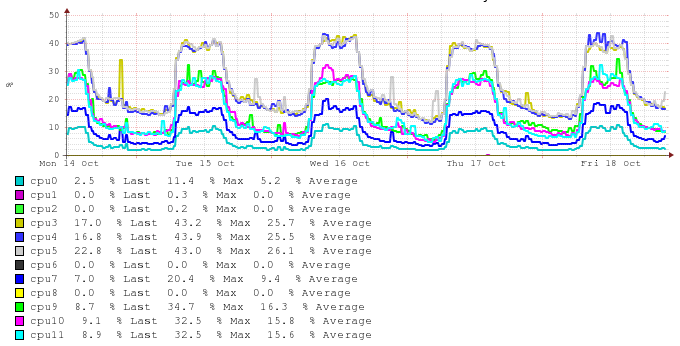

This was the CPU usage in R80.10:

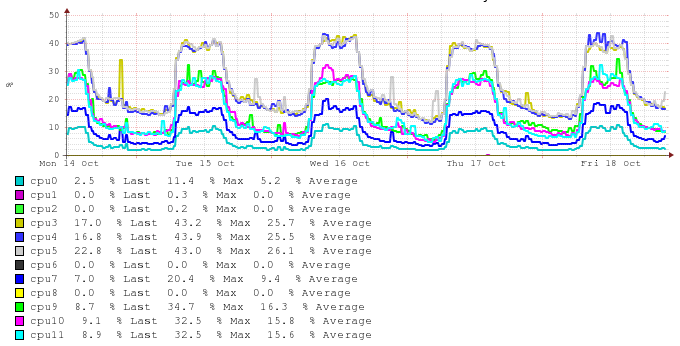

And this is R80.30:

As you can see, cores 3-5 are utilized much more than cores 9-11. I presume this is because the default affinity for processes is set only to cores 3-5. Why is that? Is it a feature or a bug?
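One way to check that presumption independently of `fw ctl affinity` is to read each daemon's allowed-CPU list straight from `/proc`. The sketch below assumes a standard Linux `/proc` layout and that a Python 3 interpreter is available on the host, which I have not verified on Gaia; the `DAEMONS` set is just a subset of the names from the output above, and the function names are hypothetical.

```python
import os

# Subset of the daemon names shown in the affinity output; extend as needed.
DAEMONS = {"fwd", "cpd", "vpnd", "pdpd"}


def parse_cpu_list(spec):
    """Expand a kernel CPU list such as '3-5,9' into [3, 4, 5, 9]."""
    cpus = []
    for part in spec.strip().split(","):
        if not part:
            continue
        if "-" in part:
            low, high = part.split("-")
            cpus.extend(range(int(low), int(high) + 1))
        else:
            cpus.append(int(part))
    return cpus


def daemon_affinity(names, proc="/proc"):
    """Return {(name, pid): [allowed CPUs]} for running processes in names."""
    result = {}
    if not os.path.isdir(proc):
        return result
    for entry in os.listdir(proc):
        if not entry.isdigit():
            continue
        try:
            with open(os.path.join(proc, entry, "comm")) as handle:
                name = handle.read().strip()
            if name not in names:
                continue
            with open(os.path.join(proc, entry, "status")) as handle:
                for line in handle:
                    if line.startswith("Cpus_allowed_list:"):
                        value = line.split(":", 1)[1]
                        result[(name, int(entry))] = parse_cpu_list(value)
        except OSError:
            # The process exited or is not readable; skip it.
            continue
    return result


if __name__ == "__main__":
    for (name, pid), cpus in sorted(daemon_affinity(DAEMONS).items()):
        print(name, pid, cpus)
```

If the daemons report only 3-5 here as well, the restriction is in the process affinity itself and not just in how the affinity listing is displayed.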

Is there a simple way to spread process affinity across all CoreXL cores without manually setting the affinity in fwaffinity.conf for every possible process?

Best regards