
Adventures in Clustering

“It’s a dangerous business, Frodo, setting up a lab. You step onto that cyber road, and if you don’t keep your feet, there’s no knowing where you might be swept.” - Gandalf

Hello friends. Today we are stepping out into the unknown, on a wild adventure in the borderlands of what is possible with ClusterXL.

I have set up a lot of clusters in my time, from file servers to load balancers to firewalls, a wide variety of deployments from all sorts of vendors. However, in most of these cases, the nature of my job role and the limitations of budgets meant that I usually had to focus on the basics: standardized configs and quick deployments. There wasn't time to experiment or test out what might be possible; we had to get it up and running as fast and as simply as possible.

One of the blessings in my current role is that now I do have the time and support to go farther, try new things, and really understand the art of what is possible. So when I got a chance recently to play around in an advanced lab with many networks, multiple gateways, and over a dozen VMs, I realized this was the time to push some boundaries and see what kind of an adventure we could have.

Then it came to me: a while back I came across a reference saying that it is possible to convert a failover cluster to a Load Sharing cluster without having to rebuild anything… Now, if you came up in IT as a Check Point guy, this might seem trivial to you. Maybe the true Check Point wizards have known all along, but to someone who learned clustering on other platforms, this sounds crazy! Change the type and function of a cluster without having to tear the whole thing down and rebuild it from scratch? What sort of magic is this?

I did a little digging through the scrolls and SK articles, but there isn't much documentation to be found about how to harness this power (probably due in part to some of the limitations of Load Sharing mode). Still, knowing it was possible sparked my curiosity, and now I found myself with the perfect setup to test it out.

Some foundational concepts

Before we hit the road it is important to pack our bags and make sure we are prepared. Here are some key items it will be helpful to be familiar with:

A review of Check Point Cluster Types.

High Availability mode (HA or A/S)

The traditional approach most often seen in firewall clusters. In this type of cluster only one node is active at any given time. The second node sits in a passive or standby state and only starts handling traffic in the event of a failover.

Load Sharing mode (LS)

This is a proprietary approach provided by Check Point. This setup allows the pair of gateways to share the traffic load, balanced across the gateways so that both nodes are active. The cool thing here is that it will still use a single shared virtual IP (VIP). This simplifies the network side of things considerably. This mode has two variations that behave slightly differently.

LS - Multicast mode

As you may have guessed, this mode uses multicast addressing. Each member receives every packet and then runs an algorithm to determine which node will handle it. This mode will give you a roughly 50/50 traffic split.

LS - Unicast mode

Can't do multicast in your environment? No problem. In this mode the primary gateway will function as a "pivot", receiving traffic requests as they come in and handing off some of those connections to the secondary node. This will result in a roughly 70/30 traffic split.
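To make the difference between the two Load Sharing variations a bit more concrete, here is a rough Python sketch. It is only a toy model, not how ClusterXL is actually implemented: the member names, the SHA-256 based bucketing, and the `pivot_share` value of 30 are all assumptions made for illustration (the split above is only described as roughly 70/30, without saying which member gets which share).

```python
import hashlib
from collections import Counter

# Hypothetical member names; gw01 plays the pivot in the unicast model.
MEMBERS = ["gw01", "gw02"]


def bucket(conn: tuple, buckets: int = 100) -> int:
    """Map a connection tuple to a stable bucket in [0, buckets)."""
    digest = hashlib.sha256(repr(conn).encode()).digest()
    return int.from_bytes(digest[:4], "big") % buckets


def multicast_owner(conn: tuple) -> str:
    """Multicast model: every member sees the packet and computes the
    same deterministic answer locally, so no hand-off is needed."""
    return MEMBERS[bucket(conn) % len(MEMBERS)]


def unicast_owner(conn: tuple, pivot_share: int = 30) -> str:
    """Unicast model: only the pivot sees the packet. It keeps the
    connections that fall in its share and forwards the rest.
    pivot_share is an illustrative assumption, not a documented value."""
    return MEMBERS[0] if bucket(conn) < pivot_share else MEMBERS[1]


def simulate(owner_fn, n: int = 10_000) -> Counter:
    """Count how many of n distinct connections each member would own."""
    conns = [
        ("10.0.0.%d" % (i % 250), 1024 + i, "203.0.113.10", 443)
        for i in range(n)
    ]
    return Counter(owner_fn(c) for c in conns)


if __name__ == "__main__":
    print("multicast:", dict(simulate(multicast_owner)))
    print("unicast:  ", dict(simulate(unicast_owner)))
```

The point of the sketch is the shape of the decision: in the multicast model both members reach the same answer independently, while in the unicast model one member makes the decision for everybody, which is why the split is uneven.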

Active/Active Mode (A/A)

This is similar to traditional A/A clusters from other vendors. Both gateways are active and synchronize connections to provide availability in the event of a failure but there is no load sharing or balancing. Typically they will have unique IPs and rely on dynamic routing updates to achieve failover. Not part of today's scenario, so not going to elaborate on this mode.

Link to good resource if you are interested in basics of setting up an HA cluster:

https://sc1.checkpoint.com/documents/R81/WebAdminGuides/EN/CP_R81_ClusterXL_AdminGuide/

Another good resource, this one on how to convert an existing gateway to an HA cluster:

Keep in Mind: Different types of clusters will have different advantages or limitations. One limitation of note is you cannot land IPSec VPNs on an LS cluster, so keep that in mind if you are considering this for a production environment.

Link to SK on Load Sharing Limitations:

https://support.checkpoint.com/results/sk/sk101539

Let the Adventure Begin

Here is a quick drawing of the section of the lab I carved out for this test:

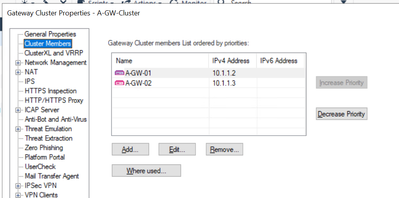

Everything is running R81.20

Gateways 01 and 02 are already set up as an A/S Failover cluster (HA mode), using ClusterXL and VMAC.

Our basic configuration details:

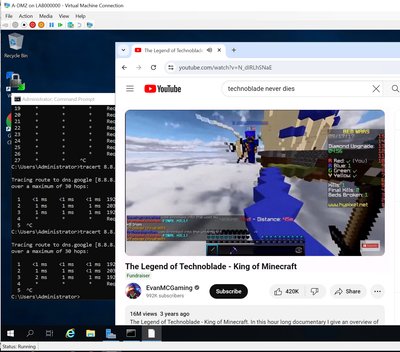

So with the lab up and running, it is time to generate some traffic so we can see which gateways are actively handling traffic. Spinning up a browser in both my server VMs I started some classic YouTube streams.

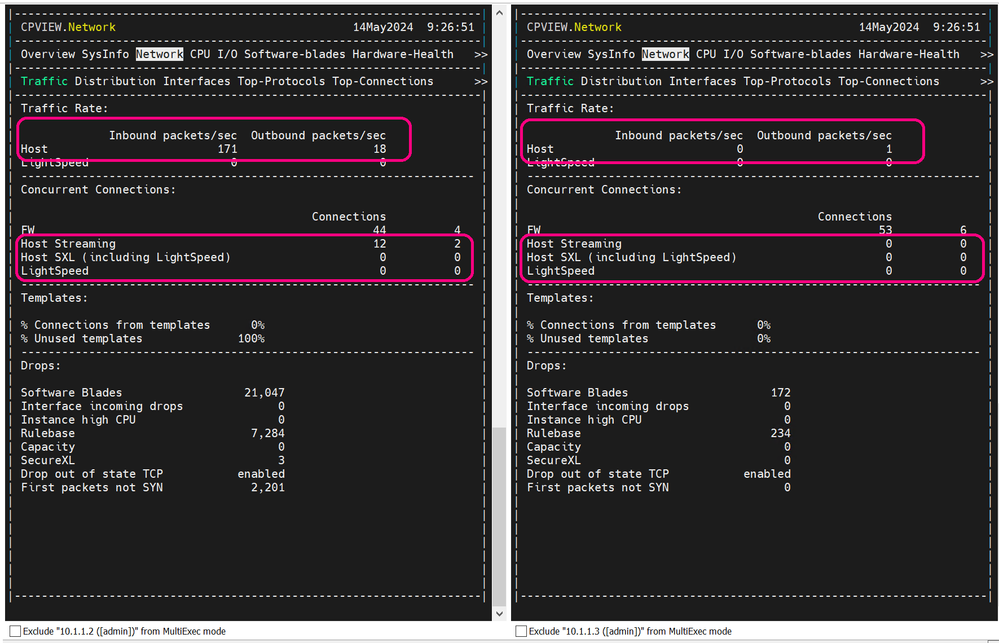

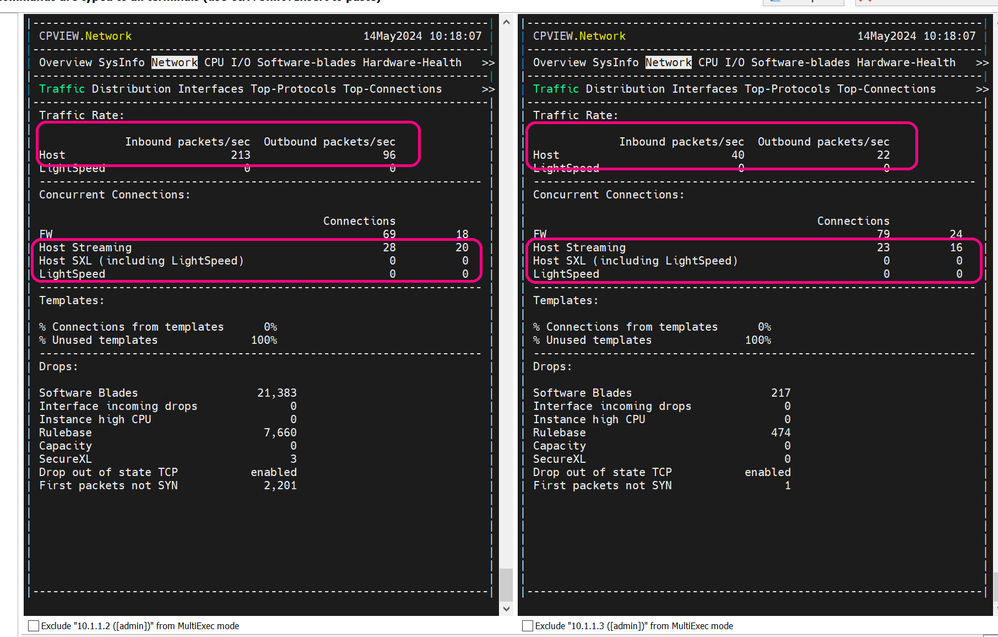

Using MobaXterm I connected out via SSH to my gateways and launched cpview to take a look at how the traffic is distributed.

(By the way, if you are not familiar with it, play around with MultiExec mode, it is super handy.)

As you can see Gateway01 is handling virtually all the traffic, which is what we expect in HA mode.

Stepping into the unknown

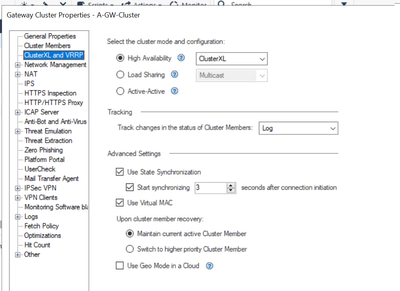

Now as I said, the cool thing about LS is that it still uses the same VIP as the current HA setup. So if I currently have an HA cluster working and I can fail over to the secondary node, then everything should "just work" from a network perspective when I switch modes. I tested this in my lab without issue and, just in case you are wondering, did this whole exercise without ever connecting to the virtual switches/routers or making any network changes.

That being said, keep in mind that ARP tables on networks are mysterious enigmas that store values in quasi-quantum states next to Schrodinger's cat, so while there was virtually no traffic disruption in my tests, your mileage may vary.

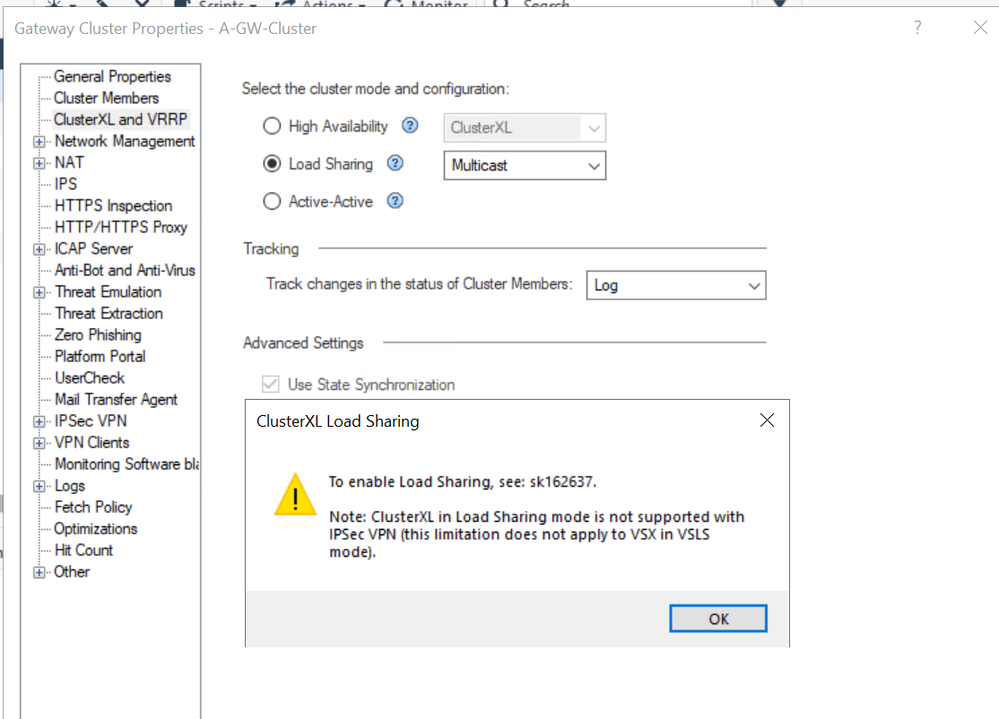

So with my lab prepped and my backups taken, I hopped into SmartConsole to try flipping the switch on the cluster definition to see what happens.

Error Note: ClusterXL in Load Sharing mode is not supported with IPSEC VPN

Drats. I was immediately denied. Now, don't be deceived by this pop-up warning. (I had to read it twice.) It can be confusing because it mentions IPSec VPN and then, when you click OK, you are switched back to HA mode. This is not failing because an IPSec VPN is present. (My lab setup doesn't have any.) This is just the primary limitation the system wants to make you aware of. It is programmed by default with a speed bump of sorts here in the GUI.

So while it is not a configuration issue, what we do have is something strange. The SK article gives you all the details on how to fix it but not why this is in place. Basically, for some reason we have an extra protection built into both SmartConsole and the Management Server itself. This means that by default, with no customization, you cannot turn on LS mode! (This is true even if you build it from scratch.)

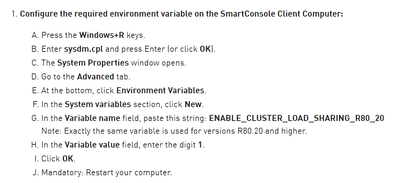

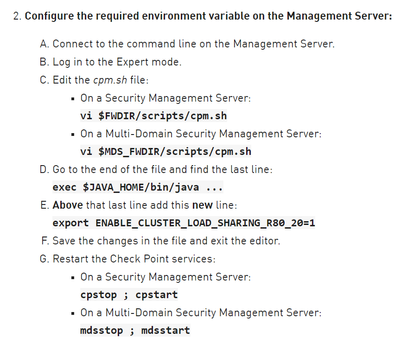

The way around this is fairly simple, if annoying. You have to create a variable on the computer running SmartConsole and update a script value on the management server. Nothing major, and no permanent damage done if you make these changes, just a hoop we have to jump through as a prerequisite. Consider it a test of character to keep out those faint-hearted adventurers who lack the courage to launch vi.

Link to the details:

https://support.checkpoint.com/results/sk/sk162637

Key points:

AND

Yes, I know what you are thinking: "Shouldn't I change that value to match my version, say R81_20 in this case?" The answer is no. It is forever locked in time at the version level it was created for, as a memorial to forgotten code snippets that will never be updated.

The entire experience here, from the GUI pop-up to the fix just leaves you shaking your head. I don't have access to source but if I did I imagine I would find a comment in this section similar to: "Remember to fix this later"

Anyway, after a short detour I finished prepping my system according to the SK article and went back to try again.

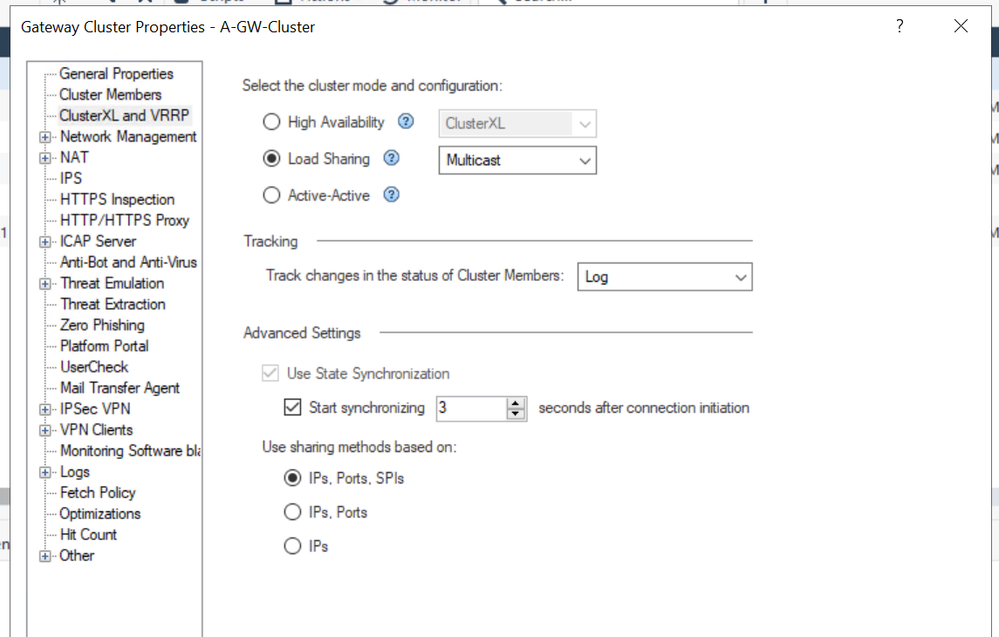

Boom, now we are talking!

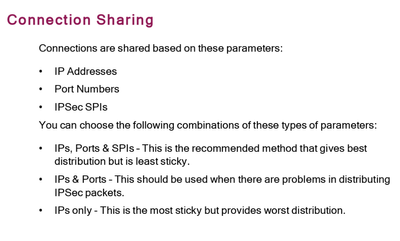

Note: For my test I went with Multicast mode for the 50/50 traffic distribution. I also selected the IP/Port/SPI connection sharing method. This controls which components of each connection request will be used to calculate which gateway will own it. The rule of thumb here is that the more components considered, the better the traffic distribution, but the lower the overall stickiness of connections. So if stickiness is more important to you than evenly balancing the load, you might pick IP only.
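The stickiness versus distribution trade-off can be illustrated with a small Python sketch. Again, this is a toy model under stated assumptions and not the real ClusterXL decision function: the `owner` helper, the SHA-256 hashing, and the sample addresses are hypothetical, and SPI is left out because the sample traffic is plain TCP.

```python
import hashlib
from collections import Counter


def owner(fields: tuple, members: int = 2) -> int:
    """Pick a member index from whatever fields the sharing method uses."""
    digest = hashlib.sha256(repr(fields).encode()).digest()
    return int.from_bytes(digest[:4], "big") % members


def by_ip(conn: tuple) -> int:
    """'IP only' style: ports are ignored, so a host pair always
    lands on the same member (maximum stickiness)."""
    src_ip, _src_port, dst_ip, _dst_port = conn
    return owner((src_ip, dst_ip))


def by_ip_port(conn: tuple) -> int:
    """'IP and port' style: every connection is decided on its own,
    so a single busy client gets spread across members."""
    return owner(conn)


# One client opening many connections to one server (sample values).
conns = [("10.1.1.10", 40000 + i, "203.0.113.10", 443) for i in range(1000)]

ip_only = Counter(by_ip(c) for c in conns)
ip_port = Counter(by_ip_port(c) for c in conns)

if __name__ == "__main__":
    print("IP only:    ", dict(ip_only))
    print("IP and port:", dict(ip_port))
```

With only IPs in the calculation, all of this client's connections stay on one member. Adding ports spreads the same client across both members, at the cost of related connections no longer being guaranteed to share a gateway.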

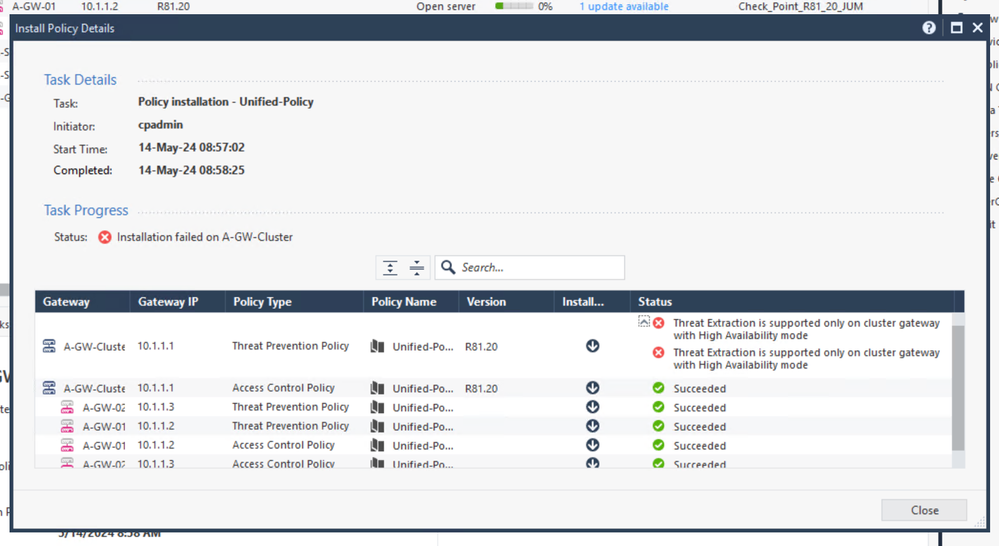

Right, well that was easy. Time to push policy…

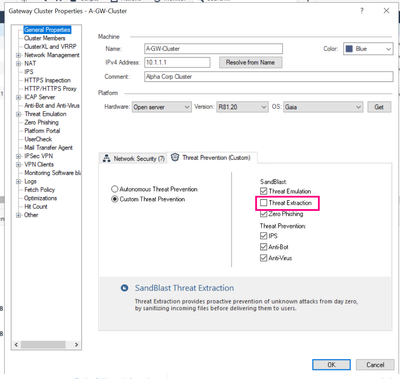

Oops, looks like I stumbled over another limitation of LS mode. Simple enough to resolve in a test environment. Popped back into the cluster object and switched from Autonomous Threat Prevention to Custom so I could turn off Threat Extraction.

Note: Threat Extraction is an awesome protection feature and I don't recommend running without it in production environments. More info here:

https://support.checkpoint.com/results/sk/sk114807

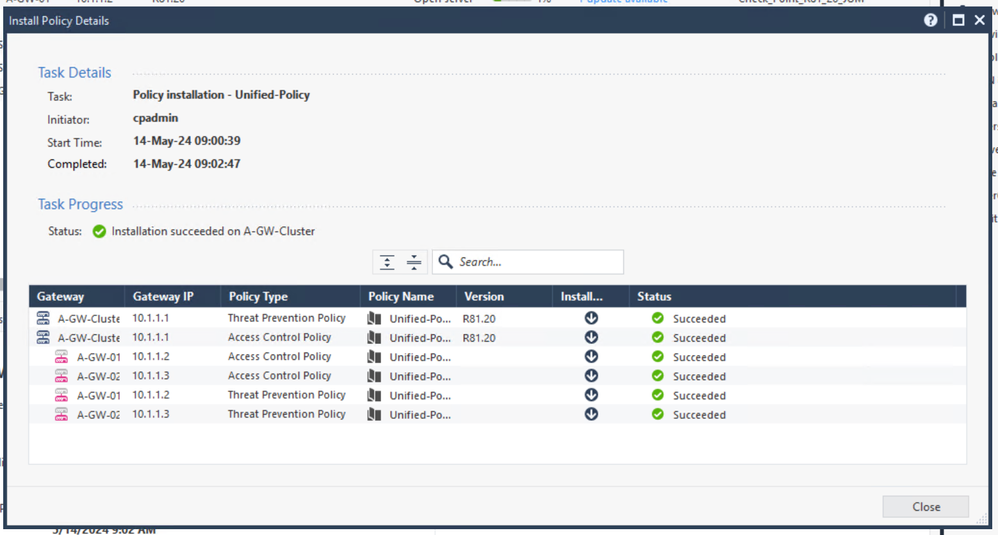

Then we try again:

That's better.

Went back to my test VMs, made sure internet access still worked, and everything was good. Videos were still running as if nothing happened. To be safe, I relaunched my browsers and started up the streams again. Then I reconnected to my gateways to see how my traffic was being handled.

Very nice! Now we have traffic seamlessly balanced across both gateways.

I even went back in and switched it back and forth between LS and HA a couple of times; it is really just a matter of pushing policy. The magic is real! Lol. I will say that eventually my border router got mad (probably an ARP issue) and did start dropping traffic until I rebooted it, but overall I am really impressed with how easy it is to switch between these modes.

By the way if you think this is cool, you are going to love the new ElasticXL coming in R82!

https://community.checkpoint.com/t5/General-Topics/R82-ElasticXL/td-p/192459

Thanks for tagging along, hope you had fun. Safe travels out there, my fellow cyber adventurers.

Labels: Appliance, ClusterXL, CPView, Open Server

5 Replies


Wow...FANTASTIC!!

Best,

Andy



The reason we block Load Sharing in SmartConsole is that in some R80.x versions, Load Sharing with IPSec VPN isn't supported.

A bunch of changes were needed to incorporate the Maestro code into maintrain that broke Load Sharing with VPN starting in R80.20.

This is fixed in currently supported versions.

However, the "bump in the road" is still present.

Thanks for sharing this!


I always found HA was a way better option, even in older versions.

Best,

Andy



In older versions, ClusterXL Load Sharing also had a lot more limitations to it.

The Cluster Correction Layer (developed for Scalable Platforms/Maestro) solved a lot of those issues: https://support.checkpoint.com/results/sk/sk169154

Implementing CCL "broke" ClusterXL Load Sharing with VPN in R80.x versions.

This has been fixed in R81.10 (or R81 JHF 34).


Thank you, Great article!

Haven't seen LS much in the wild.

Looking forward to ElasticXL getting some more adoption.
