Your firewall is on fire

So there you sit in your comfy chair drinking your morning coffee, the sun is shining, and then suddenly: boom.

You put away your coffee and start investigating. What on earth is happening? Why are your CPU cores suddenly spiking so high? Are you under attack? Is one user or many users causing this? Where do you start investigating? What commands, tools or views do you use? Can we have a discussion where people share what they do when a situation like this suddenly happens? Something like your top 3 CLI commands, or your top 3 investigation steps.

You thought your firewall was tuned, didn't you?

- Tags:

- firwallonfire

13 Replies


Yeah, this can happen easily. I expect you'll see many suggestions here for what to check.

My personal checks are:

- top - to check whether some stuck process is consuming the CPUs. From time to time even a CLISH instance can freeze and start eating CPU.

- fwaccel stat - is acceleration fine? Have you got drop templates enabled? In my experience SecureXL can turn itself off because of an internal error counter. We have already hit this twice in production (always with big impact), and a general fix does not exist yet.

- fw ctl pstat - see counters, watermarks and connection limits

- fw tab -t connections -s - again check the number of connections and see whether it has reached the limit, for example

- fwaccel conns | awk '{printf "%-16s %-16s %-10s\n", $1,$3,$4}' | sort | uniq -c | sort -n -r | head -n 50 - to see the top 50 connections when acceleration is running

- fw tab -u -t connections |awk '{ print $2 }'|sort -n |uniq -c|sort -nr|head -50 - to see the top 50 connections according to the connections table

- check /var/log/messages and the core dump folder - just to be sure

- check the interface counters and the utilization of the related switch interfaces

- try cpview, SmartView Monitor or another monitoring tool - to see whether it could be connected to interface utilization
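The counting idea behind the two pipelines above (pick a few columns, count identical rows, show the biggest groups first) can be sketched in Python for output that has been saved to a file and copied off the gateway. This is only a sketch: the function name top_talkers and the sample rows are made up for illustration, and the column positions (1, 3, 4) are simply copied from the awk command above rather than checked against any particular fwaccel conns output format.

```python
from collections import Counter


def top_talkers(lines, columns=(1, 3, 4), limit=50):
    """Count identical column combinations, like `sort | uniq -c | sort -nr | head`.

    `columns` are 1-based field numbers, as in awk's $1, $3, $4.
    """
    counts = Counter()
    for line in lines:
        fields = line.split()
        # Unlike awk, skip lines too short to carry all requested columns
        # (blank lines, separators and similar).
        if len(fields) < max(columns):
            continue
        key = tuple(fields[c - 1] for c in columns)
        counts[key] += 1
    # most_common() returns the largest counts first.
    return counts.most_common(limit)


# Hypothetical whitespace-separated rows, NOT real fwaccel output.
sample = [
    "10.0.0.5 40001 192.0.2.10 443 6",
    "10.0.0.5 40002 192.0.2.10 443 6",
    "10.0.0.7 40003 192.0.2.20 80 6",
    "10.0.0.5 40004 192.0.2.10 443 6",
]

for key, count in top_talkers(sample, limit=2):
    print(count, *key)
```

On a real capture you would pass the lines of the saved file instead of sample, and adjust columns if the layout differs.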

Of course there could be much more, but that depends on the first findings. I hope others will share more interesting commands and hints here.


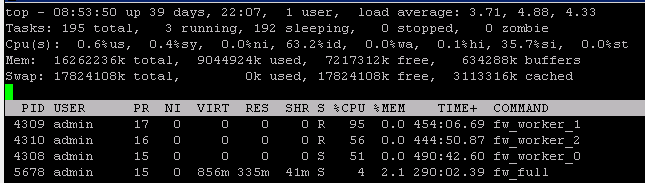

Petr Hantak had some excellent suggestions. To dig in a little deeper you need to determine which specific type of CPU execution is tying up the CPU; this will give you some important clues about where to focus your efforts. The best tool for this is running top in real time while the event is occurring. sar can also be used in historical mode, but it rolls up the sy/si/hi/st values shown in top into a single figure (%system), which can obscure where the issue is occurring. top can be run in batch mode to catch intermittent spikes, which is covered in my book.

So if you run top, look at the us/sy/ni/id/wa/hi/si/st values, which are listed below along with hints about how to proceed if that particular value is the high one:

us - Consumption by processes, should be fairly low on a gateway unless there are features enabled such as HTTPS Inspection which cause "process space trips" on the firewall; this effect and what you can do about it is extensively covered in the second edition of my book. fwd or its buddies can definitely be a culprit here if the gateway logging rate is extremely high as well. Note that fw_worker_X CPU execution is NOT counted here, even though they look like processes, see sy below.

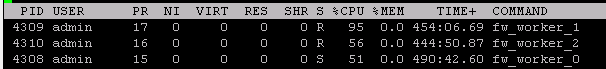

sy - CPU consumption processing traffic in the Firewall (F2F) and Medium (PXL) paths; fw_worker_X CPU usage is usually counted here. The fw_worker_X "processes" shown in top are simply representations of the firewall workers down in the kernel and not really processes in the traditional sense. In some cases CPU usage by fw_worker_X "processes" will appear under si, see below.

ni - Execution by processes that have had their process CPU priority lowered (nice'd), irrelevant on a gateway but important on an SMS.

id - Idle time, hopefully self explanatory.

wa - Percentage of time a CPU was blocked (unable to do anything) waiting for an I/O event to occur (usually hard drive access). Anything higher than 5% here (unless policy is currently being installed) is probably a low free memory situation on a gateway, use free -m to investigate further. Any nonzero swap usage may indicate the need for more RAM or the presence of a runaway process consuming excessive amounts of memory.

hi - Percentage of CPU time processing hardware interrupts; on a gateway this is almost all the transfer of packets from the NIC hardware buffers into RAM (the ring buffer). An excessive value here could indicate extremely high packet rates traversing the firewall or possibly a NIC hardware/driver issue.

si - Soft Interrupts, SoftIRQ processing (i.e. emptying the ring buffer and sending the packets up for inspection) AND the handling of fully-accelerated traffic in the Accelerated path (SXL). If this value is high and your cores allocated to SND/IRQ functions are getting slammed, you may need to reduce the number of Firewall Worker cores so that more SND/IRQ cores can be allocated.

st - Steal - Percentage of CPU cycles requested but denied by the Hypervisor. On a bare-metal firewall (i.e. non VSec/VE) this should always be zero.
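As a rough illustration of the list above, the following Python sketch takes one CPU summary line from top (for example captured with top -b -n 1) and reports which non-idle field is highest, together with a one-line hint. The hints are paraphrased from the list, the 5% wa threshold is the one mentioned there, and the function names and the sample line are invented for this example. It is a triage aid under those assumptions, not a diagnosis.

```python
import re

# Short hints paraphrased from the list above; "id" is deliberately absent
# because idle time is not a consumer.
HINTS = {
    "us": "process space: check features such as HTTPS Inspection, or fwd logging rate",
    "sy": "Firewall (F2F) / Medium (PXL) path traffic handled by fw_worker_X",
    "ni": "niced processes: mostly relevant on an SMS",
    "wa": "I/O wait: check free memory and swap with free -m",
    "hi": "hardware interrupts: very high packet rate or NIC/driver issue",
    "si": "SoftIRQ / accelerated path: SND/IRQ cores may be overloaded",
    "st": "steal: hypervisor is denying CPU cycles",
}

# Matches both "12.5%us" (older top) and "12.5 us" (newer top).
FIELD = re.compile(r"(\d+(?:\.\d+)?)\s*%?\s*(us|sy|ni|id|wa|hi|si|st)\b")


def parse_cpu_line(line):
    """Return a dict such as {"us": 12.5, "sy": 40.0, ...} from one CPU line."""
    return {name: float(value) for value, name in FIELD.findall(line)}


def busiest(values):
    """Return (field, percent, hint) for the largest non-idle field."""
    name = max(HINTS, key=lambda field: values.get(field, 0.0))
    return name, values.get(name, 0.0), HINTS[name]


# Hypothetical sample line, typed by hand for illustration.
line = "Cpu(s):  4.0%us, 61.5%sy,  0.0%ni, 20.0%id,  7.0%wa,  1.5%hi,  6.0%si,  0.0%st"
values = parse_cpu_line(line)
print(busiest(values))
if values.get("wa", 0.0) > 5.0:  # 5% threshold taken from the wa note above
    print("wa above 5%: look at free -m")
```

Feeding it one line per sample from a batch-mode capture gives a quick view of which category dominated during a spike.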

--

Second Edition of my "Max Power" Firewall Book

Now Available at http://www.maxpowerfirewalls.com

New Book: "Max Power 2026" Coming Soon

Check Point Firewall Performance Optimization



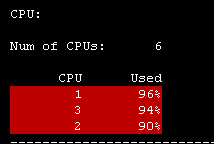

I appreciate the thorough explanation of how the top output relates to gateway performance. While I didn't catch a screenshot of the top output at peak, here is the rest of the screenshot shown above:

It looks to me like a lot of Windows updates were causing it, probably all at the same time. According to the high bandwidth applications view in SmartView, traffic to the internal WSUS was 234 GB and traffic towards the Internet was 106 GB for today.


Depending on what your investigation turns up after following Petr Hantak's and Tim Hall's suggestions, and on whether your environment has grown, you might end up running cpsizeme to check whether your firewalls are still suitably sized for that environment.


Hi Tim,

Thanks for sharing the detailed explanation of the top command.


If you don't want to dig too deep, the following tools are also pretty helpful for getting quick hints about possible root causes:

Healthcheck-Tool: How to perform an automated health check of a Gaia based system

CP-Monitor: Traffic analysis using the 'CPMonitor' tool


In addition to the top command, using pstree is very useful as well, to see which process is called by which parent.

and now to something completely different - CCVS, CCAS, CCTE, CCCS, CCSM elite


I would start the investigation with SecureXL (SXL), top connections, counter limits and messages.

- fwaccel stats - Displays SecureXL acceleration statistics

- cat /proc/ppk/stats - Displays total number of packets that passed through interface

- cat /proc/ppk/drop_statistics - Displays SecureXL drop statistics

- cpview - Displays the CPU utilization (and many other counters)

- cat /proc/interrupts - Displays the number of interrupts on each CPU core from each IRQ

- fw ctl pstat - Displays FireWall internal statistics about memory and traffic

- netstat -ni - Displays a table of all network interfaces

- sar [-u] [-P { <cpu> | ALL }] [interval_in_sec [number_of_samples]] - Displays information about CPU activity, network devices, memory, paging, block IO, etc.

You can also check sk109236.
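To show what can be read out of /proc/interrupts, here is a small Python sketch that sums the interrupt counters per CPU core. It assumes the usual Linux layout (a header row naming the CPUs, then one row per IRQ with one counter per CPU followed by a description); the function name and the sample text are invented for illustration and do not come from a real gateway.

```python
def interrupts_per_cpu(text):
    """Sum the per-CPU counters of every IRQ row in /proc/interrupts text."""
    lines = text.strip().splitlines()
    cpus = lines[0].split()              # header row: CPU0 CPU1 ...
    totals = dict.fromkeys(cpus, 0)
    for line in lines[1:]:
        fields = line.split()
        if not fields:
            continue
        # fields[0] is the IRQ label ("24:", "NMI:"); per-CPU counts follow.
        counts = []
        for value in fields[1:1 + len(cpus)]:
            if not value.isdigit():
                break                    # reached the description columns
            counts.append(int(value))
        if len(counts) != len(cpus):
            continue                     # rows such as "ERR:" hold one global counter
        for cpu, count in zip(cpus, counts):
            totals[cpu] += count
    return totals


# Hypothetical sample, shortened and typed by hand.
SAMPLE = """\
           CPU0       CPU1
 24:     900000       1200   PCI-MSI-edge      eth0
 25:        300     450000   PCI-MSI-edge      eth1
NMI:         10         12   Non-maskable interrupts
ERR:          0
"""

print(interrupts_per_cpu(SAMPLE))
```

The counters are cumulative since boot, so during a spike it is more useful to take two readings a few seconds apart and compare the differences per core.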


I am going to put this in General Product Topics where it belongs.

Love the thread, keep it going!


Dameon, what would your approach to a situation like this be? I think it would be interesting for all of us to hear that.


Y'all have covered most of the things I'd try.


I'm curious what version you are running. We are running R77.30, and just recently turned on CoreXL Dynamic Dispatcher (sk105261). It is on by default in R80.10.

We went through some of these steps trying to figure out what was causing the spike. It turned out to be one of our partners uploading/downloading content, consuming 100% of a CPU core. The good thing that came from this incident was the discovery of CoreXL Dynamic Dispatcher, and the Priority Queuing that comes with it (sk105762). Since enabling the features from these two SKs, CPU utilization on an individual core still reaches 100%, but it does not stay there. Traffic is sent to other cores that are not as busy, spreading the load out.
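The effect described above can be illustrated with a toy model in Python. To be clear, this is not Check Point's dispatcher algorithm, which this thread does not describe. It only contrasts a load-blind placement (modelled here as connection id modulo core count, a stand-in for a static hash) with a "pick the least busy core" placement. All names, costs and counts are invented.

```python
def assign_static(loads, conn_id, cost):
    """Load-blind placement: the core is chosen from the connection id only."""
    core = conn_id % len(loads)
    loads[core] += cost
    return core


def assign_least_loaded(loads, conn_id, cost):
    """Load-aware placement: a new connection goes to the least busy core."""
    core = min(range(len(loads)), key=lambda i: loads[i])
    loads[core] += cost
    return core


def simulate(assign, heavy_cost=100, light_cost=5, light_conns=12, cores=4):
    """Place one heavy transfer, then several light connections."""
    loads = [0] * cores
    assign(loads, 0, heavy_cost)              # the heavy transfer arrives first
    for conn_id in range(1, light_conns + 1):
        assign(loads, conn_id, light_cost)
    return loads


print("static      :", simulate(assign_static))
print("least loaded:", simulate(assign_least_loaded))
```

In this toy model the heavy transfer still occupies its core in both cases; what changes is that other connections stop piling onto the core that is already busy.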


Hi,

I am adding what was not covered yet: look at historical utilization with sar or cpview -t (history mode), try to find spikes, and look at the traffic on each interface, or use some other monitoring tool.

In cpview, check Top-Connections in the Network tab and also the CPU tab to see how much CPU time each one consumes.

In the Advanced, Network tab you can see how much traffic is processed by SXL, PXL and F2F; this should give you a hint about which blades are causing it.

If it's IPS, look at sk110737 to evaluate the impact of signatures. After that it's all about properly tuning SecureXL and CoreXL.
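As a small worked example of reading the SXL, PXL and F2F figures, the Python sketch below turns three packet counts into percentage shares. The function name and the numbers are invented; in practice you would type in the counts you read from cpview.

```python
def path_shares(sxl, pxl, f2f):
    """Return each path's share of the total packets as a percentage."""
    total = sxl + pxl + f2f
    if total == 0:
        return {"SXL": 0.0, "PXL": 0.0, "F2F": 0.0}
    return {
        "SXL": round(100.0 * sxl / total, 1),
        "PXL": round(100.0 * pxl / total, 1),
        "F2F": round(100.0 * f2f / total, 1),
    }


# Invented packet counts, for illustration only.
print(path_shares(sxl=200_000, pxl=300_000, f2f=500_000))
```

With these made-up numbers half of the packets take the F2F path, which would be the place to start asking which blade or rule keeps that traffic from being accelerated.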
