I wonder whether the experts here can shed any light on this deeply frustrating issue.

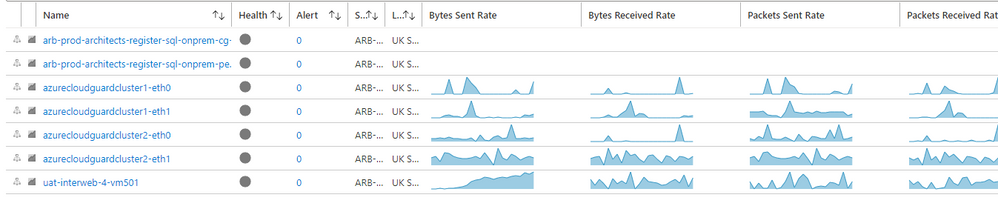

We have a Cloudguard cluster in our Azure tenancy. There is a domain VPN tunnel back to our on-premise Checkpoint cluster.

The Cloudguard cluster (R80.40) is completely up to date, including all Takes and patches; the on-prem cluster is R80.30 (3 pending patches).

We have been struggling to connect an on-premise server, through the tunnel, to an Azure SQL Private Endpoint network interface. Originally we routed the traffic end to end (no NAT in the picture), but this got us nowhere, so we have now tried with NAT.

In the current configuration:

The on-premise network is 192.168.50.0/24. The source host is 192.168.50.251.

The Cloudguard backend network subnet is 10.10.0.0/24. The backend IP addresses of the Cloudguard cluster members are 10.10.0.133 and 10.10.0.134. The front-end IP of the backend LB is 10.10.0.132.

I have a NAT rule on the Cloudguard which Hides the source connection behind the address range 10.10.0.200-10.10.0.204.

To take Azure network security issues out of the equation, I have placed the SQL Private Endpoint directly into the backend network with IP address 10.10.0.135.
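For anyone who wants to sanity-check the address plan above, here is a minimal Python sketch. It only restates the addresses from this post (using the source host address as it appears in the logs); the function name `check_plan` and the dictionary keys are my own, purely for illustration. It checks that each address sits in the subnet I think it does and that the Hide range does not collide with the cluster members, the LB front end, or the Private Endpoint.

```python
import ipaddress

# Addresses as described in this post.
ON_PREM_NET = ipaddress.ip_network("192.168.50.0/24")
BACKEND_NET = ipaddress.ip_network("10.10.0.0/24")
SOURCE_HOST = ipaddress.ip_address("192.168.50.251")  # as seen in the logs
MEMBERS = [ipaddress.ip_address("10.10.0.133"), ipaddress.ip_address("10.10.0.134")]
LB_FRONTEND = ipaddress.ip_address("10.10.0.132")
PRIVATE_ENDPOINT = ipaddress.ip_address("10.10.0.135")
# Hide NAT range 10.10.0.200-10.10.0.204 (five addresses).
HIDE_RANGE = [ipaddress.ip_address("10.10.0.200") + i for i in range(5)]


def check_plan() -> dict:
    """Return a dict of simple consistency checks on the address plan."""
    in_use = set(MEMBERS) | {LB_FRONTEND, PRIVATE_ENDPOINT}
    return {
        "source_in_on_prem": SOURCE_HOST in ON_PREM_NET,
        "pe_in_backend": PRIVATE_ENDPOINT in BACKEND_NET,
        "hide_range_in_backend": all(ip in BACKEND_NET for ip in HIDE_RANGE),
        "hide_range_collides": any(ip in in_use for ip in HIDE_RANGE),
    }


if __name__ == "__main__":
    for name, result in check_plan().items():
        print(f"{name}: {result}")
```

This does not prove anything about Azure's behaviour; it only confirms that the plan as written is internally consistent.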

In the Checkpoint logs, I can see Encryption success on the on-premise cluster and Decryption success on the Cloudguard cluster. In the same log page, I see NAT Hide working as expected: the destination IP address (10.10.0.135) remains intact and the source IP address has been translated from 192.168.50.251 to 10.10.0.201.

I have added ARP entries to the Cloudguard cluster (both members) as described by sk30197; for example: "add arp proxy ipv4-address 10.10.0.200 interface eth1 real-ipv4-address 10.10.0.133" etc.
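To spell out the "etc." above, this small Python sketch prints the full set of proxy ARP commands for one cluster member, following exactly the pattern of the example command. The helper name `proxy_arp_commands` is hypothetical, and it assumes (as in my setup) that the interface is eth1 and that each member uses its own backend IP as the real address.

```python
import ipaddress


def proxy_arp_commands(first: str, last: str, interface: str, member_ip: str) -> list:
    """Build one 'add arp proxy' line per address in the inclusive range first..last."""
    start = int(ipaddress.ip_address(first))
    end = int(ipaddress.ip_address(last))
    return [
        f"add arp proxy ipv4-address {ipaddress.ip_address(n)} "
        f"interface {interface} real-ipv4-address {member_ip}"
        for n in range(start, end + 1)
    ]


if __name__ == "__main__":
    # Assumption: run once per member, each with its own backend address.
    for member in ("10.10.0.133", "10.10.0.134"):
        print(f"# member {member}")
        for line in proxy_arp_commands("10.10.0.200", "10.10.0.204", "eth1", member):
            print(line)
```

The output is only text to paste into each member; it does not touch the gateways itself.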

Since placing the Private Endpoint directly in the backend subnet should essentially eliminate Azure network security as the culprit, let's assume this is not an Azure security issue. Does anybody have any idea where I should look next?

The only (untested) theory I have left at this stage is that an Azure Private Endpoint will not respond to packets whose source IP address is not associated directly with a VM virtual interface in Azure (i.e. under this theory, ARP responses from a vNIC aren't enough - the IP address has to be actually configured and defined in Azure if the PE is to listen). This seems unlikely to me and I feel I'm rather clutching at straws, but I have no other ideas.

Of course, even if the above theory were correct, it would not lead to a solution, since the source addresses could only be added to one member of the Cloudguard cluster or the other, not both, so everything would break whenever a failover moved traffic to the member without them.

I have tried adding routing rules in the Azure backend subnet, to ensure that packets destined for any address in the Hide source range (10.10.0.200-10.10.0.204) are routed directly to the backend LB. I'm not sure these rules are needed - I suspect not - but nothing works either way. As mentioned above, a similar configuration without NAT also does not work, even with Azure routing rules in place.

We will be raising a support ticket soon with our Support Partner, but any ideas as to where I might be able to look next would be gratefully received.

Many thanks in advance.