Troubleshooting Azure HA cluster failover and the API call

We are deploying a new cluster for a customer and we wanted to test failover. I have tested this in a test Azure account previously and this worked.

I built another test environment today and I am showing the same symptoms as the customer.

Everything seems to deploy fine: we can establish SIC with the management server, install policy, etc. However, if we fail over, either by running clusterXL_admin down or by powering off the active gateway, a failover is triggered within Check Point (cphaprob stat on the secondary gateway shows it is now active), but the cluster-vip IP still shows in Azure on the other gateway. It has not moved across to the second gateway.

This suggests to me that either the gateway isn't triggering the API call or the API call is triggered but not actioned and I wonder how we troubleshoot this.

Was hoping to get some help from the community before going through TAC because you have to do the initial hoop jumping before you get to someone who knows cloud.

Thanks

Scott

I'd start with running $FWDIR/scripts/azure_ha_test.py and see what it says.

So the output I get is:

Image version is: harry_main-294-801-GW

Reading configuration file...

Setting api versions for "ha" solution

ARM versions are: {

"resources": "?api-version=2019-07-01"

}

Error:

The hostname xxxxfw002 should be either 'xxxxfw01' or 'xxxxfw02'

[Expert@xxxxfw002:0]#

What is it comparing it to? The name in the SmartConsole or the name in Azure?

Must be Azure as I have checked SmartConsole and it has the fw002 object name matching the fw002 hostname on GAIA.

Yes, it is checking the name of the VM in the Azure portal.

If you deployed the ARM template and then manually changed the hostname, you're in for some fun changes to the azure_ha_test.py and azure_had.py scripts on the gateways.

This is the part of the script where it looks for the hardcoded cluster_name + '1' as the name of the first member (and cluster_name + '2' as the second):

if conf['hostname'] not in {cluster_name + '1', cluster_name + '2'}:

Please also check

It explains manual testing without executing the failover

And the important part about the naming convention (because of the hardcoded scripts):

Naming Constraints

Do not change the name of any resources.

Cluster Members VM names must match the Cluster name with a suffix of '1' and '2'.

Network Interface names must match the Cluster Member VM names with a suffix of '-eth0' and '-eth1'.

The IP address of the cluster has to match the configuration file.

By default it should match the cluster name.
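To make the naming constraints concrete, here is a minimal Python sketch that derives the names the convention implies and repeats the membership test quoted above. The function names are hypothetical and this is not the code from azure_ha_test.py; the error text is copied from the output earlier in the thread, and the cluster name "xxxxfw0" is only inferred from that error message.

```python
def expected_names(cluster_name):
    """Return the VM and NIC names implied by the naming constraints."""
    vms = [cluster_name + suffix for suffix in ("1", "2")]
    nics = [vm + nic for vm in vms for nic in ("-eth0", "-eth1")]
    return vms, nics


def check_hostname(hostname, cluster_name):
    """Repeat the membership test from the thread.

    Returns an error string when the hostname does not fit, else None.
    """
    vms, _ = expected_names(cluster_name)
    if hostname not in vms:
        return "The hostname {} should be either '{}' or '{}'".format(hostname, *vms)
    return None


# Assumption: the configured cluster name is "xxxxfw0", inferred from the error text.
print(check_hostname("xxxxfw002", "xxxxfw0"))
print(check_hostname("xxxxfw01", "xxxxfw0"))
```

With these inputs the first call returns the same message the test script printed, because "xxxxfw002" is neither "xxxxfw0" + "1" nor "xxxxfw0" + "2". The second call returns None.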

Hi PB, is this script OK to run in prod? It doesn't stop or start services, change state, etc.?

I don't believe it does anything harmful.

It might be worth double checking with TAC, though.

Have you got any solution for this issue?

Hi Serv,

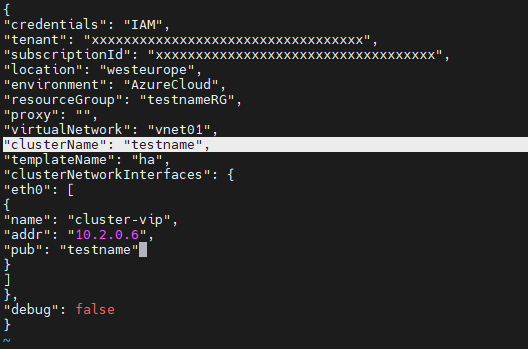

If you are facing the same issue, it is possible that the "clusterName" value in azure-ha.json doesn't match the VM names.

You can find this file at $FWDIR/conf/azure-ha.json.

As mentioned in earlier posts, the value here must match the name of the VM in Azure.

For example, if your VM names in Azure are "testname1" and "testname2", the value under "clusterName" should be "testname".

Hope this helps,
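The comparison described above can be sketched in Python as follows. This is only an illustration: the helper names are hypothetical, the sample JSON is a minimal stand-in that contains nothing but the "clusterName" key mentioned in the post (the real azure-ha.json holds more settings), and the VM names have to be supplied by hand from the Azure portal.

```python
import json


def infer_cluster_name(vm_names):
    """Strip the '1'/'2' suffixes from exactly two VM names.

    Returns None when the names do not fit the convention.
    """
    first, second = sorted(vm_names)
    if first.endswith("1") and second.endswith("2") and first[:-1] == second[:-1]:
        return first[:-1]
    return None


def cluster_name_matches(config_text, vm_names):
    """Compare "clusterName" in the JSON text with the name implied by the VM names."""
    configured = json.loads(config_text).get("clusterName")
    return configured is not None and configured == infer_cluster_name(vm_names)


# Minimal stand-in for the file contents; not a full azure-ha.json.
sample = '{"clusterName": "testname"}'
print(cluster_name_matches(sample, ["testname1", "testname2"]))
print(cluster_name_matches(sample, ["testname01", "testname02"]))
```

The second call is the interesting case: VM names ending in "01" and "02" imply a cluster name of "testname0", so a "clusterName" of "testname" does not match.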

Hello Edan_Leventhal,

Thanks for your suggestions.

We already have the correct name in place, but the VIP is still not moving to the secondary gateway's interface after failover.

Hi @Serv

First, I would recommend taking a look at the HA logs located in $FWDIR/log/azure_had.elg. Check if there are any errors present that could provide insight into the issue.

Additionally, when you executed the azure_ha_test.py, did it complete successfully, or did you encounter the same error message: 'The hostname xxxxfw002 should be either 'xxxxfw01' or 'xxxxfw02''?

There's a possibility that the alterations you've implemented to the azure-ha.json might not have loaded properly. To address this, you could attempt to kill the HA process "kill -9 $(cpwd_admin getpid -name AZURE_HAD)".

Alternatively, you can execute the command "$FWDIR/scripts/azure_ha_cli.py reconf" to ensure the configuration gets properly loaded.
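For the log check suggested above, a small Python filter can pull out the lines worth reading first. This is a rough sketch under assumptions: the keyword list is a guess, the format of azure_had.elg is not shown in this thread, and the sample lines below are borrowed from the azure_ha_test.py output quoted earlier rather than from a real log.

```python
def find_suspect_lines(lines, keywords=("error", "exception", "traceback", "failed")):
    """Return (line_number, text) pairs whose text contains any keyword, ignoring case."""
    hits = []
    for number, line in enumerate(lines, start=1):
        lowered = line.lower()
        if any(word in lowered for word in keywords):
            hits.append((number, line.rstrip("\n")))
    return hits


# Stand-in lines taken from the test script output in this thread, not from a real log.
sample = [
    "Reading configuration file...\n",
    "Error:\n",
    "The hostname xxxxfw002 should be either 'xxxxfw01' or 'xxxxfw02'\n",
]
for number, text in find_suspect_lines(sample):
    print(number, text)
```

On a gateway, the same function could be fed the open log file, for example via open(os.path.expandvars("$FWDIR/log/azure_had.elg")), since iterating over a file object yields its lines.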