Checkpoint AWS Egress connectivity

I am working on a POC in which I have two Virtual Private Clouds (VPCs) on AWS, and I am trying to send egress internet traffic along this path: Servers -> Squid Proxy -> Check Point -> NAT gateway.

The servers, the Check Point gateway and the NAT gateway are in VPC1, and the Squid proxy is in VPC2.

I have set up VPC peering, but it seems the traffic is getting blocked at the Check Point gateway.

I understand that there are a lot of hops for egress traffic, but I can't move the components.

I don't know if I am missing something in the Check Point configuration.


If the traffic is getting blocked at the Check Point device, there should be some evidence of that.

What do you see in the logs?

What does your policy look like to allow the traffic?

Can you verify (e.g. with tcpdump) that the traffic is even reaching the Check Point device?


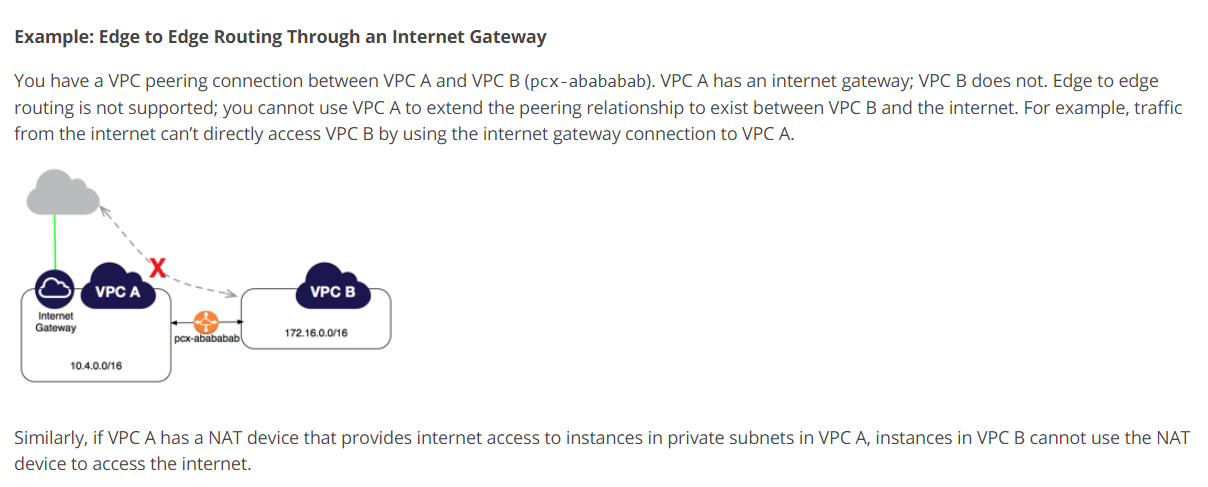

I believe that you are attempting a configuration unsupported by AWS:

Unsupported VPC Peering Configurations - Amazon Virtual Private Cloud

If you are interested in proxy connectivity via a peer VPC, place the proxy in VPC1 and define it in your hosts' proxy configuration.

You can see an example of me using a Check Point gateway as a proxy for a peered VPC here:

vSEC deployment scenarios in AWS

I.e.:

You do not have to use Check Point as the proxy, though; the idea is the same: place the proxy in the VPC that is connected to the internet and pipe its egress traffic through Check Point.
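As a rough illustration of why routing through the peer VPC fails while an explicit proxy in VPC1 works, here is a minimal Python sketch. It models the peering connection as a filter that only carries packets whose source is in the sending VPC's CIDR and whose destination is in the receiving VPC's CIDR. That is an assumed simplification of the AWS restriction linked above, not AWS's implementation, and every CIDR and address below is hypothetical.

```python
"""Simplified model of the AWS VPC peering restriction (an assumption, not AWS's actual logic)."""
from ipaddress import ip_address, ip_network

# Hypothetical address plan for illustration only.
VPC1 = ip_network("10.1.0.0/16")  # VPC with the Check Point gateway and internet access
VPC2 = ip_network("10.2.0.0/16")  # peered VPC without its own internet egress


def peering_delivers(src: str, dst: str, from_vpc, to_vpc) -> bool:
    """Return True if the (modeled) peering connection would carry this packet.

    Assumption: the peer only accepts packets sourced in the sending VPC and
    destined to the receiving VPC, so it cannot act as a transit hop.
    """
    return ip_address(src) in from_vpc and ip_address(dst) in to_vpc


HOST_IN_VPC2 = "10.2.0.20"       # hypothetical client in the peered VPC
PROXY_IN_VPC1 = "10.1.0.10"      # hypothetical proxy (e.g. the Check Point gateway) in VPC1
INTERNET_SERVER = "203.0.113.5"  # documentation address standing in for an internet host

# Routed design: the packet is addressed to the internet server and merely
# routed through VPC1, so the modeled peering connection drops it.
routed = peering_delivers(HOST_IN_VPC2, INTERNET_SERVER, VPC2, VPC1)

# Proxy design: the client connects to the proxy itself; the proxy then opens
# its own internet connection from inside VPC1, which never crosses the peering.
proxied = peering_delivers(HOST_IN_VPC2, PROXY_IN_VPC1, VPC2, VPC1)

print(f"routed via peer: {routed}, explicit proxy in VPC1: {proxied}")
```

The proxy design works under this model because only in-CIDR addresses ever cross the peering connection; the internet-bound leg starts in VPC1.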


Hi Vladimir,

Thanks for the response. I believe that, as Check Point is a network device, it should be able to route the traffic to the internet even if the traffic is coming from a different VPC (similar to a transit VPC).

In my scenario, the application servers and Check Point servers are in VPC1 and the proxy server is in VPC2 (I understand it's weird), but we were hoping that traffic would flow from the app servers (VPC1) to the proxy (VPC2) to the Check Point server (VPC1), which would then route it to the internet (in a kind of zig-zag manner).

Regards

Rohit
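The zig-zag path can be traced hop by hop to see where it breaks. The sketch below reuses an assumed simplification of the peering rule (source must be in the sending VPC, destination in the receiving VPC); the CIDRs, IPs and hop labels are hypothetical, and it assumes Check Point hides Squid behind its own address, so the reply it forwards back still carries the internet server's source IP.

```python
from ipaddress import ip_address, ip_network

# Hypothetical address plan; substitute your own CIDRs and IPs.
VPC1 = ip_network("10.1.0.0/16")  # app servers, Check Point, NAT gateway
VPC2 = ip_network("10.2.0.0/16")  # Squid proxy
APP, SQUID, INTERNET = "10.1.0.30", "10.2.0.20", "203.0.113.5"


def peering_allows(src, dst, from_vpc, to_vpc):
    # Assumed rule: peering carries a packet only if its source is in the
    # sending VPC and its destination is in the receiving VPC.
    return ip_address(src) in from_vpc and ip_address(dst) in to_vpc


# Each hop: (label, packet source, packet destination, (from VPC, to VPC) or None).
hops = [
    ("app server -> Squid", APP, SQUID, (VPC1, VPC2)),
    # Squid opens its upstream connection to the internet server and relies on
    # routing to send it to Check Point in VPC1: destination is outside VPC1.
    ("Squid -> internet, next hop Check Point", SQUID, INTERNET, (VPC2, VPC1)),
    # Even if the outbound hop were carried, the reply Check Point forwards to
    # Squid has an internet source address, which is outside VPC1.
    ("reply: Check Point -> Squid", INTERNET, SQUID, (VPC1, VPC2)),
]


def trace(hops):
    """Return (label, delivered) for each hop; hops inside one VPC always pass."""
    results = []
    for label, src, dst, crossing in hops:
        ok = crossing is None or peering_allows(src, dst, *crossing)
        results.append((label, ok))
    return results


for label, ok in trace(hops):
    print(f"{label:45} {'delivered' if ok else 'DROPPED by peering'}")
```

Under this model only the first hop survives, which matches the symptom of traffic never making it out through Check Point.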


Hi Rohit,

I have never built a transit VPC with Check Point, but I have with a CSR 1000v. I had the same issue (traffic not routing) and the same thought process (it's a network device; all I need to do is get the traffic there and it will route properly). The only way I was able to accomplish this was to create a VPN between a CSR in VPC1 and a CSR in VPC2 and then route the traffic through the tunnel. Although this was not optimal for me, it had to be done until my vendor supported direct BGP peering from my on-prem ASR to the VGW in their VPC.

This was costly and, as stated before, not optimal. I'll add to Vladimir's question: why is the proxy in a completely separate VPC?

- Mike


Mike,

You are absolutely correct: the only way to achieve the desired behavior is to have traffic between VPCs in a VPN tunnel.
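Why a tunnel gets around the restriction can be shown with a small encapsulation model. This is a minimal Python sketch under the same assumed peering rule as earlier (only the outermost header is checked, source in the sending VPC, destination in the receiving VPC). It does not model IPsec encryption, key exchange, or any Check Point or CSR configuration, and the VPN endpoint addresses and CIDRs are hypothetical.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Optional

VPC1 = ip_network("10.1.0.0/16")  # hypothetical: Check Point + NAT gateway
VPC2 = ip_network("10.2.0.0/16")  # hypothetical: Squid proxy + VPN endpoint


@dataclass(frozen=True)
class Packet:
    src: str
    dst: str
    inner: Optional["Packet"] = None  # encapsulated packet, if this is tunnel traffic


def peering_allows(pkt: Packet, from_vpc, to_vpc) -> bool:
    # Assumed rule: the peering connection only inspects the outer header.
    return ip_address(pkt.src) in from_vpc and ip_address(pkt.dst) in to_vpc


def encapsulate(pkt: Packet, tunnel_src: str, tunnel_dst: str) -> Packet:
    # Wrap the original packet in an outer header between the two VPN endpoints.
    return Packet(tunnel_src, tunnel_dst, inner=pkt)


VPN_GW_VPC2 = "10.2.0.5"   # hypothetical VPN endpoint in VPC2
VPN_GW_VPC1 = "10.1.0.10"  # hypothetical VPN endpoint (e.g. the gateway) in VPC1
original = Packet("10.2.0.20", "203.0.113.5")  # Squid -> internet server

plain_ok = peering_allows(original, VPC2, VPC1)  # internet destination: dropped
tunneled = encapsulate(original, VPN_GW_VPC2, VPN_GW_VPC1)
tunnel_ok = peering_allows(tunneled, VPC2, VPC1)  # both endpoints in-CIDR: carried

# On arrival, the VPC1 endpoint decapsulates and routes the inner packet onward.
delivered_inner = tunneled.inner if tunnel_ok else None
print(plain_ok, tunnel_ok, delivered_inner)
```

The inner packet is unchanged; only the outer header, whose addresses both sit inside the VPC CIDRs, is exposed to the peering connection. The cost Mike mentions comes from needing a VPN endpoint in each VPC.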