
Log Exporter guide

Hello All,

We have recently released the Log Exporter solution.

A few posts have already gone up and the full documentation can be found at sk122323.

However, I've received a few questions both on- and offline and decided to create a sort of Log Exporter guide.

But before I begin, I'd like to point out that I'm not a Check Point spokesperson, nor is this an official Check Point thread.

I was part of the Log Exporter team and am creating this post as a public service.

I'll try to focus only on the current release, and please remember that anything I might say regarding future releases is not binding or guaranteed.

Partly because I'm not the one who makes those decisions, and partly because priorities will shift based on customer feedback, resource limitations and a dozen other factors. The current plans and the current roadmap are likely to change drastically over time.

And just for the fun of it, I’ll mostly use the question-answer format in this post (simply because I like it and it’s convenient).

- Log Exporter – what is it?
- Performance
- Filters
- Filters: Example 1
- Filters: Example 2
- Gosh darn it, I forgot something! (I'll edit and fill this in later)
- Feature request


146 Replies


Log Exporter – what is it?

So... what is it?

Check Point "Log Exporter" is an easy and secure method for exporting Check Point logs in a few standard protocols and formats.

Which protocols do you support?

UDP and TCP.

We also support secure connections (TLS) using mutual authentication.

What formats do you support?

At this time we support CEF, LEEF, Syslog and also a “generic” format.

Why isn’t field X covered in your CEF mapping?

We developed our CEF mapping (choosing which Check Point field is mapped to which CEF variable) in collaboration with Micro Focus (the owners of ArcSight), and it represents what we believe to be the best overall coverage of all the major fields from across our blades. However, since there is only a limited number of available CEF variables, we had to pick and choose which fields we wished to map.

If you feel a specific field should be given higher priority, or if you don't use some blades and would rather remap those variables to other fields, you can simply create a user-defined mapping file that reflects your own preferences.

What's the deal with LEEF – is it supported or not?

Yes… with some caveats.

We are not yet fully LEEF compliant, in that the timestamp is sent in epoch format (which LEEF does not support). We do, however, have an ongoing collaboration with IBM, and they plan to update LEEF to support the epoch format as well.

Once they do that we will be LEEF compliant.

Unfortunately, I don’t have access to any of their timetables and don’t know when they are actually going to do this.

I use Splunk, and I didn’t notice CIM in the listed formats – what gives?

We plan to release a Splunk application which will support CIM in a future Log Exporter release.

In the meantime, the Generic format will give good field extraction results.

I can’t find the policy name field. Where is it?

The policy name field doesn't actually exist as a unique field. It is part of the __policy_id_tag field, which is not really all that readable. In this release, we filter out this field by default, and we plan to address this in a future release.

(If you really need this field, you can remove the filter from the mapping file; bear in mind you'll have to somehow parse the field to extract the relevant information.)
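For anyone going down that road, the parsing could look roughly like the sketch below. It assumes the __policy_id_tag layout seen in the firewall sample log in Filters: Example 2 (product=...[db_tag=...;mgmt=...;date=...;policy_name=...]); the function name is hypothetical and other versions may format the tag differently. The backslash before the closing bracket in that sample may just be an escaping artifact, so the sketch strips it if present.

```python
def parse_policy_id_tag(tag: str) -> dict:
    """Split a __policy_id_tag value into a dict of its key=value parts.

    Assumed layout (taken from one sample log, not from a spec):
    product=<product>[db_tag={...};mgmt=<server>;date=<epoch>;policy_name=<name>]
    """
    result = {}
    # Everything before the first '[' is expected to be "product=<product>"
    head, _, body = tag.partition("[")
    key, eq, value = head.partition("=")
    if eq:
        result[key.strip()] = value.strip()
    # Drop the closing bracket and an escaping backslash, if either is present
    body = body.rstrip("]").rstrip("\\")
    # The bracketed part is a ';'-separated list of key=value items
    for item in body.split(";"):
        key, eq, value = item.partition("=")
        if eq:
            result[key.strip()] = value.strip()
    return result


tag = ("product=VPN-1 & FireWall-1[db_tag={BACD59B6-0BBC-A544-A5F9-A136152F0B37};"
       "mgmt=Domain2_Server;date=1520501096;policy_name=Standard\\]")
print(parse_policy_id_tag(tag)["policy_name"])  # Standard
```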

What about the domain name field?

Same answer as above.

In my SmartLog each log has a type such as log, alert, control etc.

Where can I find this information?

This information is contained in the flags field. Unfortunately, it's not human readable (just a bunch of bits). For now, we filter this field out by default and plan to address this in a future release.


Performance

What is the CPU usage of the Log Exporter?

What is the memory consumption?

How many logs/second can I export?

Yeah, those are really good questions.

Unfortunately, I don’t have any official answers.

I can give some anecdotal answers and specific examples or comments I’ve heard from customers.

If you've tested this in your environment, I encourage you to add your results/comments below.

On a Smart-1 405 running R80.10 with CEF format over TCP, we saw ~18.2K logs/sec with a CPU usage of ~115% (1.15 out of 4 cores).

In a different environment, on a Smart-1 410 with CEF format over TLS, we saw ~21.5K logs/sec with a CPU usage of ~115%.

Another customer who compared the new solution to CPLogToSyslog stated that the new solution used fewer resources – but didn’t go into specifics.

Again – these are anecdotal examples and not official numbers.

Our focus in this release was to make sure the log exporter was not the bottleneck – to make sure it can outperform the indexer.


Hello Yonatan

I have configured Log Exporter and continuously see the message below in the log_indexer.elg file. At the same time, we can see the logs on the syslog server, so I'm wondering why we are getting these messages. Any leads?

LogFormatExtractor::extractFields Filter List is empty


Hey @Raman_Arora

How are you?

This message was removed from the new versions of Log Exporter.

It has no meaning for you - just for debugging 🙂

Shay


Hello Shay,

I am doing well, hope you are too. I have around 50 Log Exporter instances running, and I see these messages in only one of them. There are no other messages, so I was a bit concerned.

Any way to get rid of these messages?

Raman


Hey Raman,

Unfortunately, the only way to get rid of it is to install a new jumbo version that does not contain this message by default.

If possible, can you please share your version and current Jumbo take, so I can tell you which Jumbo take no longer contains it?


We are on R80.20 with Take 161 installed.


Hey @Raman_Arora

It seems that this error message is turned off in this specific Jumbo take.

Did you run "cp_log_export reconf" after installing this jumbo?


I have recreated the instance. Do I still need to run cp_log_export reconf?


In this case no reconf is needed.

To keep investigating this, I suggest opening an SR so that one of our developers can investigate it and give you a solution as quickly as possible.


Filters

- First of all, you need to know that Check Point logs are arranged in a key:value format.

source:1.2.3.4 action:drop [key]:[value]

I’ll use this information in some of my answers.
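As a rough illustration of that structure, the sketch below pulls the key:"value" pairs out of a syslog-formatted line like the sample logs in the filter examples. It is not part of Log Exporter; the regular expression and function name are my own assumptions. Pairs come back as a list rather than a dict because R80 logs can repeat a key (layer_name, rule_name and others appear twice in the firewall sample).

```python
import re

# key:"value" pairs; a value may contain escaped characters such as \]
PAIR_RE = re.compile(r'(\w+):"((?:[^"\\]|\\.)*)"')


def parse_log(line: str) -> list[tuple[str, str]]:
    """Return the (key, value) pairs of a log line, in order.

    The syslog header (priority, timestamp, host, ...) contains no
    key:"value" text, so scanning the whole line is enough.
    """
    return PAIR_RE.findall(line)


line = ('<134>1 2018-03-21 18:09:26 MDS-72 CheckPoint 13752 - '
        '[action:"Accept"; layer_name:"Network"; layer_name:"Application"; ]')
for key, value in parse_log(line):
    print(key, "=", value)
```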

My SIEM charges me based on storage/throughput – how can I reduce the number of logs I’m sending them?

We know that the access logs (VPN-1 & Firewall-1) comprise the bulk of the logs for most customers. And in many cases, those are not really the interesting logs.

We added the option to filter out those logs. In the targetConfiguration.xml file you can find the filter_out_by_connection parameter. If you set it to true, your access logs will not be sent.

That’s great! But I don’t really need the Application Control logs either. I only want the IPS logs to be sent. How can I do that?

Unfortunately, you can’t do that in this release.

But I can see the different blades which are filtered out! What happens if I just add the Application Control blade there? Won’t that work?

It probably will. But this isn’t recommended or supported. It wasn’t tested and could have unexpected results.

This is something we plan to address in future releases, but for now, it’s not supported.

The only thing that really interests me is why my users are blocked. Can I send out just the drop logs?

Unfortunately, in this release, we don't support value-based filters. We can only filter based on the field (key).

Again, we plan to address value-based filters in a future release.

So what type of filters can you do?

I’m glad you asked! Let’s look at some examples.


Filters: Example 1

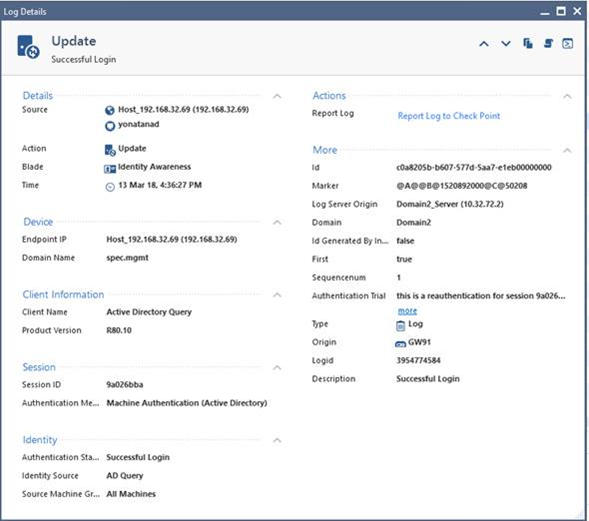

The customer wants to get Identity Awareness logs. He needs to keep records going back at least one year; however, he has a storage problem.

We start by looking at a sample IDA log.

Let’s analyze the raw data:

<134>1 2018-03-21 17:25:25 MDS-72 CheckPoint 13752 - [action:"Update"; flags:"150784"; ifdir:"inbound"; logid:"160571424"; loguid:"{0x5ab27965,0x0,0x5b20a8c0,0x7d5707b6}"; origin:"192.168.32.91"; originsicname:"CN=GW91,O=Domain2_Server..cuggd3"; sequencenum:"1"; time:"1521645925"; version:"5"; auth_method:"Machine Authentication (Active Directory)"; auth_status:"Successful Login"; authentication_trial:"this is a reauthentication for session 9a026bba"; client_name:"Active Directory Query"; client_version:"R80.10"; domain_name:"spec.mgmt"; endpoint_ip:"192.168.32.69"; identity_src:"AD Query"; identity_type:"machine"; product:"Identity Awareness"; snid:"9a026bba"; src:"192.168.32.69"; src_machine_group:"All Machines"; src_machine_name:"yonatanad";]

It contains a lot of relevant information, but some of those fields are probably not really relevant, either because they contain information that is always static in my organization, or because the information is not IDA-related.

If I wanted to boil it down into the relevant information I’d probably end up with something closer to this:

action:"Update"; origin:"192.168.32.91"; time:"1521645925"; auth_status:"Successful Login"; domain_name:"spec.mgmt"; identity_src:"AD Query"; identity_type:"machine"; snid:"9a026bba"; src:"192.168.32.69"; src_machine_group:"All Machines"; src_machine_name:"yonatanad";

It went down from 755 bytes to around 323 bytes, a reduction of ~60% in log size.

So I map out the relevant fields:

action, origin, time, auth_status, domain_name, identity_src, identity_type, snid, src, src_machine_group, src_machine_name.

I then create a user-defined mapping file with those fields and set the exportAllFields parameter to false. Now only fields which appear in my mapping file will be sent (a sort of whitelist approach).
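If you'd rather not write that whitelist by hand, a short script can generate it. This is a sketch that assumes the fields/field/origName/exported/required element layout shown in the mapping file examples later in this thread; check the "Field Mapping Configuration XML" table in sk122323 before relying on it. The function name is hypothetical, and the optional `required` set is there for the next step described below.

```python
import xml.etree.ElementTree as ET

# Fields kept in the Identity Awareness example above
IDA_FIELDS = ["action", "origin", "time", "auth_status", "domain_name",
              "identity_src", "identity_type", "snid", "src",
              "src_machine_group", "src_machine_name"]


def build_mapping(fields, required=()):
    """Return mapping-file XML (without declaration) exporting each listed field.

    Fields named in `required` also get <required>true</required>.
    """
    root = ET.Element("fields")
    for name in fields:
        field = ET.SubElement(root, "field")
        ET.SubElement(field, "origName").text = name
        ET.SubElement(field, "exported").text = "true"
        if name in required:
            ET.SubElement(field, "required").text = "true"
    return ET.tostring(root, encoding="unicode")


xml_body = build_mapping(IDA_FIELDS, required={"identity_src"})
# Prepend an XML declaration before saving it in the target sub-directory
print('<?xml version="1.0" encoding="utf-8"?>\n' + xml_body)
```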

However, this isn't enough, as it means I'll get many logs from other blades that are mostly empty, containing only the fields that exist in almost all logs, such as action, origin, src, etc.

So to make sure I only get Identity Awareness logs, I'll pick one (or more) of the fields that are unique to the Identity Awareness blade, such as identity_src or identity_type, and give those fields the 'required' attribute in the mapping file.

Now only logs which contain this field will be sent.
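To make the combined effect concrete, here is a small simulation of the whitelist plus 'required' logic described above, applied to already-parsed (key, value) pairs. It models the behaviour as described in this post, not Log Exporter's actual code, and the names are hypothetical. In particular, it assumes that when several fields are marked required, a log must contain all of them.

```python
# Hypothetical whitelist for the IDA example: field name -> required?
WHITELIST = {
    "action": False, "origin": False, "time": False, "auth_status": False,
    "domain_name": False, "identity_src": True, "identity_type": False,
    "snid": False, "src": False, "src_machine_group": False,
    "src_machine_name": False,
}


def apply_whitelist(pairs, whitelist):
    """Return the exported pairs, or None if the log would not be sent."""
    present = {key for key, _ in pairs}
    required = {key for key, is_required in whitelist.items() if is_required}
    if not required <= present:
        return None  # a required field is missing: drop the whole log
    # exportAllFields=false: keep only fields listed in the mapping file
    return [(key, value) for key, value in pairs if key in whitelist]


ida_log = [("action", "Update"), ("flags", "150784"),
           ("identity_src", "AD Query"), ("src", "192.168.32.69")]
fw_log = [("action", "Accept"), ("flags", "6307840"), ("src", "10.32.91.190")]
print(apply_whitelist(ida_log, WHITELIST))  # flags removed
print(apply_whitelist(fw_log, WHITELIST))   # None
```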

The end result is that the customer has reduced his output to only IDA logs which only contain the bare minimum of what he actually needs.


How do you create a mapping file? Can you share its format? When I set the exportAllFields parameter to false, I don't get any logs.


Hi @Sony_James

Can you please tell me what is your goal by creating a mapping file?

Basically, a mapping file gives you the ability to change a field's name or value and to prevent a specific field from being exported.

When you configure Log Exporter, each format has its own mapping file out of the box and this file is used when your exporter is running.

I would like to understand what you want to achieve so I will be able to suggest and give you the correct solution.

Shay


Filters: Example 2

The customer has a very large deployment and has hundreds of GB of logs per day.

His vendor charges him per bit sent, and the customer is looking to reduce his footprint any way he can.

He needs all his logs, so filtering out logs is not an option. Instead, he’s looking to reduce the size of each log sent.

The whitelist approach won’t work this time as there are simply too many different fields. Instead, the customer is trying to identify fields which don’t contain relevant information and filter them out (a sort of blacklist approach).

Let’s start with a random sample log.

<134>1 2018-03-21 18:09:26 MDS-72 CheckPoint 13752 - [action:"Accept"; conn_direction:"Outgoing"; flags:"6307840"; ifdir:"inbound"; ifname:"eth1"; logid:"320"; loguid:"{0x5a9fba17,0x0,0x5b20a8c0,0x149c}"; origin:"192.168.32.91"; originsicname:"CN=GW91,O=Domain2_Server..cuggd3"; sequencenum:"1"; time:"1521648566"; version:"5"; __policy_id_tag:"product=VPN-1 & FireWall-1[db_tag={BACD59B6-0BBC-A544-A5F9-A136152F0B37};mgmt=Domain2_Server;date=1520501096;policy_name=Standard\]"; aggregated_log_count:"242539"; bytes:"17427"; client_inbound_bytes:"5647"; client_inbound_packets:"65"; client_outbound_bytes:"11780"; client_outbound_packets:"62"; connection_count:"121149"; creation_time:"1520417303"; dst:"111.11.11.21"; duration:"1231263"; hll_key:"6523019790322755370"; inzone:"Internal"; last_hit_time:"1521648533"; layer_name:"Network"; layer_name:"Application"; layer_uuid:"d2787740-4872-4342-a0c1-58470e2d9bef"; layer_uuid:"cdeb4bd1-f11f-4d36-a78f-03cfa317d06d"; match_id:"1"; match_id:"16777219"; parent_rule:"0"; parent_rule:"0"; rule_action:"Accept"; rule_action:"Accept"; rule_name:"Network_Rule_One"; rule_name:"Appi_Cleanup rule"; rule_uid:"51419c04-5fc4-4263-8cca-e5d14f2dcf56"; rule_uid:"5440fb90-dd92-4e6a-8191-8957c279f3a9"; outzone:"External"; packets:"127"; product:"VPN-1 & FireWall-1"; proto:"17"; protocol:"DNS-UDP"; server_inbound_bytes:"11780"; server_inbound_packets:"62"; server_outbound_bytes:"5647"; server_outbound_packets:"65"; service:"53"; service_id:"domain-udp"; sig_id:"12"; src:"10.32.91.190"; update_count:"2054"; ]

We are starting out at 1549 bytes.

Right off the bat, we need to decide if we need the header.

No? Removing it saves 55 bytes per log.

Next, I notice that the default field separator is a semicolon followed by a space. I can reduce this to just the semicolon for an extra 53 bytes.
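Both savings are easy to check against your own sample logs before touching the exporter configuration. The sketch below assumes the key:"value" syslog layout of the sample above; it drops the header and re-joins the fields with a bare semicolon, then compares character counts. The function name is hypothetical, and in Log Exporter itself the header and delimiters are set in the format definition files rather than by post-processing.

```python
import re

PAIR_RE = re.compile(r'(\w+):"((?:[^"\\]|\\.)*)"')


def compact(line: str) -> str:
    """Drop the syslog header/brackets and use ';' with no space between fields."""
    return "".join(f'{key}:"{value}";' for key, value in PAIR_RE.findall(line))


sample = ('<134>1 2018-03-21 18:09:26 MDS-72 CheckPoint 13752 - '
          '[action:"Accept"; conn_direction:"Outgoing"; ifname:"eth1"; ]')
short = compact(sample)
print(short)
print(f"{len(sample)} -> {len(short)} characters")
```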

Now we try to identify fields that don't interest me. Obviously, this is customer-specific, but I'd say that these fields can probably be safely removed in most cases:

flags, originsicname, sequencenum, version, __policy_id_tag, layer_uuid, server_inbound_bytes, server_inbound_packets, server_outbound_bytes, server_outbound_packets (the server_* counters duplicate the client_* counters, which are already present: server_inbound mirrors client_outbound and vice versa)

I could be much more aggressive with what I cut out but this is a good start.

Down to 982 bytes.
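The blacklist is just as simple to prototype on parsed (key, value) pairs. Before dropping the server_* counters, the sketch below checks that they really mirror the client_* ones in a given log (they do in the sample: server_inbound_bytes and client_outbound_bytes are both 11780). The names are hypothetical; in the exporter itself this is done with <exported>false</exported> in the mapping file.

```python
BLACKLIST = {
    "flags", "originsicname", "sequencenum", "version", "__policy_id_tag",
    "layer_uuid", "server_inbound_bytes", "server_inbound_packets",
    "server_outbound_bytes", "server_outbound_packets",
}

# server_* counter -> the client_* counter it is expected to duplicate
MIRRORS = {
    "server_inbound_bytes": "client_outbound_bytes",
    "server_inbound_packets": "client_outbound_packets",
    "server_outbound_bytes": "client_inbound_bytes",
    "server_outbound_packets": "client_inbound_packets",
}


def server_counters_are_redundant(pairs) -> bool:
    """True if every server_* counter present equals its client_* twin."""
    values = dict(pairs)
    return all(values.get(client) == values[server]
               for server, client in MIRRORS.items() if server in values)


def drop_fields(pairs, blacklist):
    """Keep every (key, value) pair whose key is not blacklisted."""
    return [(key, value) for key, value in pairs if key not in blacklist]


log = [("action", "Accept"), ("flags", "6307840"),
       ("client_inbound_bytes", "5647"), ("client_outbound_bytes", "11780"),
       ("server_inbound_bytes", "11780"), ("server_outbound_bytes", "5647")]
if server_counters_are_redundant(log):
    print(drop_fields(log, BLACKLIST))
```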

Now the next step is something that’s a bit more extreme but is from an actual use case where it was done.

There is no point in sending out client_inbound_packets:"65", which has a large key and a small value, when I can just as easily send out F12:"65".

I can create a mapping file on the receiving end (assuming the SIEM supports this) that knows to translate F12 back into the relevant key.

So I create the relevant mapping file, in which the fields I want to cut get the '<exported>false</exported>' property and the rest of the fields are mapped to short alphanumeric codes.
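The renaming, and the matching translation on the receiving end, can be sketched as a pair of functions. This assumes your SIEM can apply a lookup table like this (not all can); the code list and function names are hypothetical. Repeated keys such as layer_name map to the same code, which is why F22, F23 and several other codes appear twice in the result shown next, so the decoder returns pairs rather than a dict.

```python
import re


def build_codes(fields):
    """Assign short codes F1, F2, ... to the fields you keep, in order."""
    return {name: f"F{i}" for i, name in enumerate(fields, start=1)}


def encode(pairs, codes):
    """Rename kept fields to their codes; fields without a code are dropped."""
    return "".join(f'{codes[key]}:"{value}";' for key, value in pairs if key in codes)


def decode(text, codes):
    """Receiving side: translate codes back to the original field names."""
    names = {code: name for name, code in codes.items()}
    return [(names[code], value)
            for code, value in re.findall(r'(F\d+):"((?:[^"\\]|\\.)*)"', text)]


codes = build_codes(["action", "conn_direction", "layer_name"])
pairs = [("action", "Accept"), ("flags", "6307840"),
         ("layer_name", "Network"), ("layer_name", "Application")]
wire = encode(pairs, codes)
print(wire)  # F1:"Accept";F3:"Network";F3:"Application";
print(decode(wire, codes))
```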

We end up with:

F1:"Accept";F2:"Outgoing";F3:"inbound";F4:"eth1";F5:"320";F6:"{0x5a9fba17,0x0,0x5b20a8c0,0x149c}";F7:"192.168.32.91";F8:"1521648566";F9:"242539";F10:"17427";F11:"5647";F12:"65";F13:"11780";F14:"62";F15:"121149";F16:"1520417303";F17:"111.11.11.21";F18:"1231263";F19:"6523019790322755370";F20:"Internal";F21:"1521648533";F22:"Network";F22:"Application";F23:"1";F23:"16777219";F24:"0";F24:"0";F25:"Accept";F25:"Accept";F26:"Network_Rule_One";F26:"Appi_Cleanup rule";F27:"51419c04-5fc4-4263-8cca-e5d14f2dcf56";F27:"5440fb90-dd92-4e6a-8191-8957c279f3a9";F28:"External";F29:"127";F30:"VPN-1 & FireWall-1";F31:"17";F32:"DNS-UDP";F33:"53";F34:"domain-udp";F35:"12";F36:"10.32.91.190";F37:"2054";

687 bytes. We started with 1549 bytes, so that's a reduction of ~55%.

And I can get better results if I’m willing to be fairly aggressive with my field filters.

Edit: As was correctly pointed out, the unit of measurement is not actually bits, but bytes (number of characters as given by a text editor word count).


Maybe I missed it, but is there a sample anywhere of what the mapping configuration file should look like? I don't see it detailed in this post or in the SK article.


Hello Aaron,

There are three relevant files.

The targetConfiguration.xml file contains the 'system configuration', such as port, protocol, etc., which can also be changed using the command-line flags.

This file also points to the definition and mapping files with the <mappingConfiguration> and <formatHeaderFile> parameters (in case you want to use custom settings).

The definition files (the default files are located in the conf sub-directory) set the header and log format: delimiters, operators, etc.

The mapping files will describe the field mapping (renaming fields from X to Y) and filtering options.

There are several mapping and definition files included by default (for syslog, CEF, etc.) and you can find them in the target folder.

We also included a 'demo' mapping file called fieldsMapping.xml which has several examples of how to use some of the options.

The different options for each file are described in the SK.

Hope this helped clarify the issue.

Regards,

Yonatan


How can I ensure that the audit logs are also sent? Are they sent by default?

By audit logs I mean the creation or deletion of a rule, the creation of objects, etc.


Hello Biju,

The audit logs are sent by default.

In the targetConfiguration.xml settings file, there is a parameter called log_types.

The line looks like this:

<log_types></log_types><!--all[default]|log|audit/-->

The default is for both logs and audit logs to be sent, but you can change this to only send one or the other.

HTH

Yonatan


Yes, I saw this and did not change any default settings. Even though the settings are at their defaults, in my scenario the audit logs are not reaching the syslog server, while the access control logs are. I did a Wireshark capture on the syslog server but didn't find any adt logs.

Is there a way to find out whether the audit logs are being sent out of the MDS?

Regards,

Biju Nair


Hello Biju,

Edit - was originally part of another answer. I moved it here as it was more relevant to your question.

With regard to audit logs, there are two 'gotchas' to look out for. If you're using a log server, some of your audit logs will probably be generated on your management server, so you need to make sure that you have an exporter deployed on the server that holds the audit logs.

For an MDS server some of your audit logs will probably be generated on the MDS level - so you should make sure that you have an exporter deployed on your MDS level.

HTH

Yonatan


Hi Biju,

Did you find out why the audit logs were not being forwarded from Check Point? I am facing the same issue. Please help.

Regards,

Mitesh Agrawal


Some actual examples would go a long way, such as including the files/changes made for your filter examples.


I installed the Log Exporter hotfix on a management server in my lab running R80.10 JHF 112. I used CPUSE to download and install it. Refer to the Installation section of sk122323 for more details.

Next, you need to create your targets with the cp_log_export command. Refer to the Basic deployment section of sk122323 for more details.

If you look in $EXPORTERDIR on the management server, you will find a sub-directory for each target you created with cp_log_export. Inside each target sub-directory are the two files you will use for filters.
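If you have many targets, a few lines of Python can list which sub-directories of $EXPORTERDIR hold a targetConfiguration.xml. This is a convenience sketch that assumes the directory layout described above; the function name is hypothetical, and it only reads the EXPORTERDIR environment variable when one is set.

```python
import os
from pathlib import Path


def find_targets(exporter_dir):
    """Return the names of sub-directories that contain targetConfiguration.xml."""
    root = Path(exporter_dir)
    return sorted(p.name for p in root.iterdir()
                  if p.is_dir() and (p / "targetConfiguration.xml").is_file())


if __name__ == "__main__" and "EXPORTERDIR" in os.environ:
    for name in find_targets(os.environ["EXPORTERDIR"]):
        print(name)
```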

targetConfiguration.xml

Line 2 of the snippet below: <mappingConfiguration> specifies the file that contains all the field definitions for the filter/mapping. It can be any filename in that target sub-directory; I used fm-browsing.xml for this example.

Line 4: start with <exportAllFields> set to true, which sends every field and ignores the <exported> settings in your <mappingConfiguration> file. Use the information from those entries to determine which fields you care about, then define them in the filter/mapping file specified in <mappingConfiguration>. Once you are ready to test your filter/mapping, set <exportAllFields> to false and restart the export with "cp_log_export restart name <name of target>".

<resolver>
  <mappingConfiguration>fm-browsing.xml</mappingConfiguration><!--if empty the fields are sent as is
  without renaming-->
  <exportAllFields>true</exportAllFields> <!--in case exportAllFields=true - exported element in fieldsMapping.xml is ignored and fields not from fieldsMapping.xml are exported as notMappedField field-->
</resolver>

fm-browsing.xml

The format of this file is defined in the Advanced configuration post-deployment section of sk122323, in the table labeled "Field Mapping Configuration XML".

Example of the long XML format (more whitespace)

<?xml version="1.0" encoding="utf-8"?>
<fields>
  <field>
    <origName>user_agent</origName>
    <exported>true</exported>
    <dstName>newer_agent</dstName>
    <required>true</required>
  </field>
</fields>

Example of a more condensed XML format (it works exactly the same as the format above; it just looks different and is easier to cut and paste, IMO)

<?xml version="1.0" encoding="utf-8"?>
<fields>
<field><origName>time</origName><exported>true</exported><required>true</required></field>
<field><origName>product</origName><exported>false</exported><required>true</required></field>
<field><origName>origin</origName><exported>true</exported><required>true</required></field>
<field><origName>originsicname</origName><exported>false</exported><required>true</required></field>
<field><origName>proto</origName><exported>true</exported><required>true</required></field>
<field><origName>action</origName><exported>true</exported><required>true</required></field>
<field><origName>src</origName><exported>true</exported><required>false</required></field>
<field><origName>src_user_dn</origName><exported>true</exported><required>false</required></field>
<field><origName>dst</origName><exported>true</exported><required>false</required></field>
<field><origName>client_type_os</origName><exported>true</exported><required>false</required></field>
<field><origName>service_id</origName><exported>true</exported><required>false</required></field>
<field><origName>user_agent</origName><exported>true</exported><required>false</required></field>
<field><origName>app_category</origName><exported>true</exported><required>false</required></field>
<field><origName>app_desc</origName><exported>true</exported><required>false</required></field>
<field><origName>appi_name</origName><exported>true</exported><required>false</required></field>
<field><origName>matched_category</origName><exported>true</exported><required>false</required></field>
<field><origName>protocol</origName><exported>true</exported><required>false</required></field>
<field><origName>method</origName><exported>true</exported><required>false</required></field>
<field><origName>resource</origName><exported>true</exported><required>false</required></field>
<field><origName>src_machine_name</origName><exported>true</exported><required>false</required></field>
<field><origName>src_user_name</origName><exported>true</exported><required>false</required></field>
<field><origName>user</origName><exported>true</exported><required>false</required></field>
<field><origName>web_client_type</origName><exported>true</exported><required>false</required></field>
</fields>
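A quick way to sanity-check a mapping file like this before restarting the exporter is to parse it and list each field's flags. The sketch below uses only the element names shown in these examples; it is not a validator for the full schema in sk122323, it skips fields nested inside table blocks, and the function names are hypothetical. It also lists fields that are required but not exported (product and originsicname above). Going by the descriptions in this thread, that combination filters on a field's presence without sending it, which is worth double-checking is intentional.

```python
import xml.etree.ElementTree as ET


def summarize_mapping(xml_text):
    """Map origName -> (exported, required) text for each top-level <field>.

    Missing elements are reported as None rather than guessing a default.
    """
    root = ET.fromstring(xml_text)
    return {field.findtext("origName"):
            (field.findtext("exported"), field.findtext("required"))
            for field in root.findall("field")}


def required_but_hidden(summary):
    """Fields that must be present in a log but are not exported."""
    return [name for name, (exported, required) in summary.items()
            if exported == "false" and required == "true"]


sample = """<?xml version="1.0" encoding="utf-8"?>
<fields>
<field><origName>time</origName><exported>true</exported><required>true</required></field>
<field><origName>product</origName><exported>false</exported><required>true</required></field>
<field><origName>src</origName><exported>true</exported><required>false</required></field>
</fields>"""
print(required_but_hidden(summarize_mapping(sample)))
```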


Perfect, thanks Wes!


Thanks, Wes! I know this is an old post, but it was super helpful to get things up and running on R80.30. I do have one question if anyone is still listening. For some reason, only one of my gateways is exporting the "rule_name" and none of them are exporting the "policy_name". Has anyone experienced this problem?

Thanks,

Mike


Hi,

I have an issue with the "layer_uuid" field: I cannot seem to remove it from the logs. I have this in my mapping config file:

<field><origName>layer_uuid</origName><exported>false</exported></field>

However, the field still shows up in the logs. I have removed other fields as well, these do not show up in the logs, so the configuration should be correct.

Regards

Morten


Hello Morten,

I think this might be related to the use of tables.

This is addressed in the SK, but it can still often lead to misunderstandings.

From the SK:

<table>: Some fields will appear in tables depending on the log format. This information can be found in the elg log - one entry for every new field. A field can appear in multiple tables; each distinct instance is considered a new field.

In R80+ we introduced the concept of tables in the logs. When you wish to work with/on a field, you must specify its exact location if it's in a table.

To find if a field is in a table you can search for that field in the elg file. For example:

[log_indexer 13874 4076104592]@MDS-72[12 Aug 9:48:22] Read Log Format field name:['layer_uuid']. Field from table:[match_table].

I can see that the 'layer_uuid' is in the 'match_table' table. So to manipulate that field (either change its name or whether or not to export it) I would use something like this:

<table><tableName>match_table</tableName>
  <fields>
    <field><origName>layer_uuid</origName><exported>false</exported></field>
  </fields>
</table>

You can also see an example of this in the example mapping file fieldsMapping.xml.
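With many fields, searching the elg file by hand gets tedious. The sketch below scans elg lines for the "Read Log Format field name" message quoted above, groups fields by table, and renders a table block like the one in this reply. The regular expression is based only on that one sample line, so the message format may differ between versions; the function names are hypothetical.

```python
import re
from collections import defaultdict

# Based on the single sample elg line quoted above
FIELD_RE = re.compile(
    r"Read Log Format field name:\['([^']+)'\]\. Field from table:\[([^\]]+)\]")


def tables_by_field(lines):
    """Map each table name to the set of field names that appear in it."""
    tables = defaultdict(set)
    for line in lines:
        match = FIELD_RE.search(line)
        if match:
            field, table = match.groups()
            tables[table].add(field)
    return tables


def hide_in_table(table, fields):
    """Render a <table> mapping block that stops exporting the given fields."""
    rows = "\n".join(
        f"    <field><origName>{name}</origName><exported>false</exported></field>"
        for name in sorted(fields))
    return (f"<table><tableName>{table}</tableName>\n"
            f"  <fields>\n{rows}\n  </fields>\n</table>")


elg = ["[log_indexer 13874 4076104592]@MDS-72[12 Aug 9:48:22] Read Log Format "
       "field name:['layer_uuid']. Field from table:[match_table]."]
found = tables_by_field(elg)
print(hide_in_table("match_table", found["match_table"]))
```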

HTH

Yonatan


That worked.

Thanks!


©1994-2026 Check Point Software Technologies Ltd. All rights reserved.
