Not web-based, not proxied traffic through an Autoscaling Group

As you may know, the standard, documented deployment of an autoscaling group (in AWS and elsewhere) is always sandwiched between load balancers.

For egress traffic, the documented approach uses an internal load balancer as a front-end proxy. The ELB (which is deployed as part of the CloudFormation template) is configured to load-balance across the ASG, and the ASG members are configured to act as HTTP/HTTPS proxies. Customers then have to point their web clients (browsers, wget, curl, Windows Update, etc.) to that ELB as their proxy, and the ELB forwards the traffic to an instance in the vSEC ASG.

The challenge is that egress traffic is often not web-based, and sometimes it is operationally difficult to enforce proxy settings. In such cases it would be beneficial to use a more conventional, routing-based approach to make sure that outbound traffic is inspected by some member of the ASG.

There are two approaches one can take here. The first is to "reserve" some instances of the ASG so that they won't be scaled in, and then manually set routes that point to these specific instances, making them the default gateway for internet-bound traffic. While this works, it does not provide any resiliency or high availability.
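As a rough illustration of this first approach, here is a minimal sketch. It assumes that "reserving" is done with ASG scale-in protection, that the protected subnets' route tables already contain a default route, and that you know the gateway's network interface ID. The function name `pin_gateway` and its parameters are hypothetical; the two clients are expected to be boto3 `autoscaling` and `ec2` clients created by the caller.

```python
def pin_gateway(autoscaling, ec2, asg_name, instance_id, eni_id,
                route_table_ids, cidr="0.0.0.0/0"):
    """Protect one ASG member from scale-in and make it the default gateway.

    `autoscaling` and `ec2` are assumed to be boto3 clients, e.g.
    boto3.client("autoscaling") and boto3.client("ec2").
    """
    # Assumption: "reserving" an instance maps to scale-in protection.
    autoscaling.set_instance_protection(
        InstanceIds=[instance_id],
        AutoScalingGroupName=asg_name,
        ProtectedFromScaleIn=True,
    )
    # Point the existing default route of each table at the gateway's ENI.
    # replace_route fails if the route does not exist yet; use create_route
    # in that case.
    for table_id in route_table_ids:
        ec2.replace_route(
            RouteTableId=table_id,
            DestinationCidrBlock=cidr,
            NetworkInterfaceId=eni_id,
        )
```

Nothing in this sketch reacts to the pinned instance failing, which is exactly the resiliency gap described above.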

The second approach is what this post is about. Below you'll find a link to a PoC of a Lambda function, plus some instructions on how to set the trigger for this function. When deployed properly, the Lambda function listens to notifications and alerts about the ASG and, in response, automatically maintains an optimized mapping of route tables to active gateways. This allows hands-free use of ASG members as default gateways, so the ASG can protect any type of outbound traffic, not only proxied web traffic. As currently implemented, whenever a member of the ASG becomes unavailable or "OutOfService" according to the ELB, the code finds all the route tables that used this gateway and moves them to another, healthy gateway.
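To make the failover idea concrete, here is a minimal sketch of the remapping step. It is not the PoC code: the function names, the least-loaded selection rule, and the data shapes (a dict of route table ID to gateway instance ID, and a dict of instance ID to network interface ID) are all assumptions made for illustration. `ec2` is expected to be a boto3 EC2 client.

```python
def plan_route_moves(route_targets, healthy_gateways):
    """Return {route_table_id: new_gateway} for tables whose gateway is unhealthy.

    route_targets: {route_table_id: gateway_instance_id} (current state)
    healthy_gateways: iterable of instance IDs the ELB reports as in service
    """
    healthy = sorted(set(healthy_gateways))
    if not healthy:
        return {}  # nothing to fail over to; leave the routes untouched
    # Count how many route tables each healthy gateway already serves.
    load = {gw: 0 for gw in healthy}
    for gw in route_targets.values():
        if gw in load:
            load[gw] += 1
    moves = {}
    for table_id in sorted(route_targets):
        if route_targets[table_id] in load:
            continue  # current gateway is healthy
        # Assumed policy: move to the least-loaded healthy gateway.
        target = min(healthy, key=lambda gw: (load[gw], gw))
        moves[table_id] = target
        load[target] += 1
    return moves


def apply_moves(ec2, moves, eni_by_instance, cidr="0.0.0.0/0"):
    """Repoint the default route of each moved table (ec2: boto3 EC2 client)."""
    for table_id, gw in moves.items():
        ec2.replace_route(
            RouteTableId=table_id,
            DestinationCidrBlock=cidr,
            NetworkInterfaceId=eni_by_instance[gw],
        )
```

For example, with route tables `rtb-a` to `rtb-d` pointing at `i-1`, `i-2`, `i-2`, and `i-3`, and only `i-1` and `i-3` healthy, `plan_route_moves` returns `{"rtb-b": "i-1", "rtb-c": "i-3"}`, spreading the orphaned tables across the surviving gateways.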

The PoC code and deployment instructions are available here.

Note 1: this approach could be combined with other Lambda-based solutions that keep Route 53 synchronized with the ASG, so that non-TCP ingress traffic can also flow through the ASG.

Note 2: this code has not been extensively tested. I'd recommend additional testing if you want to use it in production.

- Tags:

- autoscaling

- aws

- lambda

Awesome post

Very useful

thx 🙂

Given this limitation of CP ASGs (as it pertains to egress traffic):

"At the moment, the original client's IP address can only be logged and cannot be used to enforce access rules in the security policy."

I may have to use HA clusters in each AZ in order to achieve normal outbound traffic inspection.

On another note, if you are inspecting outbound HTTPS traffic, how do you handle certificate distribution to ASG members? (Sorry, I did not read the code, but I am curious.)

About the original client's IP addresses: of course, if you don't use an ELB to front-end the proxy and instead use the method described in this post, you will see the clients' real (private) IP addresses and will be able to enforce outbound rules based on them.

About distributing certificates: I'll have to go back to my notes, but the SK for autoscaling in AWS (sk112575) covers this as well.

Jonathan,

Can you tell me if this method circumvents the AWS limitation of trans-VPC egress routing?

I see its value for ASGs in the same VPC, but one of the scenarios I am working on involves giving multiple VPCs secure access to Internet resources without local IGWs.

For now, the best I can think of is proxying the traffic through the peered VPC containing Check Point clusters.

Thank you.

If you do NOT use the lambda function above and instead use the original documented way of protecting outbound traffic, then, indeed, the ELB that front-ends the proxy, as well as the ASG itself, can live in a different VPC that's simply peered with the VPCs where the outbound traffic originates. But remember that you then also need to configure all the web-clients on these instances that you want to secure, to use that ELB as their web proxy.

Maybe I did not state the question clearly:

If I am using the method you have described, how would it allow me to implement trans-VPC egress?

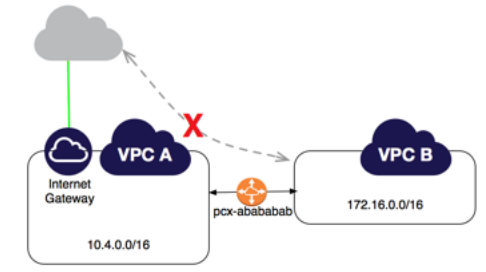

I was under the impression that AWS simply prohibits return packets from exiting the IGW-equipped VPC, regardless of the routes set up in the peered VPCs.

That is, VPC1 has an IGW and a vSEC (single or ASG) and is peered with VPC2.

VPC1 has a route to the peered VPC2 CIDR and subnets.

VPC2 has a 0.0.0.0/0 route pointing to its peer.

Traffic successfully traverses the peering connection, then enters and exits the vSEC on its way to the Internet.

Return traffic is discarded by AWS as soon as it determines that the traffic is destined for hosts in the peered VPC.

As per AWS Peering Documentation:

Example: Edge to Edge Routing Through an Internet Gateway

You have a VPC peering connection between VPC A and VPC B (pcx-abababab). VPC A has an internet gateway; VPC B does not. Edge to edge routing is not supported; you cannot use VPC A to extend the peering relationship to exist between VPC B and the internet. For example, traffic from the internet can’t directly access VPC B by using the internet gateway connection to VPC A.

Similarly, if VPC A has a NAT device that provides internet access to instances in private subnets in VPC A, instances in VPC B cannot use the NAT device to access the internet.

Given these limitations, I cannot imagine that proper automated routing alone will help.

The only alternative to my original design is to set up a VPN between the peered VPCs that traverses the peering connection, and then use the vSEC to inspect and route the traffic to and from the Internet.

Hi, as you stated, in AWS you can't route through a peering connection. The Lambda function that I shared above maintains the route tables of the protected subnets so that their routes point to (i.e., have as their target) some active member of the ASG. So the ASG has to live in the same VPC as the subnets it protects for this to work.

Yes, you can have a "transit vpc" design with a VPN tunnel between spoke VPCs and a hub VPC that has a Check Point gateway, or cluster, or even a pair of these, in different AZs. We should have more documentation on how to set it up in the near future. Note though that currently ASGs do not support VPN. So this is completely independent from the topic of the original post 🙂

Thank you for clarifying.

When you state that ASGs do not support VPN, you are referring to CP ASGs only, correct?