NTP clock sync not working on CloudGuard R80.10 HA

Hi All...

I have successfully deployed a Check Point CloudGuard HA cluster running R80.10, following the Check Point CloudGuard IaaS High Availability for Microsoft Azure R80.10 and above Deployment Guide.

Topology:

- eth0 (external, facing the Internet): 172.19.16.20/28 on FW1, 172.19.16.21/28 on FW2
- eth1 (internal, towards the on-premise NTP server): 172.19.16.37/28 on FW1, 172.19.16.38/28 on FW2

As per the template, the cluster is deployed with a backend load balancer with the IP 172.19.16.36. No frontend load balancer has been deployed.

My NTP server sits on the on-premise network with the IP address 10.64.17.10.

I have added a route for the NTP server on the firewall pointing to 172.19.16.33 (the first usable IP of the internal eth1 subnet, which Azure uses as that subnet's default gateway).

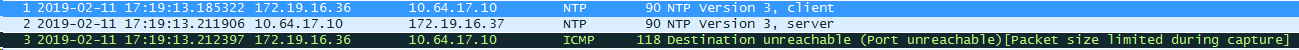

However, I see strange behaviour: the initial NTP request is sourced from the backend load balancer IP (172.19.16.36) towards the NTP server, the NTP server replies to the FW1 IP address (.37), and the firewall then sends an ICMP unreachable, sourced from the backend load balancer IP, back to the NTP server.

As soon as I remove the route via the eth1 interface (forcing traffic out through the default route on eth0), I can see bidirectional communication between the FW eth0 interface and the NTP server:

15:00:13.292177 IP 172.19.16.20.entextmed > 10.64.17.10.ntp: NTPv3, Client, length 48

15:00:13.317949 IP 10.64.17.10.ntp > 172.19.16.20.entextmed: NTPv3, Server, length 48

However, even with this bidirectional communication, the output of `show ntp current` displays:

No server has yet to be synchronized

I have attached Wireshark captures from both the eth0 and eth1 interfaces.

The end goal is to get NTP (and all other traffic to the on-premise network) working via the internal interface.

Any ideas?
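Seeing replies in the capture only proves reachability; ntpd still has to accept the samples before it will synchronize. As a diagnostic, the sketch below sends one NTPv3 client request (the same kind shown in the tcpdump output) and applies the RFC 5905 on-wire formulas to compute the clock offset and round-trip delay. It assumes Python 3 on a host that can reach the server on UDP 123; `sntp_query` and `offset_and_delay` are hypothetical helper names, not a Check Point or ntpd tool.

```python
import socket
import struct
import time

# Seconds between the NTP epoch (1900-01-01) and the Unix epoch (1970-01-01).
NTP_EPOCH_OFFSET = 2208988800


def ntp_to_unix(seconds, fraction):
    """Convert a 64-bit NTP timestamp (32-bit seconds, 32-bit fraction) to Unix time."""
    return seconds - NTP_EPOCH_OFFSET + fraction / 2**32


def offset_and_delay(t1, t2, t3, t4):
    """RFC 5905 on-wire calculation.

    t1 = client transmit, t2 = server receive, t3 = server transmit,
    t4 = client receive. t1/t4 come from the local clock, t2/t3 from the server.
    A negative offset means the local clock is ahead of the server.
    """
    offset = ((t2 - t1) + (t3 - t4)) / 2
    delay = (t4 - t1) - (t3 - t2)
    return offset, delay


def sntp_query(server, timeout=2.0):
    """Send one NTPv3 client-mode request; return (offset, delay) in seconds."""
    packet = bytearray(48)
    packet[0] = (3 << 3) | 3  # LI = 0, version 3, mode 3 (client)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        t1 = time.time()
        # Put our transmit time in the request's transmit timestamp field.
        struct.pack_into("!II", packet, 40,
                         int(t1) + NTP_EPOCH_OFFSET, int((t1 % 1) * 2**32))
        sock.sendto(bytes(packet), (server, 123))
        data, _ = sock.recvfrom(512)
        t4 = time.time()
    if len(data) < 48:
        raise ValueError("short NTP reply")
    # Server receive (bytes 32-39) and transmit (bytes 40-47) timestamps.
    rx_s, rx_f, tx_s, tx_f = struct.unpack("!4I", data[32:48])
    return offset_and_delay(t1, ntp_to_unix(rx_s, rx_f),
                            ntp_to_unix(tx_s, tx_f), t4)
```

For example, `offset, delay = sntp_query("10.64.17.10")` from a host with a path to the on-premise server. A large offset here, even with a clean two-way exchange, would point at the clock itself rather than at routing.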


Have you configured the necessary UDRs in Azure?


The UDR has been set up correctly to use the eth1 interface. I resolved the traffic flow issue by changing eth1 to be a Sync-only interface and eth0 to be the cluster interface, with the cluster IP set to the virtual IP on eth0. We now see two-way traffic between the gateway and the NTP server.

However, the clock still won't sync because the offset and jitter are too high.

Here is the output from the firewall:

[Expert@fw1:0]# ntpq -p

remote refid st t when poll reach delay offset jitter

==============================================================================

10.112.17.10 169.254.0.1 3 u 41 64 377 18.479 -224130 6225.18

10.64.17.10 195.66.241.3 2 u 48 64 377 25.672 -223593 4348.23

ntpq> associations

ind assID status conf reach auth condition last_event cnt

===========================================================

1 14834 9024 yes yes none reject reachable 2

2 14835 9024 yes yes none reject reachable 2

ntpq> rv 14834

assID=14834 status=9024 reach, conf, 2 events, event_reach,

srcadr=10.112.17.10, srcport=123, dstadr=172.19.16.37, dstport=123,

leap=00, stratum=3, precision=-23, rootdelay=10.849,

rootdispersion=6.653, refid=169.254.0.1, reach=377, unreach=0, hmode=3,

pmode=4, hpoll=6, ppoll=6, flash=400 peer_dist, keyid=0, ttl=0,

offset=-2241300.599, delay=18.479, dispersion=4.444, jitter=4674.461,

reftime=e00fa28e.f5e2a217 Thu, Feb 14 2019 8:17:18.960,

org=e00fa2a3.f188753b Thu, Feb 14 2019 8:17:39.943,

rec=e00fab6d.355bf748 Thu, Feb 14 2019 8:55:09.208,

xmt=e00fab6d.304cedeb Thu, Feb 14 2019 8:55:09.188,

filtdelay= 19.71 20.36 19.22 19.35 18.48 18.71 18.72 18.90,

filtoffset= -224925 -224710 -224477 -224295 -224130 -223959 -223786 -223615,

filtdisp= 0.00 0.98 1.97 2.96 3.90 4.89 5.85 6.84

ntpq>
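ntpq prints the `reftime`, `org`, `rec` and `xmt` timestamps as hexadecimal NTP seconds and fraction (32.32 fixed point, counted from 1900-01-01 UTC), followed by a readable date. The sketch below decodes them and measures the gap between `org` and `rec`. It assumes, as the dates above suggest, that `org` holds the server's transmit time from its last reply while `rec` is the local receive time, so the gap approximates the clock offset (ignoring about half of the ~18 ms round-trip delay).

```python
from datetime import datetime, timedelta, timezone

# NTP timestamps count seconds from 1900-01-01 00:00:00 UTC.
NTP_EPOCH = datetime(1900, 1, 1, tzinfo=timezone.utc)


def parse_ntpq_timestamp(text):
    """Parse ntpq's hex 'seconds.fraction' form, e.g. 'e00fa28e.f5e2a217'."""
    sec_hex, frac_hex = text.split(".")
    return int(sec_hex, 16) + int(frac_hex, 16) / 2**32


def to_datetime(ntp_seconds):
    """Convert NTP seconds (float) to a timezone-aware UTC datetime."""
    return NTP_EPOCH + timedelta(seconds=ntp_seconds)


# Values copied from `ntpq> rv 14834` above.
reftime = parse_ntpq_timestamp("e00fa28e.f5e2a217")
org = parse_ntpq_timestamp("e00fa2a3.f188753b")  # server clock (assumed)
rec = parse_ntpq_timestamp("e00fab6d.355bf748")  # local clock

print("reftime:", to_datetime(reftime).isoformat())
gap = rec - org
print("rec - org: %.3f s (%.1f minutes)" % (gap, gap / 60))
```

The gap comes out at roughly 2249 s, about 37.5 minutes. The `offset` column in `ntpq -p` (-224130) appears to be the same value as `offset=-2241300.599` in `rv`, truncated to fit the column; both are in milliseconds, so the local clock is over half an hour ahead rather than a few minutes.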

I have spoken to TAC and they reckon we need a hotfix for this, as per sk105862. I am not sure, though: I would expect the clocks to sync initially and then drift, but in my case they are not syncing at all to start with.
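The `flash=400 peer_dist` and `reject` conditions in the `ntpq` output suggest ntpd is discarding both servers at the selection stage because their synchronization (root) distance is too large, before any offset is applied. A simplified version of the RFC 5905 root-distance formula, fed with the `rv 14834` values, illustrates this. It omits the time-since-last-update dispersion term, and the 1.5 s limit is ntpd's documented default for `tos maxdist` (RFC 5905 itself uses 1 s), so treat the result as an approximation.

```python
# Values from `ntpq> rv 14834`, all in milliseconds.
peer = {
    "rootdelay": 10.849,
    "delay": 18.479,
    "rootdispersion": 6.653,
    "dispersion": 4.444,
    "jitter": 4674.461,
}

MINDISP_MS = 10.0    # RFC 5905 MINDISP (0.01 s)
MAXDIST_MS = 1500.0  # ntpd default "tos maxdist" (1.5 s)


def root_distance_ms(p, include_jitter=True):
    """Simplified RFC 5905 root distance (omits the PHI * age term)."""
    dist = (max(MINDISP_MS, p["rootdelay"] + p["delay"]) / 2
            + p["rootdispersion"] + p["dispersion"])
    if include_jitter:
        dist += p["jitter"]
    return dist


full = root_distance_ms(peer)
without_jitter = root_distance_ms(peer, include_jitter=False)
print("root distance: %.1f ms (limit %.0f ms)" % (full, MAXDIST_MS))
print("without jitter: %.1f ms" % without_jitter)
print("rejected:", full > MAXDIST_MS)
```

Nearly all of the roughly 4.7 s distance comes from jitter; without it the peer would sit around 26 ms, far inside the limit. That fits the observation that the clocks never sync at all: the samples move so much between polls that the server is never selected.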


What happens if you run ntpdate first to sync the clocks?

It should do a one-time sync of the clocks regardless of how far apart they are.
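Why a one-off ntpdate can fix an offset that ntpd will not can be illustrated with the two programs' documented default thresholds: ntpdate steps the clock when the offset exceeds 0.5 s (and always with `-b`), whereas ntpd slews offsets under 128 ms, steps larger ones, and by default refuses offsets above its 1000 s panic threshold unless started with `-g`, which allows one large initial correction. The functions below are a deliberately simplified model of those rules, not ntpd's actual clock discipline (they ignore, for example, the stepout interval ntpd waits before stepping).

```python
NTPD_STEP_S = 0.128     # ntpd default step threshold
NTPD_PANIC_S = 1000.0   # ntpd default panic threshold
NTPDATE_STEP_S = 0.5    # ntpdate steps above this, slews below


def ntpd_action(offset_s, started_with_g=False):
    """Simplified model of how ntpd treats one accepted offset."""
    # -g only lifts the panic limit for the first correction.
    if abs(offset_s) > NTPD_PANIC_S and not started_with_g:
        return "panic"  # ntpd logs an error and exits
    if abs(offset_s) > NTPD_STEP_S:
        return "step"
    return "slew"


def ntpdate_action(offset_s, force_step=False):
    """ntpdate: step if forced (-b) or the offset exceeds 0.5 s, else slew."""
    if force_step or abs(offset_s) > NTPDATE_STEP_S:
        return "step"
    return "slew"


offset = -2241300.599 / 1000  # `offset=` from `ntpq> rv 14834`, ms -> s
print("ntpd:", ntpd_action(offset))
print("ntpd -g:", ntpd_action(offset, started_with_g=True))
print("ntpdate:", ntpdate_action(offset))
```

In this thread ntpd kept running and marked the peers `reject`, so samples were being discarded before any such decision; the model only shows why stepping the clock manually gets past an offset of this size.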


ntpdate does the trick, but the clocks then start drifting again shortly afterwards.
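One explanation consistent with "syncs with ntpdate, then drifts" (not confirmed in the thread) is a VM clock that runs off faster than ntpd can compensate: ntpd's frequency correction is limited to 500 PPM, i.e. 0.5 ms per second or 1.8 s per hour. The sketch estimates a drift rate in PPM from two offset readings taken some time apart, for example two `ntpq -p` offsets after an ntpdate, and compares it with that limit. The sample readings are hypothetical.

```python
MAX_FREQ_PPM = 500.0  # ntpd's maximum frequency correction


def drift_ppm(offset1_s, offset2_s, elapsed_s):
    """Rate at which the offset changes, in parts per million."""
    return (offset2_s - offset1_s) / elapsed_s * 1e6


def correctable(rate_ppm):
    """True if ntpd's frequency adjustment could, in principle, absorb this drift."""
    return abs(rate_ppm) <= MAX_FREQ_PPM


# Hypothetical readings: 0.0 s right after ntpdate, -9.0 s one hour later.
rate = drift_ppm(0.0, -9.0, 3600)
print("drift: %.0f PPM, correctable by ntpd: %s" % (rate, correctable(rate)))
print("limit: %.1f s per hour" % (MAX_FREQ_PPM * 1e-6 * 3600))
```

If the measured rate is well above 500 PPM, no amount of ntpd tuning will hold the clock, which would point towards a platform or timekeeping fix rather than an NTP configuration change.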


Maybe add a cron job that calls ntpdate periodically?

TAC would have to get involved to troubleshoot NTP not syncing properly.
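If a periodic ntpdate is used as a stop-gap, the re-sync interval follows from the drift rate and how much error you can tolerate: interval = tolerance / drift. The helper below turns that into a crontab-style schedule. The ntpdate path, the drift rate and the 1 s tolerance are assumptions; on Gaia, check how your version manages scheduled jobs (for example through the Job Scheduler) rather than assuming a plain crontab.

```python
# Minute steps that divide an hour evenly, so "*/N" fires at regular intervals.
MINUTE_STEPS = [1, 2, 3, 4, 5, 6, 10, 12, 15, 20, 30]


def max_resync_interval_s(drift_ppm, tolerance_s):
    """Seconds before a clock drifting at drift_ppm is off by tolerance_s."""
    if drift_ppm == 0:
        return float("inf")
    return tolerance_s / (abs(drift_ppm) * 1e-6)


def crontab_line(drift_ppm, tolerance_s, server, ntpdate="/usr/sbin/ntpdate"):
    """Build a crontab entry that re-runs ntpdate often enough (hypothetical path)."""
    minutes = max_resync_interval_s(drift_ppm, tolerance_s) / 60
    if minutes >= 60:
        schedule = "0 * * * *"  # hourly is enough
    else:
        # Largest even step that still fits; cron cannot go below one minute.
        fitting = [m for m in MINUTE_STEPS if m <= minutes]
        schedule = "*/%d * * * *" % (fitting[-1] if fitting else 1)
    # -u: send from an unprivileged source port, commonly used while ntpd holds UDP 123
    return "%s %s -u %s" % (schedule, ntpdate, server)


print(crontab_line(3000, 1.0, "10.64.17.10"))
```

With a hypothetical 3000 PPM drift and 1 s tolerance the clock needs re-stepping roughly every 5.5 minutes, so the helper picks a 5-minute schedule. This only masks the drift; it does not fix it.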


Indeed, TAC did get involved and provided a hotfix, as we were hitting the bug outlined in sk105862. Once it was applied, the clocks now sync correctly with the NTP server.

Thanks