
Can we avoid promiscuous mode for vSEC clustering?

I have been working for a few weeks on virtualizing Check Point security gateways. To allow the HA protocol (CCP) so that I could create a ClusterXL cluster, I had to enable promiscuous mode in VMware.

So I was wondering whether there is another solution.

If not, are there any best practices to avoid "route causes" in data centers (packet loss, for example)?

23 Replies

---

I'm not sure what you mean by "route causes."

In general, the CCP packets (which are Multicast by default) are there to determine reachability/availability of the cluster members on interfaces.

You can potentially switch CCP to broadcast mode: How to set ClusterXL Control Protocol (CCP) in Broadcast / Multicast mode in ClusterXL
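Whether a frame counts as unicast, multicast or broadcast is decided by its destination MAC address: the least significant bit of the first octet (the I/G bit) marks a group (multicast) address, and ff:ff:ff:ff:ff:ff is the broadcast address. A minimal Python sketch of that classification follows; the example addresses are illustrative only and are not the addresses CCP actually uses.

```python
def classify_mac(mac: str) -> str:
    """Classify a destination MAC address as 'broadcast', 'multicast' or 'unicast'."""
    octets = [int(part, 16) for part in mac.replace("-", ":").split(":")]
    if len(octets) != 6 or any(not 0 <= o <= 0xFF for o in octets):
        raise ValueError(f"not a 48-bit MAC address: {mac!r}")
    if all(o == 0xFF for o in octets):
        return "broadcast"
    # I/G bit: least significant bit of the first octet set => group (multicast) address
    if octets[0] & 0x01:
        return "multicast"
    return "unicast"


if __name__ == "__main__":
    for mac in ("ff:ff:ff:ff:ff:ff", "01:00:5e:00:00:12", "00:50:56:aa:bb:cc"):
        print(mac, classify_mac(mac))
```

Broadcast is checked first because ff:ff:ff:ff:ff:ff also has the I/G bit set.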

---

Actually, that may not be the right term.

In order "to determine reachability/availability of the cluster members on interfaces", we must allow promiscuous mode on the vSwitch in VMware (for both broadcast and multicast).

And I see some packet loss in my data center due to this mode, so I am looking for best practices to avoid this mode or reduce its impact.

But I haven't found any information about this yet (on the forum or in the CP docs).

For information, we use vSphere 5.5.

Maybe you have another idea?

---

Unfortunately, ClusterXL in its various forms requires multicast or broadcast packets, so this mode is required.

The cost of using it is commensurate with the amount of traffic being passed by the cluster.

Perhaps you can limit its impact by reducing the number of devices directly connected to the same vSwitches as the vSEC instances.

As this sounds like a VMware issue, have you engaged with them at all?
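A back-of-the-envelope sketch of why the number of devices on the vSwitch matters, under a simplifying assumption: each vNIC in promiscuous mode receives a copy of every frame sent by the other devices sharing its vSwitch/VLAN, on top of normal delivery to the real destination. The frame rates and VM counts below are hypothetical, not measurements from this thread.

```python
def promiscuous_extra_copies(device_frame_rates: list[float], promiscuous_vnics: int) -> float:
    """Extra frame copies per second delivered to promiscuous vNICs.

    Simplified model (assumption): every promiscuous vNIC gets a copy of every
    frame sent by the other devices in the same vSwitch/VLAN.
    """
    if promiscuous_vnics < 0 or any(rate < 0 for rate in device_frame_rates):
        raise ValueError("rates and counts must be non-negative")
    return promiscuous_vnics * sum(device_frame_rates)


if __name__ == "__main__":
    # Hypothetical: two cluster members in promiscuous mode, each other VM sends 1,000 frames/s
    crowded = [1000.0] * 30   # 30 other VMs on the same vSwitch
    isolated = [1000.0] * 3   # only 3 other VMs left after moving the rest away
    print(promiscuous_extra_copies(crowded, 2))   # 60000.0
    print(promiscuous_extra_copies(isolated, 2))  # 6000.0
```

In this model the overhead scales linearly with both the traffic of neighbouring devices and the number of promiscuous ports, which is the intuition behind keeping the vSEC vSwitches sparsely populated.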

---

You are absolutely right. It is indeed a VMware issue, and it seems that we must upgrade our vSphere platform to version 6.

With v6 we could use multicast without promiscuous mode, but I would have liked confirmation from Check Point that this is the best practice.

By the way, thanks for your response.

---

The packet loss you are referring to may be due to broadcast control configured on the physical switches your ESXi servers are connected to.

Please check whether any broadcast-limiting settings are applied to the switch ports connected to the physical NICs that carry the port groups and vSwitches assigned to the ClusterXL members.
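One common behavior of broadcast (storm) control is that, within each measurement interval, broadcast frames above a configured threshold are dropped, which looks like packet loss from the VMs' point of view. The sketch below is a simplified per-second model with a hypothetical packets-per-second threshold; real switches may express the threshold as a percentage of bandwidth, bps or pps, and the exact behavior is vendor-specific.

```python
def storm_control(offered_pps: list[int], threshold_pps: int) -> list[tuple[int, int]]:
    """Return (forwarded, dropped) broadcast packets for each one-second interval.

    Simplified model: within an interval, packets beyond the threshold are dropped.
    """
    if threshold_pps < 0:
        raise ValueError("threshold must be non-negative")
    result = []
    for offered in offered_pps:
        forwarded = min(offered, threshold_pps)
        result.append((forwarded, offered - forwarded))
    return result


if __name__ == "__main__":
    offered = [200, 900, 1500, 300]  # hypothetical broadcast packets per second
    for second, (fwd, drop) in enumerate(storm_control(offered, threshold_pps=1000)):
        print(f"t={second}s forwarded={fwd} dropped={drop}")
```

Only the burst at t=2s loses packets here, which is why this kind of loss can look intermittent.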

---

Thank you, I will check this lead with the virtualization infrastructure team.

---

Hello, is there any way to avoid promiscuous mode with R80.20 or R80.30?

---

Why do you need promiscuous mode? Do you have VMAC enabled?

Also, CCP supports a unicast mode of operation as of R80.30 (it has to be configured).
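A rough way to see why a unicast exchange between members and a virtual MAC behave differently on a VMware vSwitch: the vSwitch knows the MAC assigned to each vNIC and, in this simplified model, delivers a unicast frame only to the port that owns the destination MAC, floods broadcast to every other port, and additionally delivers everything to ports in promiscuous mode. This is an illustrative model, not VMware's forwarding code; port names and MACs are hypothetical, and multicast is left out because its handling depends on the switch's multicast filtering mode.

```python
from dataclasses import dataclass

BROADCAST = "ff:ff:ff:ff:ff:ff"


@dataclass(frozen=True)
class Port:
    name: str
    vnic_mac: str            # MAC assigned to the vNIC by the hypervisor
    promiscuous: bool = False


def receivers(ports: list[Port], src: Port, dst_mac: str) -> list[str]:
    """Names of ports that receive a frame sent by `src` to `dst_mac` (simplified model)."""
    dst_mac = dst_mac.lower()
    out = []
    for port in ports:
        if port is src:
            continue  # the frame is not reflected back to its sender
        if dst_mac == BROADCAST or port.vnic_mac == dst_mac or port.promiscuous:
            out.append(port.name)
    return out


if __name__ == "__main__":
    member_a = Port("member_a", "00:50:56:00:00:0a")
    member_b = Port("member_b", "00:50:56:00:00:0b")
    other_vm = Port("other_vm", "00:50:56:00:00:0c")
    ports = [member_a, member_b, other_vm]
    virtual_mac = "02:00:00:00:00:01"  # hypothetical MAC not owned by any vNIC

    print(receivers(ports, member_a, member_b.vnic_mac))  # ['member_b']
    print(receivers(ports, member_a, BROADCAST))          # ['member_b', 'other_vm']
    print(receivers(ports, other_vm, virtual_mac))        # []
```

In this model, traffic to a peer's own vNIC MAC needs no special settings, whereas a frame addressed to a MAC that no vNIC owns only reaches a member whose port is promiscuous.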

---

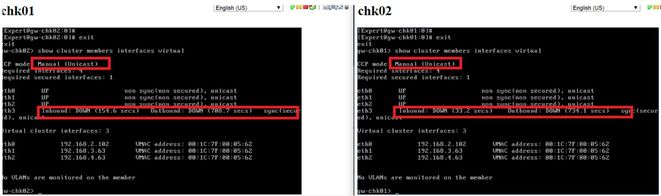

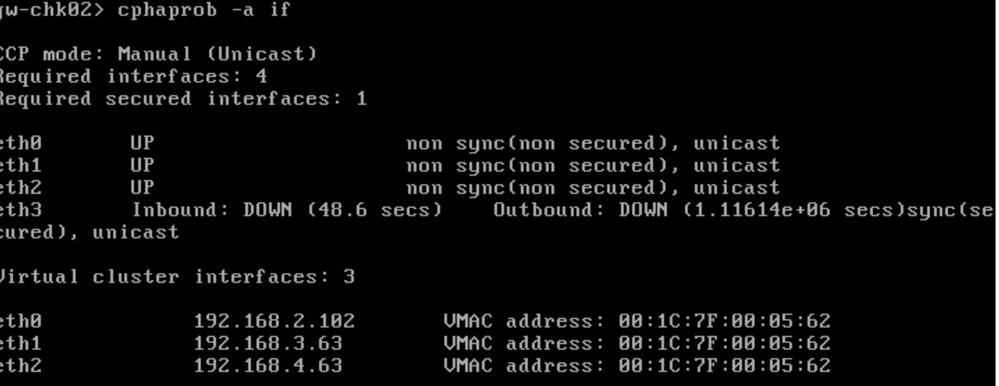

Hello, I tried with R80.20. I configured unicast mode, but the sync link is still showing down. I read that promiscuous mode is still mandatory to synchronize the cluster. If you have any material or configuration manuals, that would be great.

---

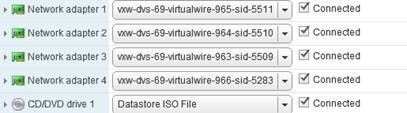

Hello, two gateways on a dvSwitch, configured as unicast; the sync interface remains down. This lab is running R80.20.

Each interface has its own portgroup.

---

@Pablo_Barriga, each interface or each pair of interfaces?

---

Each network adapter has its own portgroup.

---

@Pablo_Barriga, what I am trying to determine is whether the Sync interfaces of both cluster members share the same portgroup. They should.

---

Yes, both gateways share the same portgroup for each segment. We have IP connectivity between all the IP addresses of each connected interface, but sync is still down. I haven't tried enabling VMAC yet.

---

Are the vSECs on the same host or on two different hosts?

---

Different hosts

---

I suggest vMotioning the vSECs to the same host and verifying that it works. If it does, move them back to separate hosts and look at the portgroup/dvSwitch/physical switch to see where the traffic is getting lost.

---

Hello, both gateways are on the same host, but the cluster remains down, with VMAC enabled.

---

I would involve TAC to resolve this...

CCSP - CCSE / CCTE / CTPS / CCME / CCSM Elite / SMB Specialist

---

Hello, they told me to enable promiscuous mode; that's quite complicated because it's a vCloud infrastructure. I'm going to try to reproduce the problem with other versions.

---

I think you also need to check the port security settings; they have been the culprit for us many times.

Regards, Maarten
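If "port security settings" refers to the VMware port-group security policy (it could also mean port security on the physical switch), the relevant options are Promiscuous mode, MAC address changes and Forged transmits, each set to Accept or Reject. The sketch below is a simplified illustration of how those checks apply to a vNIC that sends or receives frames for a MAC other than its assigned one, as can happen with virtual-MAC clustering. It is not VMware code, and all MACs are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SecurityPolicy:
    """Port-group security options: True = Accept, False = Reject."""
    promiscuous: bool = False
    mac_changes: bool = False
    forged_transmits: bool = False


@dataclass(frozen=True)
class VNic:
    initial_mac: str    # MAC assigned to the adapter in the VM's configuration
    effective_mac: str  # MAC the guest OS is currently using


def outbound_allowed(policy: SecurityPolicy, nic: VNic, src_mac: str) -> bool:
    """Forged transmits = Reject: drop frames whose source MAC differs from the initial MAC."""
    return policy.forged_transmits or src_mac.lower() == nic.initial_mac.lower()


def inbound_allowed(policy: SecurityPolicy, nic: VNic, dst_mac: str) -> bool:
    """Simplified check for a frame with a unicast destination MAC."""
    if not policy.mac_changes and nic.effective_mac.lower() != nic.initial_mac.lower():
        return False  # MAC address changes = Reject: inbound frames to the adapter are dropped
    if policy.promiscuous:
        return True   # a promiscuous port also receives frames addressed to other MACs
    return dst_mac.lower() == nic.effective_mac.lower()


if __name__ == "__main__":
    nic = VNic(initial_mac="00:50:56:00:00:0a", effective_mac="00:50:56:00:00:0a")
    virtual_mac = "02:00:00:00:00:01"  # hypothetical shared/virtual MAC
    strict = SecurityPolicy()  # all three options set to Reject
    relaxed = SecurityPolicy(promiscuous=True, forged_transmits=True)
    print(outbound_allowed(strict, nic, virtual_mac))   # False
    print(outbound_allowed(relaxed, nic, virtual_mac))  # True
    print(inbound_allowed(strict, nic, virtual_mac))    # False
    print(inbound_allowed(relaxed, nic, virtual_mac))   # True
```

The point of the model is that a virtual MAC can fail in either direction: outbound on Forged transmits, inbound on Promiscuous mode.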

---

So far everything is quite secure; it looks like I'll have to use an ADC for load balancing.

---

For anyone visiting here and using VMware:

I am on my third lab. The first was done with open-source OPNsense (based on pfSense) and its own CARP/VRRP implementation. The network design remained the same throughout all three labs, and so did the VMware kit it was hosted on.

I dealt with the promiscuous-mode issue in OPNsense, where the implementation is far more finicky. It appears to have used the "virtual MAC" option, and therefore required me to create separate vSwitches just for the router MGMT interface for each network I wanted it to participate in (doubling the number of networks).

I tested this for several hours, and although I stopped short of enabling Virtual MAC on the CP cluster in my labs (because of the doubled networks, and because putting whole switches in promiscuous mode isn't my idea of fun), I can 100% confirm that cluster VIPs work on vSwitches that are not in promiscuous mode. I had both broadcast and unicast succeeding. (The cluster picked broadcast mode when the dedicated sync interfaces had no IPs assigned, and unicast when they had a /30 between them.) I'm on ESX 6.7, but I doubt that matters. It's an under-the-hood VXLAN bridging/networking thing, not inherently a VMware problem.
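For reference, a /30 contains four addresses, of which exactly two are usable hosts: one for each member's dedicated sync interface. A quick check with Python's standard `ipaddress` module; the 192.168.100.0/30 subnet is a hypothetical example, not one used in the lab above.

```python
import ipaddress

sync_net = ipaddress.ip_network("192.168.100.0/30")  # hypothetical sync subnet
hosts = [str(ip) for ip in sync_net.hosts()]

# Member 1 would take hosts[0] and member 2 hosts[1];
# the network and broadcast addresses are not assignable.
print(sync_net.num_addresses, hosts)                      # 4 ['192.168.100.1', '192.168.100.2']
print(sync_net.network_address, sync_net.broadcast_address)  # 192.168.100.0 192.168.100.3
```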


©1994-2026 Check Point Software Technologies Ltd. All rights reserved.
