NGTX in AWS

Is anyone else here running a clustered NGTX gateway in AWS? Are there any pros or cons over running on-prem on appliances?

I have a customer with a reasonably complex network, using all NGTX blades, who wants to move their core gateway and Internet breakout to AWS. Has anyone come across any gotchas that I should be aware of?

- Tags:

- aws ngtx

This should work, but the client should consider the cost of the traffic they are going to pipe through the gateway in the AWS environment.

Unlike in an on-premises implementation, in AWS you pay for the bandwidth you consume.

Take a look at this for some of the data:

Getting a Grip on Your AWS Data Transfer Costs

There is also the question of whether to use Direct Connect or VPN from on-premises to AWS, and the costs associated with that leg of the transfer.
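To make the cost point concrete, here is a rough sketch of how one might total the monthly charge across traffic legs. Everything in it is an assumption for illustration: the function names, the leg names, the volumes, and especially the per-GB rates are placeholders, and a single flat rate per leg is a simplification. Take real figures from the current AWS price list.

```python
def monthly_transfer_cost(gb_per_month: float, rate_per_gb: float) -> float:
    """Return the monthly charge for one traffic leg at a flat per-GB rate."""
    if gb_per_month < 0 or rate_per_gb < 0:
        raise ValueError("volume and rate must be non-negative")
    return gb_per_month * rate_per_gb


def total_monthly_cost(legs: dict) -> float:
    """Sum several legs, each given as (GB per month, rate per GB)."""
    return sum(monthly_transfer_cost(gb, rate) for gb, rate in legs.values())


# Placeholder figures only, not real AWS prices.
example_legs = {
    "internet_egress": (10_000.0, 0.10),  # hypothetical volume and rate
    "on_prem_link": (5_000.0, 0.02),      # hypothetical Direct Connect or VPN leg
}
example_total = total_monthly_cost(example_legs)
```

Running this with the customer's measured traffic volumes per leg gives a first-order number to compare against the on-premises bandwidth bill.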

We are not running any in prod within AWS, but here are some things I ran into when doing our labs. If any of these are incorrect, maybe someone from CP can correct me.

- Cluster failover uses the AWS API to move the gateway interfaces/IPs, so you will have more downtime than in a physical ClusterXL environment. Failover takes up to 40 seconds.

- Determine how many static NAT addresses are needed, because each AWS instance type has a limit on the number of secondary IP addresses you can have per interface. This is how NAT works with CP and AWS: you map an Elastic IP to a private IP that is assigned as a secondary IP address on the gateway's external interface.

- If you plan on managing access to AWS services such as RDS, you have to create NAT rules so that traffic from protected CP subnets traverses the firewall and reaches the AWS services. This is because you cannot turn off the SRC/DST check of an AWS service, so it drops the traffic. This applies only if an EC2 instance is on a different subnet than the AWS service it is connecting to.
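For the static NAT point above, a quick sizing check can be sketched as follows. This is a minimal sketch, not a Check Point or AWS tool: the function names are made up, it assumes the per-interface address limit includes the interface's primary private IP, and the limit itself has to be looked up for the chosen instance type.

```python
def max_static_nats(ips_per_interface: int, reserved: int = 1) -> int:
    """Secondary private IPs left for static NAT on one interface.

    `reserved` is the number of addresses already spoken for; the default
    of 1 assumes only the primary private IP (adjust for your design).
    """
    return max(ips_per_interface - reserved, 0)


def fits_on_interface(required_nats: int, ips_per_interface: int,
                      reserved: int = 1) -> bool:
    """True if the required static NATs fit on a single external interface."""
    return required_nats <= max_static_nats(ips_per_interface, reserved)


# Hypothetical numbers: 12 static NATs against a limit of 10 addresses.
needs_bigger_instance = not fits_on_interface(12, 10)
```

If the check fails, the options are a larger instance type or fewer one-to-one NAT mappings.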

If you're going to do that, why not use CloudGuard NSaaS?

CC: Tomer Sole

It may seem like an obvious thing to call out, but transitive routing isn't natively supported by AWS, so your design should consider what this means (VPNs, proxies, etc.).
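A toy model may help show what "non-transitive" means here. It is only an illustration under simplifying assumptions: the VPC names A, B and C are hypothetical, and reachability is reduced to "same VPC or directly peered", which ignores route tables and security groups.

```python
def can_route(src: str, dst: str, peerings: set) -> bool:
    """True only if src and dst are the same VPC or share a direct peering."""
    return src == dst or frozenset((src, dst)) in peerings


# A peers with B, and B peers with C, but A has no peering with C.
peerings = {frozenset(("A", "B")), frozenset(("B", "C"))}

a_to_b = can_route("A", "B", peerings)  # direct peering exists
a_to_c = can_route("A", "C", peerings)  # would need to transit B, so not allowed
```

In this model A cannot reach C through B, which is why a hub design needs something in the hub (a gateway terminating VPNs, a proxy, and so on) that traffic is explicitly routed to.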

Great point, thanks Chris Atkinson. I saw this doc, which confirms what you said: Unsupported VPC Peering Configurations - Amazon Virtual Private Cloud

I'll speak to the customer and get their AWS contractor to advise. Maybe this project is a non-starter.

As Vladimir suggests, Check Point provides templates that take this into account; hopefully one can help with your customer's use case.

We're also constantly working to simplify and evolve these based on new AWS functionalities where relevant.

CloudGuard for AWS - Transit VPC Architecture

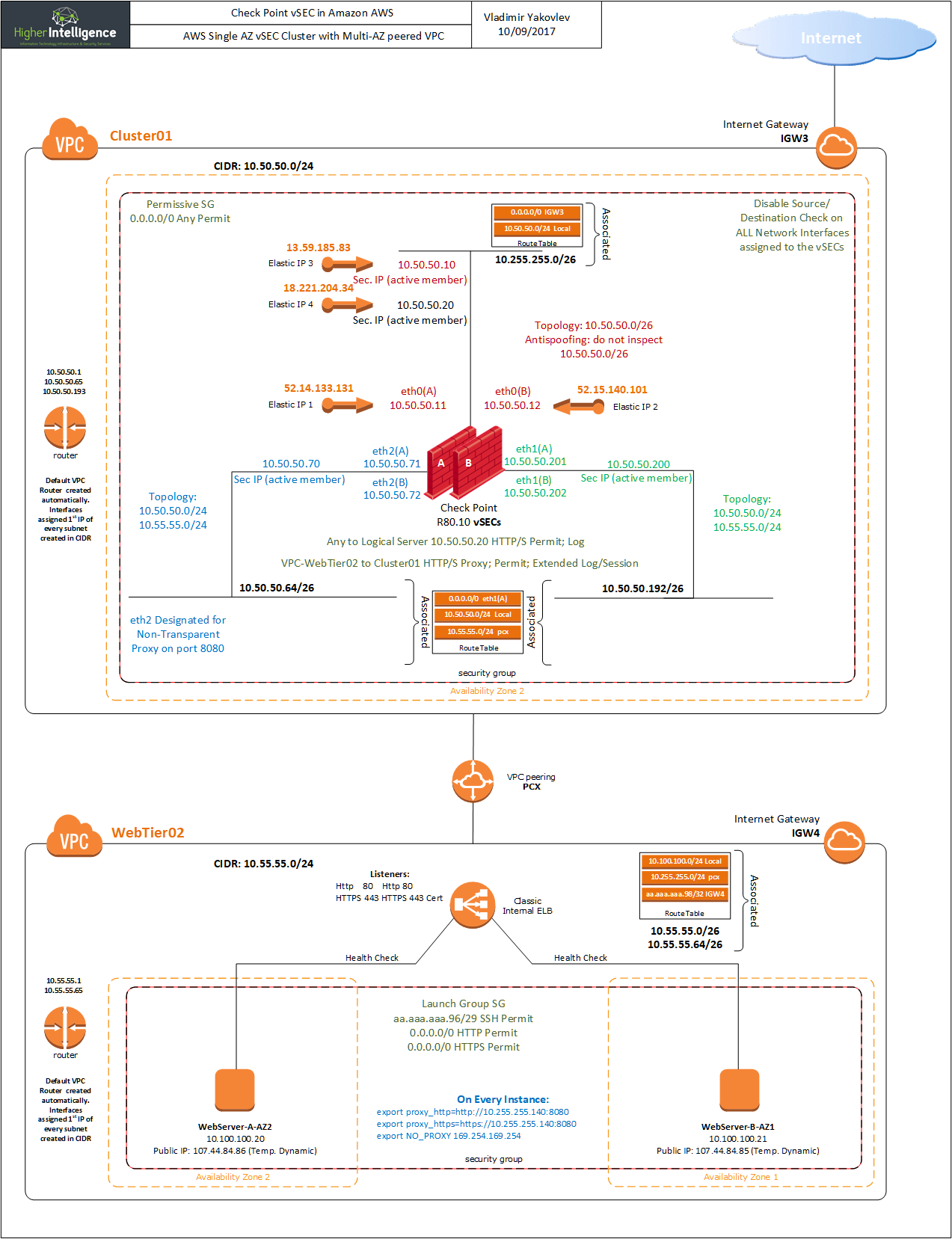

Matt Dunn, take a look at this post, CloudGuard (vSEC) deployment scenarios in AWS, and see whether any of the described scenarios can address your client's requirements.

A few of them deal specifically with the non-transitive properties of AWS.

In addition to those, there is a Check Point Transit VPC available as a CloudFormation template that addresses most of the limitations, albeit in a non-clustered but redundant multi-zone fashion. That is, your failover will be shorter than the 40 seconds seen in a clustered environment and will depend on the BGP convergence speed.

I've just spoken to the customer again briefly. We're having a whiteboard session next week with their AWS provider to thrash out a design.

From the link Vladimir Yakovlev shared before, I think the closest to our proposed design is the last diagram:

AWS will essentially be the core/hub. None of the branch sites need to communicate with each other, but they will all have Direct Connect up to AWS, and they will have one firewall in AWS that all sites go through to get to the Internet.

Apparently, in December last year AWS introduced a new transit VPC which now allows sites to route through AWS on their way to another destination (i.e. the Internet), so maybe that will be good enough to do what we need? I'll find out more next week...

Matt

The solution Amazon announced was this; it certainly has the potential to simplify things.

AWS Transit Gateway - Amazon Web Services