Interface Affinity with VSX

Hi,

I've been trying to understand this for a while now, but realised that the more I think I know the answer, the less confident I am!

Simply, under VSX, how is interface affinity achieved? For example, on a 23500, how can I assign 4 SNDs to a bond of four 10 Gbit interfaces and individual SNDs to individual 10 Gbit NICs? From reading around, I have become confused, and some sources seem to imply that all interfaces are handled by VS0. If that is the case, do I just assign the correct number of CPU cores to VS0 via cpconfig and then use 'sim affinity -s' as normal (in VS0 context)?

Or have I got it completely wrong?

Thanks,

Dave

12 Replies

Hi,

sim affinity allows you to assign one interface to one SND. Multi-Queue, on the other hand, allows you to assign multiple SNDs to one interface.

More information about Multi-Queue is in sk98348, under the "Best practices - Multi-Queue" section.

Indeed, on VSX the SNDs are under VS0, so sim affinity and Multi-Queue must be configured in the context of VS0.

Hi Ilya,

Thanks for confirming that. I'm ignoring multi-queue for the moment because I'm not sure it'll be needed. It is a 40 Gbit bond (4x10 Gbit NICs) in LACP, so I was looking to start with 1 SND per bond member and then see how well that works.

As an example: if I have a 48-core appliance running VSX with 5 VSes and (through SmartConsole) I assign each VS 8 CoreXL instances, that leaves 8 CPU cores NOT assigned to a VS. Will these automatically be used for VS0/interface affinity etc., or do I need to explicitly assign 8 cores via cpconfig?

Thanks,

Dave

Hi Dave,

You need to distinguish between kernel mode and VSX mode. In kernel mode, when we enable CoreXL, each instance is tied to a specific CPU, while in VSX an instance means a new fwk thread (a user-space process).

So on a 23500 you will have 4 SNDs by default, and the rest will be for user-space processes. That means all fwk processes from all VSs share the remaining CPUs (44 by default on a 23500).

For example on 15600:

```
[Expert@VSX_211:0]# fw ctl affinity -l
Mgmt: CPU 0
Sync: CPU 0
VS_0 fwk: CPU 2 3 4 5 6 7 8 9 10 11 12 13 14 15 18 19 20 21 22 23 24 25 26 27 28 29 30 31
VS_1 fwk: CPU 2 3 4 5 6 7 8 9 10 11 12 13 14 15 18 19 20 21 22 23 24 25 26 27 28 29 30 31
VS_2 fwk: CPU 2 3 4 5 6 7 8 9 10 11 12 13 14 15 18 19 20 21 22 23 24 25 26 27 28 29 30 31
```
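As a rough way to read the listing above, here is a small Python sketch (not a Check Point tool) that parses the `fw ctl affinity -l` text as pasted and lists the CPU IDs that carry no fwk process. The total of 32 logical CPUs is an assumption inferred from the highest CPU ID in the output, and the helper names `parse_affinity` and `cpus_without_fwk` are made up for illustration.

```python
import re

# Text copied from the 15600 example in this thread.
FWK_CPUS = "2 3 4 5 6 7 8 9 10 11 12 13 14 15 18 19 20 21 22 23 24 25 26 27 28 29 30 31"
AFFINITY_OUTPUT = "\n".join([
    "Mgmt: CPU 0",
    "Sync: CPU 0",
    f"VS_0 fwk: CPU {FWK_CPUS}",
    f"VS_1 fwk: CPU {FWK_CPUS}",
    f"VS_2 fwk: CPU {FWK_CPUS}",
])

TOTAL_CPUS = 32  # assumption: inferred from the highest CPU ID (31) in the listing


def parse_affinity(text):
    """Return {name: set of CPU IDs} for each 'name: CPU a b c' line."""
    result = {}
    for line in text.splitlines():
        match = re.match(r"^(.+?):\s*CPU\s+([\d\s]+)$", line.strip())
        if match:
            result[match.group(1)] = {int(n) for n in match.group(2).split()}
    return result


def cpus_without_fwk(affinity, total_cpus):
    """Return the sorted CPU IDs that no 'fwk' entry is allowed to run on."""
    fwk = set()
    for name, cpus in affinity.items():
        if name.endswith("fwk"):
            fwk |= cpus
    return sorted(set(range(total_cpus)) - fwk)


affinity = parse_affinity(AFFINITY_OUTPUT)
print(cpus_without_fwk(affinity, TOTAL_CPUS))  # [0, 1, 16, 17]
```

On this listing the result is CPUs 0, 1, 16 and 17, which are presumably the four cores left for SND on that 15600.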

Hi Ilya,

Ah, I think I understand.

Example 1:

So, if I have a 23500 with a VS0 (with a single coreXL instance) and a single 'data' VS with 8 coreXL instances, the default assignment would be:

1) 4 CPUs for SND

2) 44 CPUs for VSes (in this case 1 will be used for VS0 and 8 used for VS2 and 35 CPUs 'free')

Correct?

Example 2:

I want to have 6 SNDs - 1 for Mgmt; 1 for Sync and 4 for a 4x10Gbit bond. I also want 2 coreXL instances for VS0; 8 coreXL instances for VS2 and 10 coreXL instances for VS3.

To achieve this, I...

1) In VS0 context, I use cpconfig to assign 6 cores to VS0.

2) I then use sim affinity -s to assign these cores to the individual interfaces.

3) Under each VS in SmartConsole, I set the required number of CoreXL instances, and these are shared between the 42 remaining cores.

To me, this sounds wrong.

I guess my confusion is that - to assign SNDs on a non-VSX platform - I simply assign CPUs to CoreXL in cpconfig and all left over CPUs are available for SND. I do not know how to 'guarantee' SND cores in VSX.

Any help really appreciated 🙂

Dave

Hi Dave,

You are still confused 🙂

I will try to explain: FW instances in VSX are processes that are spread across all CPUs that are not SNDs.

For example, I have a VS with 4 instances. As you can see below, each instance can run on any of the non-SND cores:

```
[Expert@VSX_211:1]# fw ctl multik stat
ID | Active | CPU   | Connections | Peak
----------------------------------------------
 0 | Yes    | 2-15+ |           3 |   21
 1 | Yes    | 2-15+ |           1 |    4
 2 | Yes    | 2-15+ |           0 |    3
 3 | Yes    | 2-15+ |           2 |    6
```

To increase the number of SNDs, you need to set manual affinity for all VSs.

In my case I have VS0 + 2 VSs, so to get more SNDs I do the following:

```
[Expert@VSX_211:0]# fw ctl affinity -s -d -vsid 0-2 -cpu 10-15
There are configured processes/FWK instances
(y) will override all currently configured affinity and erase the configuration files
(n) will set affinity only for unconfigured processes/threads
Do you want to override existing configurations (y/n) ? y
VDevice 0-2 : CPU 10 11 12 13 14 15 - set successfully
```

The newly freed CPUs may now be configured as SNDs by assigning interface affinity to them.
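To make the effect of that `-cpu 10-15` argument concrete, here is a minimal Python sketch of the set arithmetic only. It assumes the same 32 logical CPUs as the 15600 listing earlier in the thread, and `expand_cpu_range` is a hypothetical helper, not part of any Check Point tooling. It does not say which of the remaining CPUs are sensible SND choices.

```python
def expand_cpu_range(spec):
    """Expand a spec such as '10-15' or '3' into a list of CPU IDs."""
    if "-" in spec:
        first, last = (int(part) for part in spec.split("-"))
        return list(range(first, last + 1))
    return [int(spec)]


TOTAL_CPUS = 32  # assumption: same box as the 15600 example

# CPUs that the fwk processes of VS 0-2 are restricted to.
fwk_cpus = expand_cpu_range("10-15")

# Everything else no longer runs fwk processes.
not_fwk = [cpu for cpu in range(TOTAL_CPUS) if cpu not in fwk_cpus]

print(fwk_cpus)      # [10, 11, 12, 13, 14, 15]
print(len(not_fwk))  # 26
```

The first line of output matches the `VDevice 0-2 : CPU 10 11 12 13 14 15` confirmation in the transcript.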

I hope it's clear now 🙂

Hi Ilya,

Ah, now I understand! That was the missing piece: in order to change the default number of SNDs that are available, I need to use fw ctl affinity to reduce the total number of cores for VSes. Whatever is left is a potential SND candidate.

To make sure I do understand, here is an extreme example!

23500 with 48 cores. I want three VSes: VS0 with 4 CoreXL instances, VS1 with 10 and VS2 with 10. So, I could assign 24 cores to CoreXL...

fw ctl affinity -s -d -vsid 0-2 -cpu 24-47

Leaving 24 CPUs 'free' and then use sim affinity commands to assign individual CPU cores to interfaces.

Yes, 24 cores for SND is pointless and even with multi-queue unlikely to ever be needed, but I could do it?

Oh, I suppose that there is one other question: You mentioned that the CoreXL cores are spread between the VSes. So in my example above each CPU core 24 to 47 would have an active instance running, as the total number of CoreXL instances is 4+10+10. But if I had VS1 only configured for 5 CoreXL instances, that would be 4+5+10, so there would be 24 possible CoreXL instances, but only 19 used CoreXL instances?
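The numbers in this example can be checked with a few lines of Python. This is only arithmetic under the assumption that CPU IDs are zero-based (0 to 47 on a 48-core appliance), as the CPU 0 entries in the `fw ctl affinity -l` listing earlier in the thread suggest. The dictionary names are hypothetical.

```python
TOTAL_CPUS = 48  # 23500 in this example

# Assumption: CPU IDs are zero-based, so the upper half is 24..47.
fwk_cpus = list(range(24, 48))

# CoreXL instances per VS in the two variants discussed above.
instances_full = {"VS0": 4, "VS1": 10, "VS2": 10}
instances_reduced = {"VS0": 4, "VS1": 5, "VS2": 10}

print(len(fwk_cpus))                    # 24 CPUs available to fwk processes
print(TOTAL_CPUS - len(fwk_cpus))       # 24 CPUs left over
print(sum(instances_full.values()))     # 24 instances
print(sum(instances_reduced.values()))  # 19 instances
```

Since fwk instances are processes that float across all the CPUs they are allowed on, the instance total does not have to equal the CPU count.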

After reading Michael Endrizzi's tuning guide, I can see that it is possible to assign different VS CoreXL instances to individual CPUs, but it seems like there is usually no point in doing so? You'd likely end up breaking things more than tuning them.

Thanks,

Dave

Yes Dave, you understand the assignment the correct way.

Assigning VS CoreXL instances to individual CPUs gives you the possibility to separate the VSs by CPU. If you do this for all the CoreXL instances of a VS, one highly utilized VS can't affect another one.

Kaspars did a nice presentation at CPX on VSX in real life and explained the CPU distribution very well. Have a look at this too:

VSX Performance Optimization from Kaspars Zibarts

Wolfgang

Hi Wolfgang,

Good, I finally got there 🙂

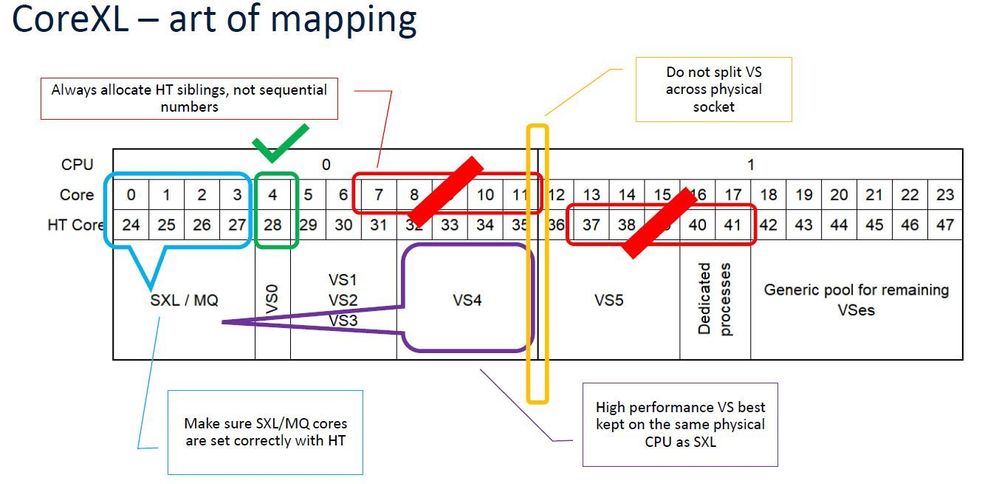

I had a look at the PDF you suggested. Unfortunately it was obviously written to support the presentation (which looks like it was a good one), so I'm missing a lot of the details. The slide below caught my attention:

Kaspars mentions two interesting points: (1) allocate sibling HTs to VSes and (2) do not split a VS over different CPUs. I assume that this is to ensure that cache data remains available to each core of the VS where possible?

I would have thought that you need to be careful about assigning sibling HTs to SND. On the positive side, in the example above, if data arrives on eth1 and exits on eth2, then assigning core 0 to eth1 and core 24 to eth2 makes sense as the same cache is used. But for multi-queue, would it not be better to use non-sibling cores for each queue, as this assigns a physical compute resource to each queue? I would have thought sibling HTs and multi-queue would be bad, not good?

Also, in Check Point Land, is it always physical cores numbered first then HT cores, so on 15xxx it would be physical 0-15, HT 16-31?
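If the numbering described here holds (physical cores first, then their hyperthread siblings, which this thread does not confirm), the sibling of a logical CPU can be worked out as below. This is a Python sketch under that assumption only, and `ht_sibling` is a made-up helper name.

```python
def ht_sibling(cpu, total_logical_cpus):
    """Return the hyperthread sibling of a logical CPU.

    Assumes physical cores are numbered 0..N/2-1 and their siblings
    N/2..N-1, where N is the number of logical CPUs.
    """
    if not 0 <= cpu < total_logical_cpus:
        raise ValueError(f"CPU {cpu} is outside 0..{total_logical_cpus - 1}")
    half = total_logical_cpus // 2
    return cpu + half if cpu < half else cpu - half


print(ht_sibling(0, 48))   # 24
print(ht_sibling(0, 32))   # 16
print(ht_sibling(17, 32))  # 1
```

Under this assumption, core 0 and core 24 are siblings on a 48-CPU box, and on the 32-CPU listing earlier in the thread the CPUs without fwk (0, 1, 16, 17) form two sibling pairs.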

Also, why keep a high-utilisation VS on the same CPU as (presumably) its own SNDs? Again, is this simply to avoid moving data from one cache to another?

Thanks,

Dave

Hello Dave,

Yes, it is a good starting point to have one SND for every NIC in your 4-port bond.

But if you are really using 10 Gbit (meaning a lot of traffic is passing through your gateway), you should think about Multi-Queue. With Multi-Queue you can assign more than one CPU to a NIC.

Maybe you will have to change the default relationship between NICs and CPUs. The current assignment is shown via "fw ctl affinity -l". You should avoid having SNDs and fw_workers running on the same CPU.
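One way to check for that kind of overlap is to compare the interface CPUs with the fwk CPUs from an affinity listing. The Python sketch below does this with hand-built dictionaries. The fwk values mirror the 15600 listing earlier in the thread, while the `eth1` entry on CPU 2 is a hypothetical misconfiguration added only to show a hit, and `find_shared_cpus` is a made-up name.

```python
def find_shared_cpus(interface_cpus, fwk_cpus):
    """Map each interface to the CPUs it shares with any fwk process."""
    all_fwk = set().union(*fwk_cpus.values())
    shared = {}
    for name, cpus in interface_cpus.items():
        overlap = sorted(cpus & all_fwk)
        if overlap:
            shared[name] = overlap
    return shared


# fwk affinity as in the 15600 listing: CPUs 2-15 and 18-31 for every VS.
fwk_range = set(range(2, 16)) | set(range(18, 32))
fwk_cpus = {"VS_0": fwk_range, "VS_1": fwk_range, "VS_2": fwk_range}

# Mgmt and Sync are from the listing; eth1 on CPU 2 is hypothetical.
interface_cpus = {"Mgmt": {0}, "Sync": {0}, "eth1": {2}}

print(find_shared_cpus(interface_cpus, fwk_cpus))  # {'eth1': [2]}
```

An empty result would mean no interface is pinned to a CPU that also runs fwk processes.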

You'll find all the best recommendations in Heiko's link collection for performance tuning: High Performance Gateways and Tuning

Wolfgang

Hi Wolfgang,

Yes, you're absolutely right, but I think I'm happy with configuring multi-queue, as I understand it is the same on both VSX and 'normal' gateways. I'm ignoring multi-queue in this thread as I didn't want it to confuse the VSX question.

Thanks,

Dave

Hi,

I've been doing some reading on these topics and can't find the answer to one question:

If I enable multi-queue on a 10 Gbit interface and set it to a queue count of two (cpmq set rx_num ixgbe 2), that should give me two rx queues on different CPUs. But I want to pin these rx queues to specific CPUs (for example CPU 1 and CPU 21), as they are hyperthreads.

I cannot find any way to do this. Can someone help please?

Thanks,

Dave

IMHO it should be assigned automatically. Please post the output of cpmq get -vv.
