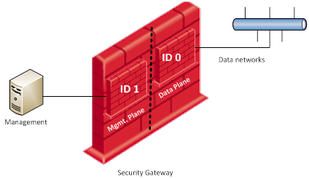

New! R80.30 feature: Management Data Plane Separation (for gateways with 4+ cores)

I really like the all-new R80.30 feature for separating management traffic from data traffic via

- Routing Separation and

- Resource Separation

as described in sk138672.

Did anyone test this already?

3 Solutions

Accepted Solutions

Check the SK for the section on controlling the number of CPUs for Resource Separation.

Standalone or management deployments are not supported with MDPS.

Hi Aviad,

My question is whether it makes sense to open a service request.

sk138672 refers only to Quantum appliances. I am asking because on Open Servers I can see that the commands exist, but maybe they do not work.

If the SK refers only to Quantum appliances, I am afraid that TAC will close the SR just saying that ...

But I don't see any reason why this should not be possible on Open Servers ...

98 Replies

It is about time! It has finally arrived.

I will test it soon and report back 🙂

Jerry

About time! This is a long-overdue feature!

"Use of logical interfaces is not suppoted on management interface (Alias, Bridge, VPN Tunnel, 6in4 Tunnel, PPPoE, Bond, VLAN)"

1. It is a pity. This is a showstopper for us.

2. There is a typo (suppoted -> supported).

Kind regards,

Jozko Mrkvicka

Very interesting information.

I will test it tomorrow in our lab :-)

Thank you!

➜ CCSM Elite, CCME, CCTE

With Resource Separation, the CPU load should not rise when installing the policy. Is that correct?

➜ CCSM Elite, CCME, CCTE

Hi Dameon,

Do I need a license for the management instance or lose a core license?

Regards

Heiko

➜ CCSM Elite, CCME, CCTE

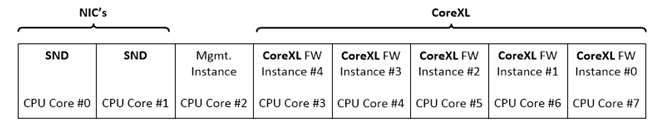

I assume this dedicated CPU core is treated like any other core: you need a license for it. A minimum of 8 CPU cores is required to use this feature, which means your Open Server license must cover at least 8 cores. Beyond that, there are no special licensing requirements.

So anything below 5900 will not be able to take advantage of it...

Sounds about right.

Danny, you may want to change the heading by adding "for gateways with 8 or more cores".

Otherwise it leads to unwarranted euphoria 🙂

Added.

I do have a concern about the best practices from the article: https://supportcenter.checkpoint.com/supportcenter/portal?eventSubmit_doGoviewsolutiondetails=&solut...

"Connectivity to the LDAP and similar servers from the Gateway should be done via the Data Plane."

I've always been told that only the management/control plane of a security gateway should be making or allowing connections to the device. The data plane should not allow or make connections; it should only play the role of traffic cop.

What are everyone's thoughts on this?

FYI, the minimum was recently changed to 4 cores.

Thanks for the info. I changed the thread title from 8 to 4 CPU cores.

I tested it and it worked successfully (resource and routing separation both enabled).

It is not possible to use the management IP for Identity Collector, and I think it should be.

Could you please add a service/task for this issue?

    XXXXXXXXX:TACP-0> show mdps state
    Management Data plane separation:
    Routing plane: Enabled
    Dedicated resource: Enabled (FW-Instance [39,38], CPU [4,28])
    Management interface: Mgmt
    Sync interface: Sync
    Management plane configured routes:
    default via X.X.X.X

    XXXXXXXXX:TACP-0> show mdps tasks
    Management plane tasks:
    Service: cpri_d
    Service: ntpd
    Service: sshd
    Service: syslog
    Process: cloningd
    Process: httpd2
    Process: ntpd
    Process: snmpd
    Process: snmpmonitor
    Port;Protocol: 256;tcp
    Port;Protocol: 257;tcp
    Port;Protocol: 2010;tcp
    Port;Protocol: 5432;tcp
    Port;Protocol: 18181;tcp
    Port;Protocol: 18183;tcp
    Port;Protocol: 18184;tcp
    Port;Protocol: 18187;tcp
    Port;Protocol: 18191;tcp
    Port;Protocol: 18192;tcp
    Port;Protocol: 18195;tcp
    Port;Protocol: 18210;tcp
    Port;Protocol: 18211;tcp
    Port;Protocol: 18264;tcp
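
For anyone who wants to audit which services, processes and ports sit on the management plane, the task list above is easy to parse offline. The following is only a rough sketch: it assumes the output keeps the `Kind: value` layout shown in this post, and the function name `parse_mdps_tasks` and the abbreviated sample are made up for illustration (they are not part of any Check Point tooling).

```python
from collections import defaultdict

# Abbreviated sample in the same shape as the output quoted in this post.
SAMPLE = """\
Management plane tasks:
Service: cpri_d
Service: sshd
Process: httpd2
Port;Protocol: 256;tcp
Port;Protocol: 18191;tcp
"""


def parse_mdps_tasks(text):
    """Group 'show mdps tasks' lines into services, processes and ports."""
    tasks = defaultdict(list)
    for raw in text.splitlines():
        line = raw.strip()
        kind, sep, value = line.partition(": ")
        if not sep:
            # Blank lines, the CLI prompt and the section header carry no "Kind: value" pair.
            continue
        if kind == "Port;Protocol":
            port, _, proto = value.partition(";")
            tasks["ports"].append((int(port), proto))
        else:
            tasks[kind.lower()].append(value)
    return dict(tasks)


tasks = parse_mdps_tasks(SAMPLE)
print(tasks["service"])
print(tasks["ports"])
```

Saving the real output to a file and passing its contents to the function gives a dictionary that can be compared between cluster members or before and after a change.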

I tried MDPS in the lab. I have two issues so far:

1. I can't make backup traffic go over the Mgmt interface. It attempts SSH connections on one of the data interfaces instead, even if I try to back up the gateway to a management appliance.

2. TACACS traffic on port 49 goes over the Mgmt interface during the initial login to the gateway, which is expected. Then, for some reason, it attempts to go over a data interface during the second authentication, when I try to escalate my privileges from TACP-0 to TACP-15.

These two issues are showstoppers for us to deploy this feature.

We have exactly the same issue with R80.40 and the latest ongoing JHF:

1. We plan to have Identity Collector communicate with different firewalls in different zones, and the only common network is the management network

2. We also can't apply a JHF using the CPUSE GUI, only the CLI

3. The default route occasionally disappears after a reboot

4. Only one sync interface is supported

Any light at the end of the tunnel? I wish it would work more like a routing domain, with more flexibility.

Hi Alex,

Can you share the output of the following, please?

'dbget -rv mdps'

Also, why do you need more than one interface for Sync? You can use link aggregation (a bond) instead.

Hi Aviad,

We wanted to have another VLAN as a second sync; we already have the dedicated sync as a bond.

Here is the output you requested. As you can see, we configured both "0.0.0.0" and "default", because the default route used to drop off occasionally.

It would be good to understand whether Identity Collector can work with the management plane rather than the data plane. This is the most important thing for us; the rest looks like bugs that will get fixed eventually.

    [Expert@BNZ_WDC3-FW1-Access-011:0]# dbget -rv mdps
    mdps:interface:management eth0
    mdps:interface:sync bond0
    mdps:route:0.0.0.0/0:nexthop:10.x.x.x t
    mdps:route:default:nexthop:10.x.x.x t

Thanks
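
The `dbget -rv mdps` output above can be turned into a small structure to check which interfaces and routes the management plane actually has, for example to spot a missing default route after a reboot. This is a minimal sketch that assumes the key layout seen in this post (`mdps:interface:<role> <name>` and `mdps:route:<destination>:nexthop:<address> t`) and IPv4 next hops only; `parse_mdps_db` is a hypothetical helper name, not a Check Point utility.

```python
# Sample copied from the output quoted in this post (addresses already masked).
SAMPLE = """\
mdps:interface:management eth0
mdps:interface:sync bond0
mdps:route:0.0.0.0/0:nexthop:10.x.x.x t
mdps:route:default:nexthop:10.x.x.x t
"""


def parse_mdps_db(text):
    """Collect MDPS interfaces and routes from 'dbget -rv mdps' style lines."""
    config = {"interfaces": {}, "routes": {}}
    for raw in text.splitlines():
        fields = raw.split()
        if len(fields) != 2 or not fields[0].startswith("mdps:"):
            continue  # skip prompts, blank lines and anything unexpected
        key, value = fields
        parts = key.split(":")
        if parts[1] == "interface" and len(parts) == 3:
            # mdps:interface:<role> <interface name>
            config["interfaces"][parts[2]] = value
        elif parts[1] == "route" and len(parts) == 5 and parts[3] == "nexthop":
            # mdps:route:<destination>:nexthop:<address> t
            config["routes"][parts[2]] = parts[4]
    return config


config = parse_mdps_db(SAMPLE)
print(config["interfaces"])
print("default route present:", "default" in config["routes"])
```

Running this periodically against saved output would at least flag the disappearing default route described above, although it does nothing to explain why the route goes missing.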

Please move clish to the mplane environment:

'set mdps environment mplane'

Alternatively you can move confd to mplane permanently via 'add mdps task process confd' (requires reboot)

Hi Ramazan,

Currently this is a limitation, which is documented in the SK. I would suggest consulting the Solution Center.

Hi everyone,

I have this in production with an R80.20 gateway and JHF 156. It works well so far, except that the customer's monitoring tool isn't able to discover data plane interfaces and counters, even if I follow sk138672 and use the "special community string" (..._dplane). I hope this will be gone with the next major upgrade. 😐

Does anyone here have other ideas and/or experience with MDPS and interface monitoring using SNMP? Could it help to disable resource separation?

Thanks

Hi dj0Nz, I strongly recommend using R80.30.

Hi,

Just a point of clarification because it's not 100% clear in the SK, is this supported on R80.30 on both 2.6 and 3.10 kernels or only on 3.10? I interpret the minimum requirements as requiring JHF Take 136 if using kernel 3.10 but because there is no mention of 2.6 it's unclear if the original R80.30 GA supports this or if there is no support on 2.6.

Thanks and Regards,

Ben

Hi Ben,

MDPS is supported on R80.30 (both the 2.6 and 3.10 kernels).

For 3.10, JHF Take 136 is required.

Why is the special community "community+_dplane." required? Is it not possible to show both data plane and management OIDs? If not, wouldn't it make more sense to show the data plane OIDs and require a special community for the management plane? At the end of the day, in most cases you want to go through the management plane to monitor the data plane OIDs, no?

In sk138672, the best practices recommend running LDAP and similar services through the data plane. Why? I think LDAP, TACACS, etc. are about management, aren't they?

I wonder if it makes sense to add the TACACS process or TACACS service to the mplane? add mdps task ...

Hi @Luis_Miguel_Mig, thank you for your feedback.

There is another option with SNMP where you don't need to put _dplane in the community name: use the 1.4 prefix to get the data plane OID.

For example, 1.3.6.1.2.1.2.2.1.2 is the OID for the interface description; if you use 1.4.6.1.2.1.2.2.1.2 instead, the result is returned from the data plane.

Regarding your second comment: this is currently a limitation, and R&D is working on a solution to support such a configuration.
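
If a monitoring tool needs its OID list or community string rewritten for the data plane, the two options mentioned in this thread (the 1.4 prefix and the _dplane community suffix) can be scripted. A small sketch follows; it assumes the substitution is purely textual, as the example in this reply suggests, and the helper names and the `public` community are made up for illustration.

```python
def to_data_plane_oid(oid):
    """Rewrite a standard 1.3.x OID to the 1.4.x form described in this thread."""
    parts = oid.strip().lstrip(".").split(".")
    if parts[:2] != ["1", "3"]:
        raise ValueError(f"expected an OID starting with 1.3, got {oid!r}")
    return ".".join(["1", "4"] + parts[2:])


def to_data_plane_community(community):
    """Append the _dplane suffix mentioned for the special community string."""
    return community + "_dplane"


# Interface description OID from the example in this reply.
print(to_data_plane_oid("1.3.6.1.2.1.2.2.1.2"))  # prints 1.4.6.1.2.1.2.2.1.2
print(to_data_plane_community("public"))         # "public" is a placeholder community
```

Only one of the two is needed for a given query: either keep the normal community and rewrite the OID, or keep the OID and use the suffixed community.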

Thanks Aviad,

I don't know what the problem with the data plane OIDs is, but I was wondering whether Check Point would consider keeping the data plane OIDs on the standard community and OID numbers, and perhaps changing the management plane OIDs if required, in the next Gaia releases?

Do you think the LDAP/TACACS solution will be available in the next Jumbo? I am working in a lab/test environment at the moment but planning to put it in production soon.

Hi @Luis_Miguel_Mig, you are welcome to get in touch with the Solution Center in order to get such a solution.
