Very high RX drops on R80.40 on ESXi

We’ve discovered what we believe is most likely a feature in the Linux kernel used in R80.40. In a couple of ESXi environments R80.40 devices (SmartCenter and gateways) are showing very high numbers of RX drops on some interfaces. By very I mean as high as 30% (yes, three zero %). Normally RX drops would be a real cause for concern at 1-2%.

In a home lab I’ve played with a few things like:

- Changing the VM NIC from the (R80.40 CloudGuard management image) default of e1000 to VMXNET3 – seemed to make it worse

- Increasing the RX ring buffer from the default 256 to 1024 – no difference at all

- ethtool -S <interface> shows no errors

There’s a Red Hat article (https://access.redhat.com/solutions/657483) which suggests that this is due to new kernel code that also counts other discarded traffic, such as IPv6 frames, as drops. Based on the ethtool output, these are not typical errors.

Has anyone else seen this? Can we get a clearer answer on what is going on and whether it is an issue? If this is the new “normal” it would be very useful to update sk61922 to cover this specifically for R80.40. I am not sure whether it is also an issue with R80.30 with the 3.10 kernel – I am guessing not as it would have been reported before now.

The following is from a lab SmartCenter running a single gateway - so not heavily loaded:

ifconfig

    eth0 Link encap:Ethernet HWaddr 00:0C:29:F5:6D:8A
         inet addr:a.b.c.d Bcast:a.b.c.255 Mask:255.255.255.0
         UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1
         RX packets:1108123 errors:0 dropped:329773 overruns:0 frame:0
         TX packets:1648956 errors:0 dropped:0 overruns:0 carrier:0
         collisions:0 txqueuelen:1000
         RX bytes:791902533 (755.2 MiB) TX bytes:1630948344 (1.5 GiB)

ethtool -S

    NIC statistics:
         rx_packets: 1108126
         tx_packets: 1648971
         rx_bytes: 796332181
         tx_bytes: 1630950498
         rx_broadcast: 0
         tx_broadcast: 0
         rx_multicast: 0
         tx_multicast: 0
         rx_errors: 0
         tx_errors: 0
         tx_dropped: 0
         multicast: 0
         collisions: 0
         rx_length_errors: 0
         rx_over_errors: 0
         rx_crc_errors: 0
         rx_frame_errors: 0
         rx_no_buffer_count: 0
         rx_missed_errors: 0
         tx_aborted_errors: 0
         tx_carrier_errors: 0
         tx_fifo_errors: 0
         tx_heartbeat_errors: 0
         tx_window_errors: 0
         tx_abort_late_coll: 0
         tx_deferred_ok: 0
         tx_single_coll_ok: 0
         tx_multi_coll_ok: 0
         tx_timeout_count: 0
         tx_restart_queue: 0
         rx_long_length_errors: 0
         rx_short_length_errors: 0
         rx_align_errors: 0
         tx_tcp_seg_good: 0
         tx_tcp_seg_failed: 0
         rx_flow_control_xon: 0
         rx_flow_control_xoff: 0
         tx_flow_control_xon: 0
         tx_flow_control_xoff: 0
         rx_long_byte_count: 796332181
         rx_csum_offload_good: 1100864
         rx_csum_offload_errors: 0
         alloc_rx_buff_failed: 0
         tx_smbus: 0
         rx_smbus: 0
         dropped_smbus: 0
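For anyone wanting to reproduce the percentage, here is a minimal Python sketch that pulls the counters out of the RX line shown in the ifconfig output and expresses drops as a share of RX packets. The function name `rx_drop_percent` is made up for illustration, and the regular expression assumes the older net-tools ifconfig layout seen in this post; newer ifconfig builds print the counters differently.

```python
import re


def rx_drop_percent(ifconfig_text: str) -> float:
    """Return dropped RX frames as a percentage of RX packets.

    Assumes the older ifconfig layout, e.g.
    'RX packets:N errors:N dropped:N overruns:N frame:N'.
    """
    match = re.search(r"RX packets:(\d+)\s+errors:\d+\s+dropped:(\d+)", ifconfig_text)
    if match is None:
        raise ValueError("no 'RX packets' line found")
    packets, dropped = (int(group) for group in match.groups())
    return 100.0 * dropped / packets if packets else 0.0


# RX line copied from the lab SmartCenter output in this post.
sample = "RX packets:1108123 errors:0 dropped:329773 overruns:0 frame:0"
print(f"{rx_drop_percent(sample):.1f}% of RX packets dropped")
```

With the lab numbers this comes out just under 30%, which matches the figure quoted at the top of the post.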

Your ifconfig and ethtool counters are not in agreement. I would trust the ethtool counters over ifconfig (which is a deprecated command anyway), and ethtool is saying that RX "no buffer" and "misses" (which correlate to RX-DRP) are both zero. What does the command ip -s link show eth0 display? No other ethtool counters show that interface struggling whatsoever.

now available at maxpowerfirewalls.com

The output of " ip -s link show eth0 " exactly matches the ifconfig output (dropped packets are the same, errors are both zero).

I'm not seeing performance issues, but showing a high number of dropped packets is new with R80.40. As per my original post, this could simply be the new kernel reporting of other drops (e.g. IPv6 etc) as shown in the Red Hat page.

I've changed to VMXNET3 again and (for whatever reason) it is now showing much lower drops - around 1%.

We've noticed this on both ESXi 7.0 and 6.0.

The "other drops" you are referring to are probably non-IPv4 packets being discarded by the Ethernet driver. Does the incrementing of RX-DRP stop for as long as you are actively running a tcpdump? See the following from my book:

RX-DRP Culprit 1: Unknown or Undesired Protocol Type

In every Ethernet frame is a header field called “EtherType”. This field specifies the OSI Layer 3 protocol that the Ethernet frame is carrying. A very common value for this header field is 0x0800, which indicates that the frame is carrying an Internet Protocol version 4 (IPv4) packet. Look at this excerpt from Stage 6 of “A Millisecond in the life of a frame”:

Stage 6: At a later time the CPU begins SoftIRQ processing and looks in the ring buffer. If a descriptor is present, the CPU retrieves the frame from the associated receive socket buffer, clears the descriptor referencing the frame in the ring buffer, and sends the frame to all “registered receivers” which will be the SecureXL acceleration driver. If a tcpdump capture is currently running, libpcap will also be a “registered receiver” in that case and get a copy of the frame as well. The SoftIRQ processing continues until all ring buffers are completely emptied, or various packet count or time limits have been reached.

During hardware interrupt processing, the NIC driver will examine the EtherType field and verify there is a “registered receiver” present for the protocol specified in the frame header. If there is not, the frame is discarded and RX-DRP is incremented.

Example: an Ethernet frame arrives with an EtherType of 0x86dd indicating the presence of IPv6 in the Ethernet frame. If IPv6 has not been enabled on the firewall (it is off by default), the frame will be discarded by the NIC driver and RX-DRP incremented.

What other protocols are known to cause this effect in the real world? Let’s take a look at a brief sampling of other possible rogue EtherTypes you may see, that is by no means complete:

- Appletalk (0x809b)

- IPX (0x8137 or 0x8138)

- Ethernet Flow Control (0x8808) if NIC flow control is disabled

- Jumbo Frames (0x8870) if the firewall is not configured to process jumbo frames
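To make the EtherType field concrete, here is a small Python sketch that reads it from the first 14 bytes of a frame and looks it up in a table built from the values mentioned in this excerpt. It is only an illustration: the function names and the sample frame are invented, and it assumes an untagged Ethernet II frame (an 802.1Q VLAN tag would shift the field).

```python
import struct

# EtherType values named in the excerpt, plus IPv4 and IPv6.
ETHERTYPES = {
    0x0800: "IPv4",
    0x86DD: "IPv6",
    0x809B: "AppleTalk",
    0x8137: "IPX",
    0x8138: "IPX",
    0x8808: "Ethernet Flow Control",
    0x8870: "Jumbo Frames",
}


def ethertype_of(frame: bytes) -> int:
    """Return the 16-bit EtherType of an untagged Ethernet II frame."""
    if len(frame) < 14:
        raise ValueError("frame shorter than an Ethernet header")
    # Header layout: 6-byte destination MAC, 6-byte source MAC, 2-byte EtherType.
    _dst, _src, ethertype = struct.unpack("!6s6sH", frame[:14])
    return ethertype


def describe(frame: bytes) -> str:
    """Format the EtherType as hex plus a name, or 'Unknown' if not in the table."""
    value = ethertype_of(frame)
    return f"0x{value:04x} {ETHERTYPES.get(value, 'Unknown')}"


# Hypothetical frame: broadcast destination, made-up source MAC, IPv6 EtherType.
frame = bytes.fromhex("ffffffffffff" "000c29000001" "86dd") + b"\x00" * 46
print(describe(frame))
```

Anything that comes back as "Unknown", or as a protocol the firewall has no handler for, is a candidate for the RX-DRP behaviour described here.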

The dropping of these protocols for which there is no “registered receiver” does cause a very small amount of overhead on the firewall during hardware interrupt processing, but unless the number of frames discarded in this way exceeds 0.1% of all inbound packets, you probably shouldn’t worry too much about it. An easy way to confirm that the lack of a registered receiver is the cause of RX-DRPs is to perform the following test:

1. In an SSH or terminal window, run watch -d netstat -ni and confirm the constant incrementing of RX-DRP on (interface).

2. In a second SSH session, run tcpdump -ni (interface) host 1.1.1.1

Does the near constant incrementing of RX-DRP on that interface suddenly stop as long as the tcpdump is still running, and resume when the tcpdump is stopped? If so, the lack of a registered receiver is indeed the cause of the RX-DRPs. The specified filter expression (host 1.1.1.1 in our example) does not actually matter, since libpcap will register to receive all protocols on behalf of the running tcpdump, and then filter the packets based on the provided tcpdump expression. So as long as the tcpdump is running, there is essentially a registered receiver for everything.
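The test above can also be done with numbers instead of watching the screen. The Python sketch below is one possible way, not something from the book: it samples the interface's drop counter over an interval and compares a run without tcpdump to a run with it. It assumes the Linux sysfs counter /sys/class/net/(interface)/statistics/rx_dropped tracks the RX-DRP column closely enough for this purpose, and all function names are invented for illustration.

```python
import time
from pathlib import Path


def read_rx_dropped(interface: str) -> int:
    """Read the kernel's rx_dropped counter for an interface from sysfs."""
    path = Path(f"/sys/class/net/{interface}/statistics/rx_dropped")
    return int(path.read_text())


def sample_increase(interface: str, seconds: float, reader=read_rx_dropped) -> int:
    """Return how much the drop counter grew over the given interval."""
    before = reader(interface)
    time.sleep(seconds)
    return reader(interface) - before


def no_receiver_likely(increase_idle: int, increase_during_capture: int) -> bool:
    """True when drops grow with no capture running but stay flat during tcpdump."""
    return increase_idle > 0 and increase_during_capture == 0
```

A possible way to use it: call `sample_increase("eth0", 30)` once with nothing capturing, start tcpdump -ni eth0 host 1.1.1.1 in a second session, call it again, and pass both results to `no_receiver_likely`. The 30 second interval is an arbitrary choice.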

Yes, the drops stop immediately with the tcpdump. This suggests it is indeed other packets as per the Red Hat article - and your comments. Given that cpview on R80.40 reports the drops the same way as ifconfig (the number matches), I think it makes sense for sk61922 to be updated to clarify that this is expected behaviour.

On a CheckPoint appliance running R80.40, RX-DRP from ifconfig, cpview and ethtool are in complete sync. Must be something from virtualization itself in your case.

This issue is apparent on appliances too; I do not believe it is a result of ESXi configuration.

I have a 3200 appliance running in standalone mode that is connected to my home network but is not currently passing any traffic. Since rebooting it yesterday morning, "ifconfig Mgmt" reports 823818 RX packets and 150786 dropped packets, i.e. 18.3% of received packets were dropped. "ip -s link show Mgmt" shows identical figures.

The interface is connected to my switch via an access port, ie no vlan tagging is active on the switch port.

This morning I created a new vlan on my switch and made the switch port a member of that vlan, effectively isolating the appliance from the rest of the network. Having done so, the RX drops have stopped.

This seems to concur with the root cause in the Red Hat article that Paul referred to in the original post, where it is stated that the RHEL7 kernel contains code that updates the rx_dropped counter for other non-error conditions, e.g. "Packets received with unknown or unregistered protocols". When the gateway is on the active vlan, tcpdump shows a lot of "ethertype Unknown" packets; these are from things like access point broadcasts, Sonos speakers etc.

While it could be argued that this is academic, when attempting to isolate a performance problem, packet drops would often be used as an indicator of an issue, and if ifconfig, ip and cpview are all reporting a consistent message in terms of drops, how can this easily be discounted?
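If you want to see which EtherTypes are behind those packets, a rough Python sketch like the one below can tally them from tcpdump output captured with the -e option (which prints the link-level header). The line format in `SAMPLE` is my assumption of what tcpdump -e prints, the sample lines themselves are made up, and the function name is invented. Bear in mind that the drop counter will sit flat while the capture is running, as discussed earlier in the thread.

```python
import re
from collections import Counter

# Matches e.g. "ethertype Unknown (0x88e1)" or "ethertype IPv4 (0x0800)".
ETHERTYPE_RE = re.compile(r"ethertype (.+?) \((0x[0-9a-fA-F]{4})\)")


def tally_ethertypes(lines):
    """Count (hex code, name) pairs found in tcpdump -e output lines."""
    counts = Counter()
    for line in lines:
        match = ETHERTYPE_RE.search(line)
        if match:
            name, code = match.groups()
            counts[(code.lower(), name)] += 1
    return counts


# Hypothetical lines in the assumed tcpdump -e format, not real capture data.
SAMPLE = [
    "10:00:00.000001 00:0c:29:00:00:01 > ff:ff:ff:ff:ff:ff, ethertype Unknown (0x88e1), length 60:",
    "10:00:00.000002 00:0c:29:00:00:02 > 00:0c:29:00:00:03, ethertype IPv4 (0x0800), length 74:",
    "10:00:00.000003 00:0c:29:00:00:01 > ff:ff:ff:ff:ff:ff, ethertype Unknown (0x88e1), length 60:",
]

for (code, name), count in tally_ethertypes(SAMPLE).most_common():
    print(code, name, count)
```

The codes that dominate the tally are the ones worth chasing back to their source on the network.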

As Paul has suggested, as an absolute minimum, sk61922 should be updated to advise of this change in behaviour for R80.40.

RHEL5 (2.6.18) did the same thing with RX-DRPs, and it was mentioned in the first edition of my book way back in 2015 then expanded upon in the third edition with the tcpdump trick, since I kept seeing it over and over. Perhaps RHEL7 (3.10) has changed how it counts them or something but this has been going on for a long time.

For sk61922 it should be mentioned that if there are RX-DRPs, yet no corresponding increase in counters rx_no_buffer_count and/or rx_missed_errors, it is just irrelevant traffic getting dropped by the NIC driver and is not a performance concern. This will also affect @HeikoAnkenbrand's tool that counts RX-DRPs in isolation and alerts if they are above 0.1%. Tagging the master of SK's @PhoneBoy for an update request.
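As a sketch of that rule, the Python below parses two snapshots of ethtool -S output and only flags RX-DRPs as a concern if rx_no_buffer_count or rx_missed_errors grew in the same interval. The function names and return strings are invented for illustration, and the counter names are the ones from the e1000 output earlier in this thread; other drivers may name them differently.

```python
PRESSURE_COUNTERS = ("rx_no_buffer_count", "rx_missed_errors")


def parse_ethtool_stats(text: str) -> dict:
    """Turn 'name: value' lines from ethtool -S output into a dict of ints."""
    stats = {}
    for line in text.splitlines():
        name, sep, value = line.partition(":")
        if sep and value.strip().isdigit():
            stats[name.strip()] = int(value)
    return stats


def classify_rx_drops(rx_drp_delta: int, before: dict, after: dict) -> str:
    """Label an RX-DRP increase using the two buffer-related counters."""
    if rx_drp_delta <= 0:
        return "no new drops"
    grew = [n for n in PRESSURE_COUNTERS if after.get(n, 0) > before.get(n, 0)]
    if grew:
        return "possible buffer pressure: " + ", ".join(grew)
    return "likely unregistered-protocol drops"


# Two snapshots in the format shown earlier; the values are illustrative.
before = parse_ethtool_stats("NIC statistics:\n rx_no_buffer_count: 0\n rx_missed_errors: 0\n")
after = parse_ethtool_stats("NIC statistics:\n rx_no_buffer_count: 0\n rx_missed_errors: 0\n")
print(classify_rx_drops(500, before, after))
```

A monitoring tool that alerts on RX-DRP alone could apply the same check before raising an alarm.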

Thanks @Timothy_Hall

here are the links to the oneliners:

- ONELINER - Interfaces with RX-ERR, RX-DRP and RX-OVR Errors

- ONELINER - All Physical Interface States in one Overview

Actually the person you should tag is @Ronen_Zel 🙂

I meant that on a CheckPoint appliance the RX-DRP values are the same across all the tools, not that an appliance cannot have RX-DRPs for the same reason as on ESXi.

Hi there,

I have the same issue after migrating an open server from R80.30 to R80.40.

There are increasing numbers of rx-drops on only one of the interfaces, and no sign of error with ethtool.

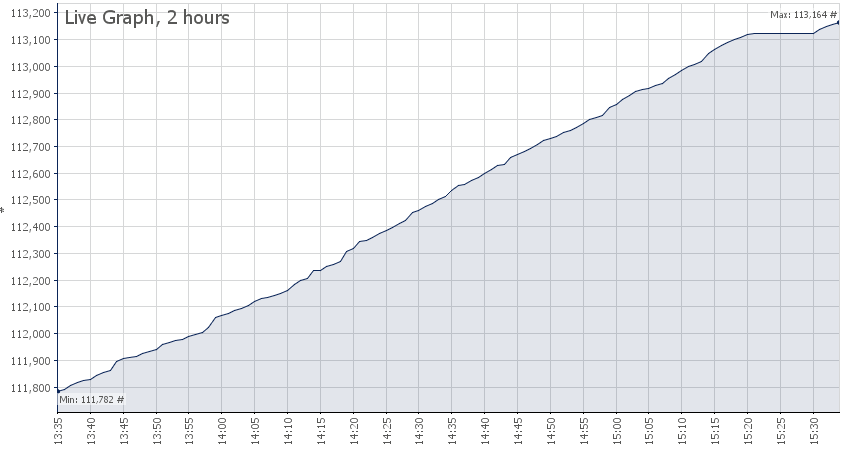

You can see the flat part where tcpdump was running for 10 minutes.

There are no performance impacts or other drops, but it is annoying to see errors increasing without any reason.

Yes, the updated NIC drivers in Gaia 3.10 that you are now using seem much more likely to report unknown EtherType frames as RX-DRPs. I don't think there is any way to disable this behavior, other than trying to keep that unknown traffic from being sent or somehow filtering it out before it reaches the firewall.
